If you’re starting out with digital transformation, you might ask what “cloud native” is and why it matters. This article explains what cloud native means and the key points to remember. Cloud native describes an approach to designing, building, managing, and delivering applications that exploits the advantages of cloud computing and uses a microservice architecture. This architecture makes an application flexible and easy to adapt to a cloud environment, because resources can be allocated efficiently to each service the application uses.

Many companies, including Netflix and Uber, have hundreds or thousands of cloud-native applications in production and are continually deploying new ones. This lets these organizations adapt quickly to market conditions, as they can readily update parts of live apps and scale them as needed. So let’s get started.

What Is Cloud Native?

The term “cloud native” can have a wide variety of interpretations. Netflix, which used cloud technology to transform itself from a mail-order business into one of the largest consumer on-demand video delivery networks, is often credited with popularizing the phrase about a decade ago. In doing so, Netflix helped set the standard for how the industry develops software today.

The phrase “cloud native” describes a way of building software that makes use of containers. It covers applications built and improved with the aid of modern practices and tools such as microservices, Kubernetes, and DevOps.

1. Why Is Cloud Native So Important?

Essentially, cloud native is a means of boosting business velocity. This is done by reorganizing your teams to make the most of the automation and scalability that cloud-native technologies such as Kubernetes provide.

1.1. Cloud Native Architecture Pattern

- Pay-as-you-go: Scale resources when required, optimizing them to the core.

- Self-service infrastructure: Apps are automatically realigned to suit the underlying infrastructure.

- Managed services: Manage the cloud infrastructure from migration and configuration.

- Globally distributed architecture: Install and manage software across the infrastructure.

- Resource optimization: Spin up resources and pay only for the ones used.

- Amazon autoscaling: Abstract each scalable layer and scale specific resources.

- 12-factor methodology: Facilitates seamless collaboration with developers working on the same app.

- Automation and IaC: Maintain the infrastructure at the desired state.

- Automated recovery: Incorporate resilience into the apps right from the beginning.

- Immutable infrastructure: Administrators can replace problematic servers without disturbing the app.

1.2. Benefits Of Cloud Native Architecture

- Auto provisioning

- Faster release

- Optimized cost

- High scalability

- Superior customer experience

- Vendor agnostic

- Simplified IT management

- DevOps culture

- Fault tolerant / Self healing

2. Key Principles Of Cloud Native Development

- Microservices: A microservices architecture is an application development approach in which a large application is built as a suite of modular components or services.

- Containerization: Containers package an application’s code together with its dependencies and isolate the application for deployment.

- Continuous Delivery: Continuous delivery is a software delivery approach in which a development team produces and tests code in short but continuous cycles.

- DevOps: DevOps is a methodology that promotes better communication and collaboration between development and operations teams.

“Cloud native is an approach to building and running applications that fully exploit the advantages of the cloud computing model.”

Source: Pivotal

What Is A Cloud-native Application?

Cloud-native apps are decoupled services packaged as portable, lightweight containers that can be quickly scaled up or down in response to fluctuating demand. These apps are built to exploit the inherent benefits of the cloud computing delivery model, and they are hosted and run in the cloud. By contrast, a plain “native app” is software designed from the ground up for use on a particular operating system or mobile device.

In the cloud, applications are often built with a microservice architecture. This design is flexible and adapts well to a cloud environment, since it allows resources to be allocated efficiently to each of the services the app uses.

Cloud-native apps are favored by DevOps proponents because of their capacity to foster business agility. They differ from conventional cloud-based monolithic apps in their design, construction, and distribution. Shorter application life cycles and increased resilience, manageability, and observability are just some of the characteristics of cloud-native apps.

Elements Of A Cloud-native App

Before we proceed with the details of cloud-native app architecture, let’s go through the elements of a cloud-native app:

- Application design

- API exposure

- Operational design

- DevOps

- Testing

1. Application Design

The ability to offer and iterate on application functionality quickly is one of the most important needs for cloud-native apps. Traditional monolithic applications ship as a single executable. With only one executable to install on a server, deployment is simple, but development is far more difficult, because any code modification requires rebuilding the complete executable.

The microservices strategy, popularized by video streaming company Netflix, lessens the effort of integration and testing by altering the executable deployment model. Microservices break down a single application into several independently running functional components rather than a single huge executable.

This decomposition makes it possible to update and deploy each functional component independently of the other application components. As a result, it addresses several of the monolithic architecture’s problems:

- Due to the autonomous operation of each microservice executable, each microservice may be upgraded individually.

- Integration overhead is decreased, accelerating the pace of capability rollout.

- Irregular workloads can be managed considerably more readily.

- Only the functionality connected to a specific microservice has to be validated, which simplifies testing.

2. API Exposure

Getting several services to interact, receive service requests, and provide data is difficult in microservices application designs. Additionally, the client-facing “front end” microservice must reply to user queries.

RESTful APIs may be used to manage communication in applications built using microservices. These APIs expose an interface that is accessed through a defined protocol. This makes it easy for any caller to know how to format a service request, whether it is another service on the same LAN or a client on the Internet.

Each service treats its API as a “contract.” Although APIs are conceptually simple, they require several supporting components to function as a reliable connectivity mechanism. These include the following:

- API versioning: This lets you offer a new version of an API that supports an updated format and behavior while keeping the current format and behavior available to existing callers of each microservice.

- Throttling: This tracks API call traffic and slows it down by rejecting calls during times of extreme demand.

- Circuit breakers: These measure the response time of microservices. When a service responds too slowly, the circuit breaker returns standby data in its place so that the application as a whole can keep running.

- Data caching: This supports circuit breakers in cases where the service’s data changes infrequently.
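To make the circuit breaker and data caching ideas above more concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a production implementation: the class name `CircuitBreaker`, its parameters (`max_failures`, `reset_after`, `slow_call_seconds`), and the choice to treat slow responses as failures are all hypothetical. The last successful response acts as the cached standby data.

```python
import time
from typing import Any, Callable, Optional


class CircuitBreaker:
    """Guards calls to a service and serves standby data while the circuit is open.

    All names and thresholds here are illustrative assumptions.
    """

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 slow_call_seconds: float = 1.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.slow_call_seconds = slow_call_seconds
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None
        self.last_good: Any = None  # cached response used as standby data

    def call(self, func: Callable[[], Any], fallback: Any = None) -> Any:
        if self.opened_at is not None:
            # Circuit is open: skip the service until the reset window has passed.
            if self.clock() - self.opened_at < self.reset_after:
                return self._standby(fallback)
            # Window elapsed: allow a trial call; one more failure reopens the circuit.
            self.opened_at = None
            self.failures = self.max_failures - 1
        started = self.clock()
        try:
            result = func()
        except Exception:
            self._record_failure()
            return self._standby(fallback)
        if self.clock() - started > self.slow_call_seconds:
            self._record_failure()  # a slow response counts as a failure
        else:
            self.failures = 0
        self.last_good = result
        return result

    def _record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()

    def _standby(self, fallback: Any) -> Any:
        # Prefer the last cached response; otherwise use the caller's default.
        return self.last_good if self.last_good is not None else fallback
```

A caller would wrap each remote request, for example `breaker.call(fetch_prices, fallback={})`, where `fetch_prices` is a hypothetical function. Serving cached data in this way only makes sense when the data changes infrequently, which is the point made in the last bullet.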

3. Operational Design

The expense of putting new code releases into production is one of the main obstacles to operations in conventional settings. New code releases need to deploy the complete program since monolithic architectures combine all of an application’s code into a single executable.

Microservices can make this situation a lot easier, but they can also be difficult for operations groups. This means that organizations must set up monitoring and management systems that can work with microservices applications.

The fact that an application often consists of many more processes presents an immediate difficulty. In a microservices-based design, the following monitoring and management components must be addressed:

- Dynamic application topologies

- Centralized logging and monitoring

- Root cause analysis
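Centralized logging is easier when every service emits logs in the same machine-readable shape. The sketch below shows one possible way to do this with Python’s standard `logging` module. The field names (`service`, `request_id`) and the helper `build_logger` are assumptions made for illustration; a real setup would follow whatever schema the chosen log aggregator expects.

```python
import json
import logging
import sys
from typing import Optional, TextIO


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object per line for a log aggregator."""

    def __init__(self, service: str) -> None:
        super().__init__()
        self.service = service

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "service": self.service,
            "level": record.levelname,
            "message": record.getMessage(),
            # A request ID passed via `extra=` helps trace one request across
            # services, which supports root cause analysis.
            "request_id": getattr(record, "request_id", None),
        }
        return json.dumps(entry)


def build_logger(service: str, stream: Optional[TextIO] = None) -> logging.Logger:
    """Create a logger that writes JSON lines to stdout (or the given stream)."""
    logger = logging.getLogger(service)
    logger.setLevel(logging.INFO)
    logger.handlers.clear()
    logger.propagate = False
    handler = logging.StreamHandler(stream if stream is not None else sys.stdout)
    handler.setFormatter(JsonFormatter(service))
    logger.addHandler(handler)
    return logger
```

A service might then call `build_logger("orders").info("order created", extra={"request_id": "req-1"})`. Writing to standard output leaves collection and routing to the platform, so the service itself does not need to know where logs end up.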

4. DevOps

DevOps is a set of practices aimed at coordinating software development and IT operations. DevOps can help you construct CI/CD pipelines and improve the way you manage the development cycle. Its purpose is to shorten the time between when code is written and when it is deployed to production. Implementing DevOps processes is not simple. Most businesses begin in one of two ways:

- Identify and address a well-known application lifecycle pain point.

- Evaluate the entire application lifecycle using the value chain mapping (VCM) approach.

5. Testing

To prevent a panicked reaction or unplanned downtime of the application, quality assurance testing must be an integral part of the development process and be carried out early. Three types of testing that have often been overlooked but are becoming more important for applications are:

- Integration testing

- Client testing

- Performance/load testing

Basics Of Cloud-native Application Architecture

Cloud-native apps are designed to function optimally with the distributed nature of cloud computing and its ad hoc network of cloud services.

Developers of cloud-native applications must use software-based architectures to connect machines, as not all services are hosted on the same server. Each service runs independently on its own server, which allows applications to scale horizontally as needed.

However, cloud-native apps need to be built with redundancy since the infrastructure that supports them does not operate locally. This makes it possible for the program to continue working in the event of a hardware failure by automatically remapping IP addresses.

The Twelve-Factor App methodology is a popular framework for developing cloud-based applications. It outlines a set of rules for how programmers should build cloud-ready apps. While Twelve-Factor is relevant to any web app, many developers use it specifically when creating cloud-native apps. These principles allow systems to launch quickly, scale, and add functionality in response to market shifts.

The Twelve-Factor approach is summarized in the table below:

| Factor | Description |
| --- | --- |
| Code Base | Each microservice has a single code base, stored in its own repository. It is tracked with version control and can be deployed to multiple environments (QA, Staging, Production). |
| Dependencies | Each microservice isolates and packages its own dependencies, so it can adopt changes independently of the others. |
| Configurations | Configuration data is moved out of the microservice and stored in an external configuration management tool. With the right configuration applied, the same deployment can be used across environments. |
| Backing Services | Ancillary resources such as data stores, caches, and message brokers should be exposed through an addressable URL. Decoupling the resource from the application makes it more flexible and easily replaceable. |
| Build, Release, Run | The build, release, and run stages must be strictly separated for each release. Each release should have its own ID and support rollback. Modern CI/CD systems help enforce this principle. |
| Processes | Each microservice should run autonomously, in its own process. Externalize any required state to a backing service, such as a distributed cache or data store. |
| Port Binding | Each microservice should be self-contained, exposing its interface on its own port for communicating with other services. Doing so isolates it from other microservices. |
| Concurrency | Rather than scaling up one massive instance on the most powerful machine, scale services out horizontally across several copies. Build the application so that it is easy to scale out in the cloud. |
| Disposability | Service instances should be disposable. Prioritize rapid startup to improve scalability and graceful termination to preserve integrity. Docker containers combined with an orchestrator make this criterion easy to meet. |
| Dev/Prod Parity | Keep environments as similar as possible throughout the lifetime of an application. Containers, which encourage a consistent runtime, can be a huge help here. |
| Logging | Treat logs from microservices as event streams and process them with an event aggregator. Send them to a log management and data mining service, such as Azure Monitor or Splunk, and then to a repository for long-term archival. |
| Admin Processes | Run one-off management and administrative tasks, such as data cleanup or analytics, as separate processes. Invoke them from the production environment, but outside of the application, using dedicated tools. |
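As a small illustration of the Configurations and Backing Services factors, the following Python sketch reads settings from environment variables instead of hard-coding them. The variable names (`DATABASE_URL`, `CACHE_URL`, `LOG_LEVEL`) and the `Settings` class are hypothetical; an external configuration management tool could supply the same values.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Settings:
    """Configuration injected at deploy time; field names are illustrative."""
    database_url: str   # backing service addressed by URL
    cache_url: str      # backing service addressed by URL
    log_level: str = "INFO"


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Build settings from the environment so one build runs in any environment."""
    try:
        return Settings(
            database_url=env["DATABASE_URL"],
            cache_url=env["CACHE_URL"],
            log_level=env.get("LOG_LEVEL", "INFO"),
        )
    except KeyError as missing:
        # Fail fast at startup rather than at the first database call.
        raise RuntimeError(f"missing required setting: {missing.args[0]}") from None
```

Because the code only sees URLs, swapping a staging database for a production one is a configuration change rather than a code change, which is what makes the same deployment reusable across QA, Staging, and Production.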

Best Practices For Cloud-native Application Development

The idea behind cloud-native best practices is similar to that of DevOps, which aims to improve business processes. There are no hard and fast rules when it comes to a cloud-native architecture. Companies will take various approaches to development depending on the nature of the business problem they are attempting to solve and the tools at their disposal.

Every step of the cloud-native application lifecycle, from concept to closure, must be planned for and accounted for in the design. These five components make up the whole design:

1. Automate

Using automation, cloud application environments can be provisioned consistently across many cloud service providers. In automated environments, infrastructure as code (IaC) keeps infrastructure definitions in a version-controlled code base so that every change can be tracked.
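The core idea behind declarative automation can be sketched in a few lines of Python: compare the desired state described in code with the actual state of the environment, and derive the steps needed to close the gap. This is a toy model only. The `plan` function and the resource dictionaries are hypothetical and do not reflect the API of any particular IaC tool.

```python
from typing import Dict, List, Tuple

Resource = Dict[str, object]


def plan(desired: Dict[str, Resource],
         actual: Dict[str, Resource]) -> List[Tuple[str, str]]:
    """Return the (action, resource name) steps that make `actual` match `desired`."""
    steps: List[Tuple[str, str]] = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            steps.append(("create", name))
        elif actual[name] != spec:
            steps.append(("update", name))
    for name in sorted(actual):
        if name not in desired:
            steps.append(("delete", name))
    return steps


# Example: the code base asks for 3 web replicas and a database,
# while the environment has 2 web replicas and a leftover cache.
desired_state = {"web": {"replicas": 3}, "db": {"size": "small"}}
actual_state = {"web": {"replicas": 2}, "old-cache": {"size": "small"}}
steps = plan(desired_state, actual_state)
```

Running the same plan against an environment that already matches the desired state yields no steps, which is why repeated automated runs are safe.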

2. Monitor

It is important for teams to keep an eye on both the development environment and the application’s use. Everything from the underlying infrastructure to the application itself should be easy to monitor.

3. Document

Several teams typically work on a cloud-native app, often with limited insight into each other’s work. As the application evolves, tracking how each team contributes becomes crucial, so keeping detailed documentation is essential.

4. Incremental Changes

Changes to the application and its underlying architecture should be implemented in a way that is both gradual and reversible. This lets teams adapt to new circumstances and avoid repeating entrenched mistakes. Keeping infrastructure definitions in version control through IaC lets developers track each change and roll it back if needed.

5. Design For Failure

It’s important to have procedures in place for when problems arise in the cloud. This necessitates the use of test frameworks that mimic failures so that lessons may be gleaned.
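A failure-simulating test can be very small. The Python sketch below wraps a dependency so that a configurable fraction of calls fail, then checks how a simple retry helper behaves. The names `with_faults`, `call_with_retry`, and `InjectedFault` are invented for this example, and the retry policy is an assumption rather than a recommendation.

```python
import random
from typing import Callable, TypeVar

T = TypeVar("T")


class InjectedFault(Exception):
    """Raised in place of a real outage during a failure test."""


def with_faults(func: Callable[[], T], failure_rate: float,
                rng: random.Random) -> Callable[[], T]:
    """Wrap `func` so a fraction of calls fail, simulating an unreliable dependency."""
    def wrapper() -> T:
        if rng.random() < failure_rate:
            raise InjectedFault("simulated outage")
        return func()
    return wrapper


def call_with_retry(func: Callable[[], T], attempts: int = 3) -> T:
    """Call `func`, retrying on injected faults and re-raising after the last attempt."""
    for attempt in range(1, attempts + 1):
        try:
            return func()
        except InjectedFault:
            if attempt == attempts:
                raise
    raise ValueError("attempts must be at least 1")
```

In a test suite, a team might run the application against `with_faults(real_call, 0.2, random.Random(42))` and assert that users still receive a sensible response. Passing a seeded `random.Random` keeps the simulated failures reproducible.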

Features Of A Cloud-native Application

The cloud-native app architecture includes microservices deployed in containers and communicating with one another using APIs. Tools for orchestration are used to coordinate all of these parts. Some of the most important features of these programs include the following:

1. Microservices-based

When an application is divided into microservices, each service is treated as a separate entity. Each service uses its own data and supports a distinct business goal. Application program interfaces (APIs) allow these modules to interact with one another.

2. Container-based

Containers logically isolate an application so that it can run independently of the underlying physical resources. They keep microservices from interfering with one another and prevent any one application from using up all of the host computer’s resources. In addition, they make it possible to run many copies of a service simultaneously.

3. API-based

APIs connect microservices and containers while simplifying management and security. They allow loosely coupled services such as microservices to interact with one another.

4. Dynamically Orchestrated

In order to handle the complexity of container lifecycles, orchestration solutions for containers are utilized. The provisioning and deployment of containers onto nodes in a server cluster, as well as the management of resources, load balancing, and restart scheduling in the event of an internal failure, are all handled by container orchestration tools.
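To give a feel for what placing containers onto nodes involves, here is a deliberately simplified scheduling sketch in Python. Real orchestrators such as Kubernetes weigh many more factors, so the `Node` class, the `schedule` function, and the rule of picking the node with the most free CPU should all be read as assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """A server in the cluster with its remaining capacity (illustrative model)."""
    name: str
    cpu_free: float
    mem_free_mb: int
    containers: List[str] = field(default_factory=list)


def schedule(container: str, cpu: float, mem_mb: int,
             nodes: List[Node]) -> Optional[str]:
    """Place a container on the fitting node with the most free CPU.

    Returns the chosen node's name, or None when no node has enough capacity.
    """
    candidates = [n for n in nodes if n.cpu_free >= cpu and n.mem_free_mb >= mem_mb]
    if not candidates:
        return None
    target = max(candidates, key=lambda n: n.cpu_free)
    # Reserve the resources so later placements see the reduced capacity.
    target.cpu_free -= cpu
    target.mem_free_mb -= mem_mb
    target.containers.append(container)
    return target.name
```

Restart scheduling after a failure can reuse the same function: when a node goes down, the orchestrator calls `schedule` again for each container that was running on it, using the remaining healthy nodes.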

According to survey data published by Statista, re-architecting proprietary solutions into microservices was the most popular cloud-native use case within organizations worldwide in 2022, cited by around 19 percent of respondents. Deploying and testing applications was the second most popular use case among respondents.

Source: Statista

Benefits Of Cloud-native Applications

Cloud-native apps are built specifically to use the scalability and flexibility of cloud computing. Below are just a few of the many advantages of using them:

1. Cost-effective

Computing and storage resources scale as needed. As a result, there is no longer any need to overprovision hardware to handle peak usage. More virtual servers can be deployed quickly for testing purposes, and cloud-native apps can be launched with minimal effort. You can save time, resources, and money by using containers to run as many microservices as possible on a single host.

2. Independently Scalable

Unlike monolithic applications, microservices may each be deployed, managed, and scaled individually. Altering one microservice for scalability will have no effect on the others. A cloud-native design allows for speedier updates to be rolled out to specific parts of a program if necessary.
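Scaling one microservice independently usually comes down to a per-service calculation of how many copies it needs. The Python sketch below uses a simple proportional rule, similar in form to the one documented for the Kubernetes Horizontal Pod Autoscaler. The function name, the load metric, and the replica bounds are assumptions chosen for the example.

```python
import math


def desired_replicas(current_replicas: int, current_load: float,
                     target_load: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Scale a single service in proportion to its own load.

    `current_load` and `target_load` are per-replica averages of the same
    metric, for example CPU utilization in percent.
    """
    if target_load <= 0:
        raise ValueError("target_load must be positive")
    wanted = math.ceil(current_replicas * current_load / target_load)
    # Clamp to the configured bounds so a spike cannot scale without limit.
    return max(min_replicas, min(max_replicas, wanted))


# A checkout service at 90% CPU with a 60% target grows from 4 to 6 replicas.
checkout_replicas = desired_replicas(4, 90.0, 60.0)
```

Because the calculation only looks at one service’s metrics, a busy checkout service can grow while a quiet reporting service stays at its minimum.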

3. Portability

To prevent vendor lock-in, cloud-native apps are designed to be vendor agnostic and use containers to move microservices from one vendor’s infrastructure to another.

4. Reliable

These cloud-based apps employ containers to ensure that the failure of a single microservice has no impact on other services.

5. Easy To Manage

Cloud-native apps use automation to roll out new features and upgrades. All changes made to microservices and other components are kept in a log and visible to developers. Because of the modular nature of microservices, a single engineering team may concentrate on developing a single service without worrying about how it will interact with other services in the application.

6. Visibility

As a result of the encapsulation that microservice design provides, it’s much simpler for development teams to analyze programs and figure out how they work together.

Tools For Cloud-native App Development

| Tool | Description |
| --- | --- |
| Docker | Creates, deploys, and manages virtualized application containers on a common operating system. It isolates resources so that multiple containers can share the same OS without contention. |
| Kubernetes | Manages and orchestrates Linux containers, determining where and how the containers will run. |
| Terraform | Designed for implementing IaC. It defines resources as code and applies version control. |
| GitLab CI/CD | Automates software testing and deployment, and can be used for security analysis, static analysis, and unit testing. |
| Node.js | A JavaScript runtime that helps in creating real-time applications and microservices. |

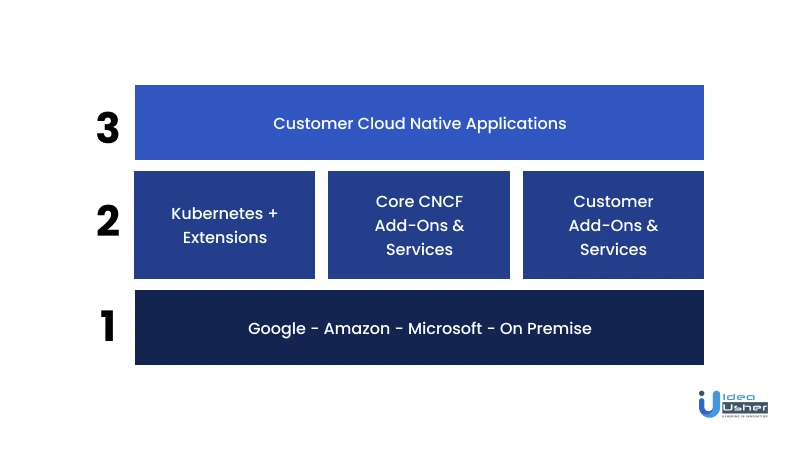

CNCF’s Role

Kubernetes, an open-source platform for automating the deployment, scaling, and management of containerized applications, is hosted by the vendor-neutral Cloud Native Computing Foundation (CNCF), founded in 2015. Amazon, Microsoft, Cisco, and over 300 other organizations contribute to Kubernetes, which was originally designed by Google.

Kubernetes organizes application containers into logical units for administration and discovery. It scales with your app without requiring additional Ops resources.

Automated deployments, including many simultaneous ones, can also be carried out securely. For many people, this way of distributing and updating products is entirely new. All of these ideas are part of the cloud-native revolution.

The CNCF’s major goal is to develop sustainable ecosystems and communities around a constellation of high-quality projects that enable and manage containers for Kubernetes-based cloud-native applications.

The CNCF hosts and supports new cloud-native projects, provides training, and maintains a Technical Oversight Committee, a Governing Board, a community infrastructure lab, and certification programs.

Wrap Up!

Building software specifically for the cloud is the way of the future. The Cloud Native Computing Foundation’s prediction that there would be at least 6.5 million cloud-native developers in 2020, up from 4.7 million in 2019, has since proved accurate. Cloud-native apps address some of the issues plaguing cloud computing. However, there are still several obstacles to overcome when moving to the cloud with the goal of increasing productivity.

The future of cloud-native apps looks promising. If you want to take advantage of this opportunity, get your cloud-native app developed by the experts at Idea Usher today!

FAQ

What is Cloud-native?

The phrase “cloud native” describes an approach to software development and deployment that makes use of the cloud’s decentralized computing infrastructure from the start. Apps developed specifically for the cloud are able to take full advantage of the cloud’s scalability, adaptability, reliability, and durability.

Is cloud-native the same as microservices?

Not exactly. Most definitions of cloud-native applications center on microservices architectures, so the two are closely related. Cloud native is the broader and newer concept: it also covers containers, orchestration, and DevOps practices.

What is one disadvantage of cloud-native application development?

The cloud-native ecosystem is intricate. Redesigning and migrating existing software to the cloud is not a simple task. In addition to a re-architecture process for the cloud, companies need the necessary infrastructure to facilitate this change.

What is next after cloud-native?

Edge computing is widely seen as the next step. With it, tasks are executed locally at the edge when that is suitable and sufficient data is available.