Mathematical reasoning systems differ from most AI applications because correctness matters as much as capability. Solving complex problems requires models that can follow logical steps, verify intermediate results, and maintain consistency across long chains of reasoning. These demands shape the design of an AI mathematical reasoning engine, where reliability and interpretability are as important as raw model performance.

Reliable mathematical reasoning requires more than scaling a general-purpose language model; it also demands structured datasets, symbolic reasoning methods, verification layers, and specialized training pipelines to keep each step logically sound. Platforms such as Harmonic reflect this shift toward hybrid architectures that unite reasoning models, validation, and compute to deliver accurate, explainable outcomes.

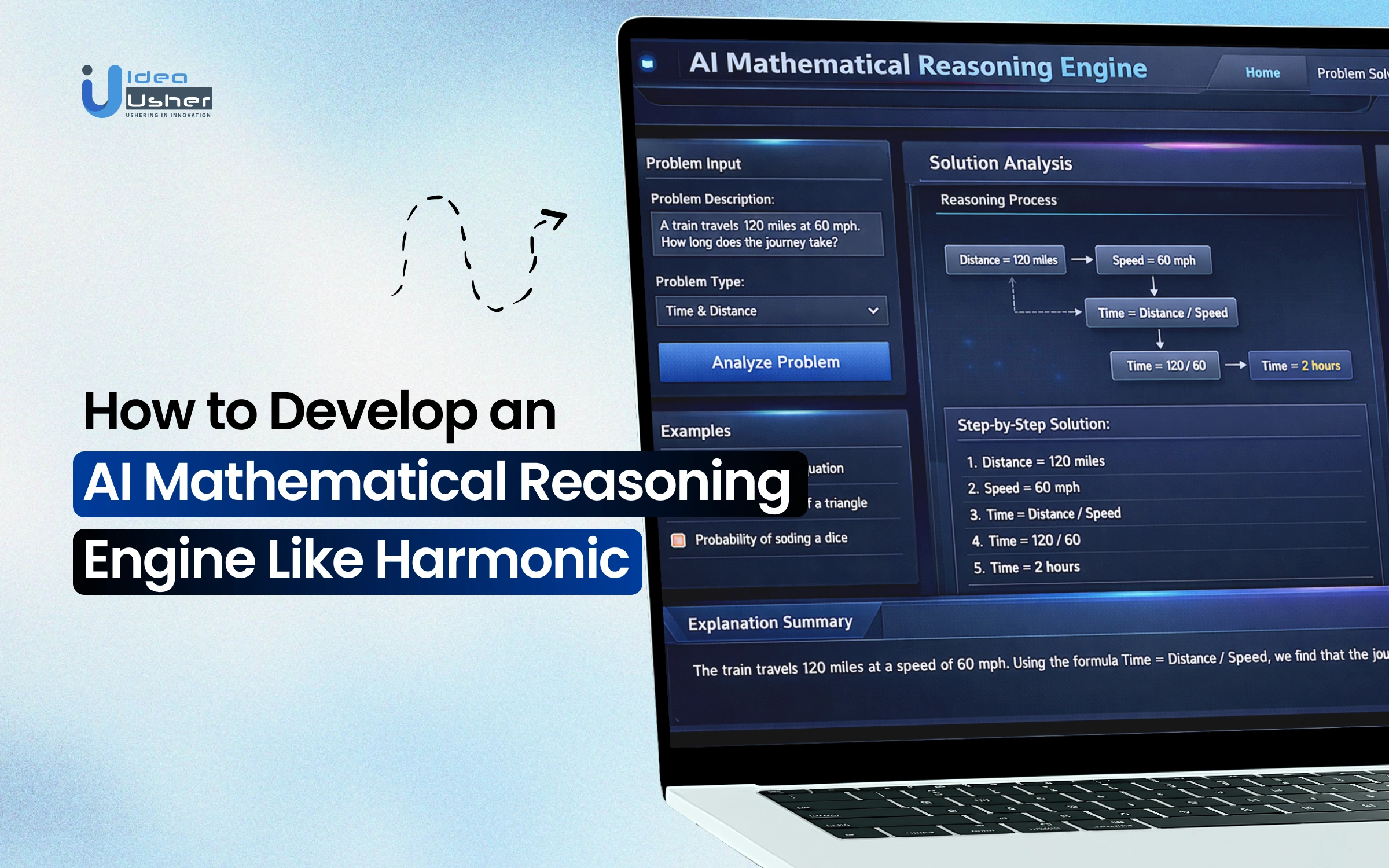

In this blog, we explain how to develop an AI mathematical reasoning engine like Harmonic by breaking down core system components, architectural considerations, and the technical approaches used to support advanced mathematical reasoning in AI systems.

What Is Harmonic, an AI Mathematical Reasoning Engine?

Harmonic is an artificial intelligence research lab that develops a Mathematical Reasoning Engine designed to achieve “Mathematical Superintelligence” (MSI). Unlike general-purpose Large Language Models (LLMs), which rely on probabilistic predictions and often “hallucinate,” Harmonic’s engine expresses its answers as formal proofs that a proof checker can verify mechanically.

Key Characteristics of Harmonic’s Engine

Harmonic’s engine introduces a new approach to AI reasoning by combining advanced language models with formal mathematical verification. Its Aristotle model ensures every solution is logically proven, reducing hallucinations and improving reliability.

- Aristotle Model: Harmonic’s flagship model, Aristotle, is the first publicly available AI that formally verifies its own reasoning.

- Lean 4 Integration: The engine translates natural language problems into Lean 4, a programming language and formal proof assistant, to verify every step of a solution against mathematical axioms.

- Hallucination-Free: By requiring the system to output code rather than just English, Harmonic effectively eliminates logical errors and fabricated facts.

- Mathematical Superintelligence (MSI): The company describes its goal as MSI: AI reasoning capabilities that match or exceed human performance in math-intensive fields.

- High Performance: In 2025, Aristotle achieved Gold Medal-level performance at the International Mathematical Olympiad (IMO) and reached a 96.8% success rate on the VERINA code verification benchmark.
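
To give a feel for what “formally verified” means in practice, here is a minimal Lean 4 sketch. It is not taken from Harmonic’s system; the theorem name is illustrative, while `Nat.add_comm` is a lemma from Lean’s core library.

```lean
-- Lean accepts this file only if each proof really establishes its statement.
theorem add_comm_example (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- A concrete arithmetic fact, checked by evaluation.
example : 2 + 2 = 4 := rfl
```

If either proof were wrong, for instance if the second line claimed `2 + 2 = 5`, the checker would reject the file instead of returning a plausible-looking answer.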

Traditional LLMs vs. Harmonic Reasoning Engine

Harmonic is an AI mathematical reasoning engine that uses formal proof assistants for accuracy in quantitative tasks. Unlike typical models that predict likely words, Harmonic’s Aristotle engine builds solutions in a machine-checkable language for guaranteed correctness. Here is the comparison table:

| Feature | Traditional LLMs (e.g., GPT-4) | Reasoning Engines (e.g., Harmonic) |
| --- | --- | --- |
| Logic Type | Probabilistic: Uses pattern matching to predict the next token based on training data. | Formal/Deductive: Uses strict mathematical axioms and logical rules. |
| Reliability | Heuristic: High risk of “hallucinations” or logical lapses in multi-step math. | Verifiable: Solutions are proven correct by a computer-coded proof checker. |
| Method | Chain-of-Thought: Generates natural language steps that look like reasoning. | Formal Proof Search: Utilizes systems like Lean 4 to build and verify steps. |
| Use Case | Generalist: Optimized for creative writing, coding assistance, and summaries. | Specialist: Designed for safety-critical engineering, finance, and “Math Superintelligence.” |

A. Business Model: “Truth-as-a-Service”

Harmonic positions itself as the “antidote to hallucinations” by providing a platform where every AI output is mechanically verified through the Lean4 proof assistant.

- Target Market Segments: Safety-critical industries including Aerospace, Automotive, Quantitative Finance, and Software Engineering.

- Core Value Proposition: Replacing “probabilistic guessing” with “formal verification,” ensuring 100% accuracy in mathematical and logical tasks.

- Strategic Partnerships: The company leverages partnerships to integrate its reasoning engine into enterprise verification standards.

- Product Offering: The flagship engine, Aristotle, which translates natural-language problems into formally verifiable proofs.

B. Revenue Model

While currently offering a free public API for researchers and mathematicians to build trust, Harmonic’s commercialization roadmap includes:

- Usage-Based API Pricing: Charging developers and enterprises based on the volume of reasoning tasks or proof verifications.

- Enterprise Pilots & Subscriptions: Direct engagement with large firms for specialized deployments in regulated environments.

- Premium Support: Providing expert-level assistance for implementing formal verification in industrial workflows.

- Government Contracts: Positioning “certified correctness” as a way to reduce procurement barriers for public sector safety projects.

Financial Details

Harmonic has quickly gained strong investor support, reaching unicorn status after its latest funding round. Backing from major venture firms reflects confidence in the company’s vision for advanced AI reasoning.

- Valuation & Funding: As of November 2025, the company reached Unicorn status with a valuation of $1.45 billion following a $120 million Series C round.

- Total Funding: ~$295 million from top-tier investors like Sequoia Capital, Kleiner Perkins, and Ribbit Capital.

- Founders: Co-founded by Vlad Tenev, CEO of Robinhood, and Tudor Achim, CEO of Harmonic.

Why Is the AI Mathematical Reasoning Engine Market Growing?

The AI Reasoning Models market is growing rapidly, with a CAGR of 21.90%. Valued at $4.6 billion, it is projected to hit $22.8 billion by 2033, growing 23.40% annually. This surge highlights the growing demand for advanced systems, pushing companies to develop AI mathematical reasoning engines like Harmonic for solving complex problems at scale.

Harmonic’s Aristotle model achieved gold-medal-level performance at the 2025 International Mathematical Olympiad (IMO), solving 5 out of 6 complex problems with 100% formally verified solutions.

Recent benchmarks further demonstrate how quickly AI reasoning capabilities are advancing. In 2025, top-performing models achieved 94.6% on the American Invitational Mathematics Examination (AIME), a level widely considered superhuman compared to the median human participant.

Systems such as AlphaProof have even reached silver-medal performance at the International Mathematical Olympiad, successfully solving 4 out of 6 elite-level problems.

Meanwhile, in the GPQA Diamond benchmark, a graduate-level science test, leading reasoning models scored 93.2%, significantly surpassing the 70% human expert baseline, highlighting the rapid progress of AI in advanced analytical and scientific reasoning.

Real-World Applications of an AI Mathematical Reasoning Engine

The integration of formal logic with generative AI opens doors to fields where “mostly correct” is not good enough. By ensuring 100% verification, these engines move from simple calculators to sophisticated intellectual partners.

1. AI Tutors for Advanced Mathematics Learning

These engines act as personalized mentors that explain the “why” behind every step. They identify specific logical fallacies in a student’s work and offer interactive, Socratic guidance to bridge conceptual gaps.

Real-World Example: A university student struggling with epsilon-delta proofs in Real Analysis uses the engine to receive a step-by-step breakdown that flags exactly where their logical deduction fails.

2. Automated Theorem Proving for Research

Mathematicians use these engines to verify complex conjectures that are too dense for manual peer review. By translating informal proofs into formal code, the AI can find counterexamples or fill in “obvious” gaps in research papers.

Real-World Example: The Liquid Tensor Experiment formalized a theorem of Peter Scholze in Lean, a result whose proof was very hard to audit by hand. A reasoning engine aims to automate more of this kind of formalization work.

3. Engineering and Scientific Problem Solving

Mathematical precision is a safety requirement in fields like aerospace and structural engineering. These engines automate the verification of stress-test models, ensuring that the underlying calculus adheres strictly to physical laws.

Real-World Example: An aerospace firm uses the engine to mathematically verify the control logic algorithms for autonomous drones, ensuring the flight equations remain stable under extreme turbulence.

4. Mathematical Assistants for Analysts

These engines simplify the implementation of complex algorithms for data scientists and software engineers. They generate optimized, bug-free code for cryptographic functions by verifying the logic against formal specifications.

Real-World Example: A blockchain developer uses the engine to verify the Zero-Knowledge Proof (zk-SNARK) arithmetic circuits in a smart contract to ensure no funds can be drained via a mathematical loophole.

Core Capabilities of an AI Mathematical Reasoning Engine

AI mathematical reasoning engines are designed to solve complex problems using formal logic and structured computation. They combine symbolic reasoning, proof validation, and language translation to deliver accurate, transparent mathematical solutions.

1. Symbolic Reasoning & Theorem Understanding

These engines process abstract structures rather than just numerical data. By manipulating variables and operators, they navigate complex axioms to verify conjectures and explore mathematical relationships, ensuring structural integrity across diverse theoretical frameworks.

2. Step-by-Step Proof Validation

The engine constructs a transparent logical chain rather than jumping to conclusions. Each inference is cross-referenced against established rules, allowing users to verify the “how” and “why” behind every derived mathematical truth.

3. Natural Language Autoformalization

This capability bridges the gap between human intuition and formal precision. It translates ambiguous word problems into rigorous notation, mapping linguistic intent to computable functions while maintaining the original problem’s underlying semantic constraints.
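
As a small illustration of autoformalization, consider the informal claim “the sum of two even natural numbers is even.” The Lean 4 sketch below is one possible formal rendering, written by hand for this article rather than produced by any particular engine; the theorem name and the choice to spell out “even” as `∃ k, a = 2 * k` are assumptions made for the example.

```lean
-- Informal: "The sum of two even natural numbers is even."
-- Formal: if a = 2 * k and b = 2 * m for some k and m, then a + b = 2 * n for some n.
theorem even_add_even (a b : Nat)
    (ha : ∃ k, a = 2 * k) (hb : ∃ m, b = 2 * m) :
    ∃ n, a + b = 2 * n :=
  match ha, hb with
  | ⟨k, hk⟩, ⟨m, hm⟩ => ⟨k + m, by rw [hk, hm, Nat.mul_add]⟩
```

The translation step forces the vague word “even” to become an explicit definition, which is where many ambiguities in word problems surface.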

4. Automated Derivation & Computation

The system systematically applies algebraic transformations to isolate variables or simplify expressions. It identifies the most efficient computational path, performing calculus, linear algebra, or differential operations with speed and perfect algorithmic accuracy.

5. Interactive Research Collaboration

Acting as a collaborative partner, the engine provides real-time feedback and hints. Researchers can test hypotheses rapidly, while students gain deeper insights by exploring alternative solution paths in a dynamic, responsive environment.

Key Features of an AI Mathematical Reasoning Engine Like Harmonic

AI mathematical reasoning engines like Harmonic are designed to solve complex mathematical problems using advanced symbolic reasoning and machine learning techniques. They help automate theorem proving, problem solving, and mathematical discovery across scientific and engineering domains.

1. Formal Verification via Lean 4 Integration

The system translates informal mathematics into Lean 4, a functional programming language and theorem prover. This ensures every step is logically airtight, providing a rigorous computational foundation that guarantees absolute mathematical correctness and consistency.

2. Symbolic Mathematical Reasoning

Unlike standard LLMs that predict tokens, this engine manipulates abstract symbols and structures. It applies precise algebraic rules and logical identities, allowing it to solve complex variables without the errors common in purely statistical models.

3. Autoformalization of Natural Language

This feature converts messy, human-written word problems into machine-readable formal code. By bridging the gap between natural language and strict logic, it allows researchers to verify intuitive ideas using high-level computational tools.

4. Proof Generation and Validation

The engine breaks down complex conjectures into granular, logical “atoms.” Each intermediate step is individually validated against established axioms, creating a transparent audit trail that ensures the final conclusion is reached through sound deduction.

5. Socratic “Agentic” Problem Solving

Operating as a proactive agent, the AI asks clarifying questions and explores multiple pathways. This recursive reasoning mimics a human mathematician’s internal monologue, refining its strategy dynamically until it identifies the most elegant solution.

6. Automated Theorem Proving and Testing

The engine can autonomously search for proofs or generate counterexamples to test new hypotheses. By leveraging brute-force logic and heuristics, it accelerates mathematical discovery, helping researchers validate or debunk theoretical claims at scale.

7. Hallucination-Resistant Logical Reasoning

The system eliminates “plausible-sounding” but incorrect math by anchoring outputs to a formal kernel. It prioritizes logical validity over linguistic fluency, ensuring that every output is grounded in verifiable truth rather than statistical probability.

8. Interactive Problem Solving

Designed for collaboration, the engine provides real-time feedback and hints. It acts as a digital tutor or co-researcher, guiding users through difficult derivations while allowing them to steer the direction of the inquiry.

9. Advanced Calculus and Geometry Computation

The engine masters high-level domains, from multi-variable calculus to non-Euclidean geometry. It handles complex spatial and analytical tasks, performing exact calculations that exceed the capabilities of basic calculators or general-purpose artificial intelligence models.

10. Self-Checking Mathematical Inference Engine

The system employs a closed-loop verification process where it critiques its own logic. Before presenting an answer, it runs internal diagnostics to ensure the inference chain is unbroken, significantly increasing the reliability of results.

How to Develop an AI Mathematical Reasoning Engine Like Harmonic

Building a reasoning platform requires a shift from “creative generation” to “logical architecture.” Success depends on creating a seamless feedback loop between neural models and rigid symbolic solvers.

1. Consult & Define Mathematical Domains

Success starts with a narrow scope; a “universal” math AI often fails at specialized logic. Defining the domain dictates which symbolic libraries and formal languages are prioritized in the backend.

- Academic Research & Formal Methods: Focuses on integration with Lean 4 or Isabelle/HOL to assist in verifying novel proofs and complex conjectures.

- Quantitative Finance & Engineering: Prioritizes numerical stability, stochastic calculus, and integration with high-performance computing (HPC) environments.

- Educational Scaffolding: Emphasizes pedagogical accuracy, ensuring the engine can explain why a step was taken, not just provide the result.

2. Build Structured Mathematical Datasets

Standard web-scraped data is often “noisy” and lacks logical structure. High-quality reasoning requires datasets that mirror the deductive process of a human expert.

- Synthetic Data Generation: Using symbolic engines to reverse-engineer problems from known solutions, ensuring 100% ground-truth accuracy in the training set.

- Chain-of-Thought (CoT) Curation: Mining “rationales” from peer-reviewed journals and LaTeX-heavy repositories (like arXiv) to teach the model the process of derivation.

- Negative Case Mapping: Intentionally including common mathematical pitfalls and “non-examples” to train the model on what not to do.
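
The synthetic-generation idea above can be sketched in a few lines of Python. This is a toy example under stated assumptions: the function names and record layout are hypothetical, and the problems are simple linear equations. It shows the pattern of choosing the solution first and building the problem around it, so the label is correct by construction.

```python
import random
from fractions import Fraction


def make_linear_problem(rng: random.Random) -> dict:
    """Pick the solution first, then build a*x + b = c around it."""
    x = Fraction(rng.randint(-20, 20), rng.randint(1, 5))  # known ground-truth solution
    a = rng.choice([n for n in range(-9, 10) if n != 0])   # nonzero coefficient
    b = rng.randint(-50, 50)
    c = a * x + b                                          # exact rational arithmetic
    return {
        "problem": f"Solve for x: {a}*x + {b} = {c}",
        "a": a, "b": b, "c": c,
        "solution": x,
    }


def check(record: dict) -> bool:
    """Re-derive the solution from the equation and compare with the stored label."""
    return (record["c"] - record["b"]) / record["a"] == record["solution"]


rng = random.Random(0)  # fixed seed so the dataset is reproducible
dataset = [make_linear_problem(rng) for _ in range(1000)]
```

Using `Fraction` instead of floats keeps every label exact, which matters when the data is later compared against a symbolic checker.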

3. Train AI Models for Mathematical Reasoning Tasks

Generic LLMs are “System 1” thinkers that are fast and intuitive but error-prone. Training for math requires forcing the model into “System 2,” which is slow, deliberate, and logical.

- Supervised Fine-Tuning (SFT): Training on high-quality, step-by-step solutions to align the model’s linguistic output with formal logical structures.

- Process Reward Models (PRM): Developing a “Critic” model that scores every individual step in a proof. This prevents “reward hacking,” where a model gets the right answer through a flawed logical path.

- Reinforcement Learning from Logic (RLL): Using a formal verifier as the reward signal. If the code compiles and the proof is verified, the model is rewarded; if it fails, it is penalized.
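
A minimal sketch of the two reward signals described above, assuming a hypothetical `verify` callback that stands in for a real proof checker. The toy verifier here only checks lines of the form `a + b = c`, and taking the minimum of the step scores is one common aggregation choice, not the only one.

```python
from typing import Callable, Sequence


def outcome_reward(proof: str, verify: Callable[[str], bool]) -> float:
    """Verifier-as-reward: +1 if the whole proof checks, -1 otherwise."""
    return 1.0 if verify(proof) else -1.0


def process_reward(step_scores: Sequence[float]) -> float:
    """Aggregate per-step critic scores; using min means one bad step sinks the chain."""
    return min(step_scores) if step_scores else 0.0


def toy_verify(proof: str) -> bool:
    """Stand-in for a real checker: every line 'a + b = c' must actually hold."""
    for line in proof.strip().splitlines():
        lhs, rhs = line.split("=")
        if sum(int(term) for term in lhs.split("+")) != int(rhs):
            return False
    return True


good = outcome_reward("2 + 3 = 5\n5 + 5 = 10", toy_verify)
bad = outcome_reward("2 + 2 = 5", toy_verify)
chain = process_reward([0.9, 0.2, 0.95])
```

In a real pipeline the per-step scores would come from a trained critic model and `verify` would call a proof assistant; both are replaced by stubs here.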

4. Develop Symbolic Reasoning Modules

The symbolic engine is the “hard-coded” logic center that handles the heavy lifting of exact calculations where neural networks traditionally fail.

- Computational Algebra Systems (CAS): Integrating engines like SymPy or WolframEngine to handle exact symbolic manipulation (e.g., factoring large polynomials or solving ODEs).

- Abstract Syntax Trees (AST): Converting math expressions into tree-based structures to perform transformations without “hallucinating” numbers or variables.

- Constraint Solvers: Implementing SAT/SMT solvers (like Z3) to handle logical satisfiability and check for contradictions in user-provided assumptions.
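
To make the AST idea concrete, the sketch below uses only Python’s standard `ast` and `fractions` modules, not SymPy or Z3. It parses an arithmetic expression into a tree and evaluates it with exact rational arithmetic. The function names are illustrative, and the supported grammar (integers, `+`, `-`, `*`, `/`, unary minus) is deliberately small.

```python
import ast
import operator
from fractions import Fraction

# Map AST operator node types to exact arithmetic on Fractions.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def _eval(node: ast.AST) -> Fraction:
    """Recursively evaluate a restricted expression tree."""
    if (isinstance(node, ast.Constant) and isinstance(node.value, int)
            and not isinstance(node.value, bool)):
        return Fraction(node.value)
    if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
        return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
    if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
        return -_eval(node.operand)
    # Floats, names, calls, and anything else are rejected rather than guessed at.
    raise ValueError(f"unsupported expression element: {type(node).__name__}")


def evaluate_exact(expr: str) -> Fraction:
    """Parse the expression into an AST and evaluate it without rounding."""
    tree = ast.parse(expr, mode="eval")
    return _eval(tree.body)
```

For example, `evaluate_exact("1/3 + 1/6")` returns `Fraction(1, 2)`, whereas floating-point evaluation of the same expression would carry rounding error. Rejecting unknown nodes is a design choice: the symbolic layer should fail loudly instead of producing an approximate answer.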

5. Integrate LLM Reasoning with Symbolic Engines

This is the “Orchestration Layer” where the system balances creative hypothesis generation with rigid computational execution. Success depends on the AI’s ability to switch between “System 1” (intuitive drafting) and “System 2” (logical verification) dynamically.

- Neuro-Symbolic Orchestrator: Routes sub-tasks needing exact calculation to the symbolic backend instead of guessing results.

- Code-as-Reasoning: Translates logical steps into executable scripts (Python, Wolfram, Lean), so complex operations use specialized algorithms instead of probabilistic prediction.

- Verification Feedback Loop: The symbolic engine returns results or errors. If the code fails, the LLM self-debugs by analyzing traces, correcting assumptions, and retrying.

- Contextual Re-Injection: Verified symbolic output is integrated into natural language explanations, making proofs cohesive and grounded in deterministic truth.
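
The verification feedback loop above can be sketched as a propose-check-retry function. Everything here is a stand-in: `stub_propose` plays the role of the LLM and `exact_check` plays the role of the symbolic backend, and both names are hypothetical.

```python
from typing import Callable, Optional, Tuple

Proposer = Callable[[str, Optional[str]], str]  # (problem, last_error) -> candidate
Checker = Callable[[str], Tuple[bool, str]]     # candidate -> (ok, error_message)


def solve_with_feedback(problem: str, propose: Proposer, check: Checker,
                        max_attempts: int = 3) -> Tuple[Optional[str], int]:
    """Ask for a candidate, verify it, and feed any error back into the next attempt."""
    error: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        candidate = propose(problem, error)
        ok, message = check(candidate)
        if ok:
            return candidate, attempt
        error = message  # the proposer sees this on the next round
    return None, max_attempts


def stub_propose(problem: str, last_error: Optional[str]) -> str:
    """Toy 'model': wrong on the first try, corrected once it sees an error."""
    return "381" if last_error is None else "391"


def exact_check(candidate: str) -> Tuple[bool, str]:
    """Toy 'symbolic backend' for the fixed problem 17 * 23."""
    if int(candidate) == 17 * 23:
        return True, ""
    return False, f"{candidate} is not the product of 17 and 23"


answer, attempts = solve_with_feedback("17 * 23", stub_propose, exact_check)
```

The important property is that the loop never returns an unverified candidate: it either returns something the checker accepted or reports failure with `None`.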

6. Build APIs and Developer Interfaces

The interface must bridge the gap between abstract math and usable software, providing high-fidelity rendering and structured data.

- LaTeX/MathML Engine: Essential for rendering complex notations; must support dynamic updates as the “Chain of Thought” unfolds.

- Structured Reasoning Traces: Providing the API response as a JSON object containing the final answer, the intermediate steps, and the “Verification Status” for each step.

- Formalization-as-a-Service: Allowing developers to send English text and receive back valid Lean 4 or Python code representing the problem.
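
One possible shape for a structured reasoning trace is sketched below with Python dataclasses. The field names (`verification_status`, `all_steps_verified`, and so on) are assumptions made for this example, not a published schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Step:
    index: int
    statement: str
    verification_status: str  # "verified", "failed", or "unchecked"


@dataclass
class ReasoningTrace:
    problem: str
    final_answer: str
    steps: List[Step] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the trace and add a summary flag for quick client-side checks."""
        payload = asdict(self)
        payload["all_steps_verified"] = bool(self.steps) and all(
            s.verification_status == "verified" for s in self.steps
        )
        return json.dumps(payload, indent=2)


trace = ReasoningTrace(
    problem="Solve 2x + 3 = 11",
    final_answer="x = 4",
    steps=[
        Step(1, "2x = 8", "verified"),
        Step(2, "x = 4", "verified"),
    ],
)
response_body = trace.to_json()
```

Keeping a per-step status, rather than a single pass or fail flag, lets a client highlight exactly which step failed verification.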

7. Test Accuracy with Benchmark Datasets

Standard NLP metrics like BLEU or ROUGE are useless here. Testing must be based on “Functional Correctness” and “Logical Soundness.”

- Hard-Math Benchmarks: Testing against MATH, GSM8K, and TheoremQA to evaluate performance against human competitive standards.

- Adversarial Perturbation: Slightly modifying numbers or constants in a problem to ensure the model is actually reasoning rather than memorizing a training example.

- Verification Rate: Measuring the percentage of AI-generated proofs that successfully pass through a formal compiler (like Lean).
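
Two of the metrics above are simple enough to sketch directly. The helper names are hypothetical, and `verify` again stands in for a call to a real proof checker.

```python
import random
import re
from typing import Callable, Iterable


def verification_rate(proofs: Iterable[str], verify: Callable[[str], bool]) -> float:
    """Fraction of generated proofs that the checker accepts."""
    proofs = list(proofs)
    if not proofs:
        return 0.0
    return sum(1 for p in proofs if verify(p)) / len(proofs)


def perturb_numbers(problem: str, rng: random.Random, spread: int = 3) -> str:
    """Replace each integer in the text with a slightly larger one."""
    def shift(match: re.Match) -> str:
        return str(int(match.group()) + rng.randint(1, spread))
    return re.sub(r"\d+", shift, problem)


rate = verification_rate(["ok", "bad", "ok", "ok"], lambda p: p == "ok")
original = "A train travels 60 km in 2 hours"
perturbed = perturb_numbers(original, random.Random(1))
```

A model that solved the original problem but fails on the perturbed variant is likely pattern-matching on memorized numbers. Note that the expected answer for each perturbed problem has to be recomputed separately.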

8. Launch and Improve Reasoning Performance

The final stage is an iterative “Flywheel” where real-world failures become the training data for the next version.

- Active Learning Loops: Capturing “Edge Case” failures from users and using them to fine-tune the symbolic and neural layers.

- Performance Telemetry: Monitoring the “Logical Depth” of successful proofs to identify where the engine struggles (e.g., handles Algebra well but fails at Topology).

- Continuous Integration of Theorems: Regularly updating the internal “knowledge base” with new lemmas and axioms to expand the engine’s problem-solving horizon.

Estimated Cost Breakdown for AI Reasoning Platform Development

The following table outlines the estimated investment required to build an enterprise-grade mathematical reasoning platform. These figures reflect the specialized nature of neuro-symbolic engineering and formal verification expertise.

| Development Phase | What We Deliver | Estimated Cost Range |
| --- | --- | --- |
| Define Math Domains & Users | Define mathematical scope, user personas, platform goals, and technical requirements for reasoning engine | $5,000 – $10,000 |
| Build Structured Math Datasets | Curate structured math datasets, chain-of-thought examples, and synthetic reasoning data for model training | $15,000 – $35,000 |
| Train AI Reasoning Models | Fine-tune AI models on mathematical reasoning tasks using SFT, PRM evaluation, and logic feedback | $30,000 – $70,000 |
| Develop Symbolic Reasoning Modules | Build symbolic computation layer using CAS tools, constraint solvers, and structured math expression processing | $20,000 – $50,000 |
| Integrate LLM With Symbolic Engines | Develop neuro-symbolic orchestration layer enabling LLM reasoning with symbolic verification and computation | $25,000 – $60,000 |
| Build APIs & Developer Interfaces | Create APIs, reasoning endpoints, LaTeX rendering, structured outputs, and developer integration tools | $15,000 – $35,000 |
| Test With Benchmark Datasets | Validate reasoning accuracy using math benchmarks, adversarial tests, and formal proof verification pipelines | $10,000 – $25,000 |
| Launch MVP & Optimize Performance | Deploy MVP platform, implement monitoring, active learning loops, and continuous reasoning model improvements | $20,000 – $45,000 |

Estimated Cost: $140,000 – $330,000+ (the sum of the phase ranges above)

Note: Actual AI mathematical reasoning engine development cost may vary based on AI model complexity, datasets, infrastructure requirements, integrations, and engineering expertise.

Consult with IdeaUsher and our expert developers to understand the core working process, clarify your business objectives, and plan the development accordingly for your AI mathematical reasoning platform.

Key Financial Considerations

Building an AI mathematical reasoning engine requires substantial financial and technical investment, mainly due to high compute needs, specialized expertise, and scalable infrastructure for deployment.

- Compute Costs: The “Model Training” phase is highly dependent on GPU availability and the size of the base model (e.g., Llama 3 70B vs. specialized smaller models).

- Expertise: Building symbolic modules and Lean 4 integrations requires PhD-level expertise in computational mathematics, which is reflected in the development costs.

- Scalability: Costs for APIs and MVP launch include the initial infrastructure setup for high-concurrency mathematical processing.

Technology Stack for Building a Harmonic-Like Engine

Building a mathematical reasoning engine requires a hybrid architecture that blends the linguistic fluency of deep learning with the absolute precision of formal logic.

| Component | Role in Mathematical Reasoning | Recommended Technologies |
| --- | --- | --- |
| Large Language Models (LLMs) | Handles natural language understanding, informal-to-formal translation (Autoformalization), and high-level strategy/planning. | GPT-4o, Claude 3.5 Sonnet, or specialized models like DeepSeek-Math or Llama-3-70B fine-tuned on LaTeX/Lean. |
| Symbolic Computation & Provers | Acts as the “truth kernel.” Verifies logical steps, solves algebraic equations, and prevents hallucinations. | Lean 4 (Interactive Theorem Prover), Z3 (SMT Solver), SymPy (Python symbolic math), or WolframEngine. |
| Vector Databases | Stores and retrieves high-dimensional embeddings of mathematical formulas, papers, and existing proof libraries. | Pinecone, Milvus, or Weaviate using specialized encoders like MathBERT for semantic formula matching. |
| Knowledge Graphs | Maps the relationships between mathematical entities (e.g., Is-A, Proves, Equivalent-To), enabling multi-hop logical traversal. | Neo4j or Amazon Neptune to structure Mathlib (Lean’s library) into a navigable graph. |
| HPC Infrastructure | Provides the raw compute for intensive proof searches, reinforcement learning (RL), and model inference at scale. | NVIDIA H100/A100 clusters, CUDA for parallelized proof search, and Kubernetes for GPU orchestration. |

Key Integration Points

- The LLM-Prover Loop: The LLM generates a candidate proof step in a formal language like Lean 4, which the Prover then checks. If the Prover returns an error, the LLM uses that feedback to self-correct.

- Graph-Augmented RAG: When faced with a complex conjecture, the system queries the Knowledge Graph to find related theorems, using the Vector Database to pull in similar proof patterns from unstructured research papers.
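
The multi-hop lookup behind graph-augmented retrieval can be illustrated with a plain breadth-first traversal. This sketch uses an in-memory dictionary instead of Neo4j or a vector store. The tiny graph is a hand-written toy based on the usual textbook dependency chain in real analysis, and the function name is hypothetical.

```python
from collections import deque
from typing import Dict, List, Tuple

Graph = Dict[str, List[Tuple[str, str]]]  # node -> [(relation, neighbor), ...]


def related_within(graph: Graph, start: str, max_hops: int) -> Dict[str, int]:
    """Return every node reachable from start in at most max_hops, with its distance."""
    seen = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if seen[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for _relation, neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen[neighbor] = seen[node] + 1
                queue.append(neighbor)
    del seen[start]
    return seen


toy_graph: Graph = {
    "Mean Value Theorem": [("uses", "Rolle's Theorem")],
    "Rolle's Theorem": [("uses", "Extreme Value Theorem")],
    "Extreme Value Theorem": [("uses", "Bolzano-Weierstrass Theorem")],
}
nearby = related_within(toy_graph, "Mean Value Theorem", max_hops=2)
```

The retrieved theorem names would then be placed in the model’s context so that its next proof step can cite results that actually exist.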

Key Challenges in Building Mathematical Reasoning AI

Building mathematical reasoning AI involves challenges such as handling complex symbolic logic, ensuring accuracy, and scaling problem-solving capabilities. Our developers address these with advanced algorithms, robust validation systems, and optimized AI architectures.

1. Ensuring logical correctness in generated proofs

Challenge: LLMs often produce “hallucinated” proofs that appear linguistically fluent but contain subtle, catastrophic logical gaps or incorrect axiomatic applications.

Solution: Our developers integrate Lean 4 or Coq as a formal verification kernel. Every LLM-generated step is passed through these provers to ensure 100% mathematical validity before final output.

2. Handling long-chain mathematical reasoning

Challenge: Models frequently lose the “logical thread” in multi-step problems, leading to error accumulation where a single early mistake derails the entire solution.

Solution: We implement Chain-of-Thought (CoT) reasoning combined with Search-tree algorithms (MCTS). This allows the engine to explore multiple reasoning paths and backtrack when a specific logical branch reaches a dead end.
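
The backtracking behavior can be shown with a much simpler search than MCTS. The sketch below is a plain depth-limited depth-first search over a toy puzzle (reach a target number using “multiply by 2” and “add 3”). It is a stand-in for the idea of abandoning dead-end branches, not an implementation of MCTS, and all names are illustrative.

```python
from typing import Callable, Dict, List, Optional

Moves = Dict[str, Callable[[int], int]]


def search(state: int, target: int, moves: Moves, max_depth: int,
           path: Optional[List[str]] = None) -> Optional[List[str]]:
    """Try each move in turn; return the first path that reaches the target."""
    path = [] if path is None else path
    if state == target:
        return path
    if len(path) == max_depth:
        return None  # dead end: the caller backtracks and tries its next move
    for name, apply_move in moves.items():
        result = search(apply_move(state), target, moves, max_depth, path + [name])
        if result is not None:
            return result
    return None


moves: Moves = {"*2": lambda n: n * 2, "+3": lambda n: n + 3}
plan = search(1, 11, moves, max_depth=3)
```

Here the search first explores branches starting with `*2`, finds that none reaches 11 within three steps, backtracks, and then succeeds with `+3`, `*2`, `+3`. A production system would replace the fixed move order with learned priorities and the equality test with a proof checker.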

3. Training models on high-quality math datasets

Challenge: Standard web-crawl data is riddled with poorly formatted math, inconsistent notation, and incorrect solutions, which degrades the model’s specialized reasoning capabilities.

Solution: We curate high-signal datasets from arXiv, Mathlib, and StackExchange, using specialized LaTeX parsers. We also utilize synthetic data generation to create millions of verified, step-by-step problem-solution pairs.

4. Reducing hallucinations in mathematical outputs

Challenge: AI models prioritize “plausible” token prediction over factual accuracy, often inventing mathematical identities or constants that do not actually exist.

Solution: Our team builds a Retrieval-Augmented Generation (RAG) pipeline connected to a verified Mathematical Knowledge Graph. This forces the model to ground its reasoning in established theorems rather than statistical guesswork.

Future of AI Mathematical Reasoning Systems

The next frontier for AI involves moving beyond calculation to true conceptual innovation. By 2026, mathematical reasoning systems are evolving into “Co-Mathematicians” that don’t just solve problems but propose new axioms and frameworks that will define the next century of science.

1. AI Solving Complex Research-Level Mathematics

Future engines will address long-standing conjectures by exploring vast logical possibilities. These systems go beyond basic arithmetic to manage high-level abstraction, linking fields like topology and number theory.

Real-World Example: AlphaGeometry (by Google DeepMind) recently solved complex International Mathematical Olympiad (IMO) geometry problems at a gold-medal level, proving it can perform high-level deduction that previously required intense human intuition.

2. Integration with Scientific Discovery Platforms

Mathematical reasoning is the “logical spine” of science, ensuring simulations respect fundamental laws. Integrating theorem provers into the discovery loop allows AI to confirm a new material’s stability mathematically.

Real-World Example: Researchers at the California Institute of Technology (Caltech) use AI-integrated symbolic engines to verify the stability of Neural Landers, ensuring autonomous spacecraft land safely by proving their control mathematics are error-free.

3. Autonomous Reasoning Systems

These systems are becoming autonomous agents that handle the full R&D cycle from raw data observation to physics validation. They offer fast, verifiable solutions for engineering, cryptography, and structural optimization.

Real-World Example: The Liquid Tensor Experiment, completed in 2022 by the Lean community on top of Mathlib, formalized a central theorem from Peter Scholze’s condensed mathematics. It showed how a proof assistant can check arguments that are extremely hard to audit by hand, essentially acting as a tireless research partner.

Conclusion

A robust AI mathematical reasoning engine balances neural flexibility and symbolic precision. Prioritizing verifiable logic over pattern recognition enables systems to move beyond token prediction and truly understand underlying truths. As autonomous scientific tools advance, transparency and rigorous validation remain essential. Mastering this architecture unlocks “System 2” thinking in AI, transforming simple calculators into sophisticated partners for global innovation.

Why Choose IdeaUsher for AI Mathematical Reasoning Engine Development?

Creating an AI mathematical reasoning engine like Harmonic demands mastery of symbolic logic, formal verification systems, and neuro-symbolic architectures, not just next-token prediction.

We build AI-driven products across industries, specializing in performance systems, model integration, and scalable infrastructure. Our expertise helps us develop mathematical reasoning engines that balance step-by-step logical accuracy, computational efficiency, and rigorous proof validation.

Our ex-FAANG and MAANG engineers bring more than 500,000 hours of hands-on AI development experience, allowing us to architect reasoning platforms aligned with mathematical rigor, theorem-proving workflows, and research-grade performance benchmarks.

Why Hire Us:

- Neuro-Symbolic Expertise: We build hybrid AI systems combining neural networks and symbolic reasoning engines, deploy formal verification, and ensure mathematical consistency in complex, multi-step proofs.

- Custom Reasoning Architecture: We fine-tune models on mathematical corpora and build proprietary verification layers for platforms with strong logical coherence and an edge over standard LLM-based solutions.

- Full-Cycle Ownership: We manage infrastructure selection, integrate with formal proof assistants like Lean or Coq, and build scalable reasoning architectures so your mathematical engine is advanced and ready for launch.

Work with ex-MAANG developers to build next-gen apps. Schedule your consultation now.

FAQs

A.1. A neuro-symbolic approach constrains the neural network with a formal verification system to eliminate hallucinations. The engine checks every step against a symbolic solver like Lean or Coq to guarantee all generated proofs are mathematically sound.

A.2. Development uses structured LaTeX documents, formal proofs from repositories like the Archive of Formal Mathematics, and synthetic datasets. This diverse data diet teaches the model both human-like problem-solving intuition and formal logical structures.

A.3. Measure success with rigorous benchmarks like GSM8K for grade-school word problems and MATH for competitive-level challenges. Testing against formal proof sets ensures the engine handles complex undergraduate and graduate-level mathematical derivations without logic gaps.

A.4. The primary challenge is to combine the intuitive pattern recognition of neural networks with the rigid rules of symbolic logic. A robust interface lets the language model propose solutions, which a symbolic kernel then verifies for accuracy.