Research firms operate in environments where accuracy and evidence must guide every decision. Analysts must carefully review technical papers, industry reports, and internal datasets before forming conclusions. This process can become increasingly difficult as the volume of information continues to expand. Many research firms are now using domain-specific reasoning AI because it can understand specialized terminology and evaluate evidence carefully.

These systems may follow structured reasoning while comparing findings across many sources. Researchers can therefore interpret results more confidently and reduce the time spent on manual analysis. Over time, such systems could significantly improve how research firms manage large-scale knowledge analysis.

Over the years, we have developed several reasoning AI systems for research analysis and knowledge discovery, powered by knowledge graph engineering and semantic reasoning AI frameworks. Drawing on that experience, this blog walks through the steps for developing a domain-specific reasoning AI for research firms.

Why Are Research Firms Investing in Reasoning AI?

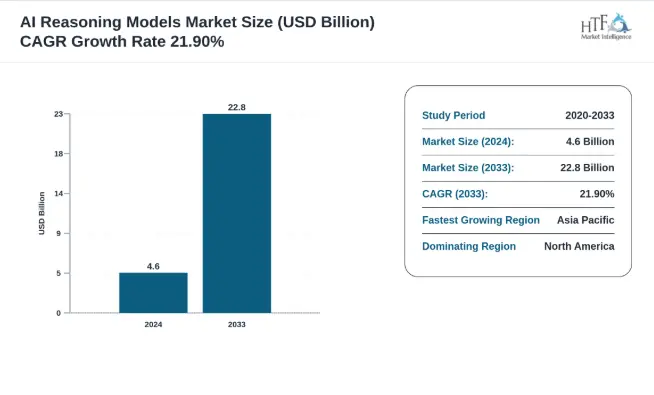

According to HTF Market Insights, the AI reasoning models market is growing rapidly, with a projected compound annual growth rate (CAGR) of 21.90% over the forecast period. Valued at $4.6 billion, the market is expected to reach $22.8 billion by 2033, with a year-on-year growth rate of 23.40%. This trajectory signals a move toward System 2 architectures capable of deliberate, multi-step logic.

Source: HTF market insights
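The quoted figures can be cross-checked with the standard compound-growth formula, CAGR = (end / start)^(1 / years) - 1. This short Python sketch solves for the forecast window that the stated rate implies; treating the values as USD billions is an assumption.

```python
import math

# Figures quoted above; the currency unit (USD billions) is an assumption.
START_VALUE = 4.6
END_VALUE = 22.8
QUOTED_CAGR = 0.219

def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end / start) ** (1 / years) - 1

def implied_years(start: float, end: float, rate: float) -> float:
    """Years of compounding at `rate` needed to grow `start` into `end`."""
    return math.log(end / start) / math.log(1 + rate)

years = implied_years(START_VALUE, END_VALUE, QUOTED_CAGR)
print(f"Implied forecast window: {years:.1f} years")
print(f"Required CAGR over exactly 8 years: {cagr(START_VALUE, END_VALUE, 8):.1%}")
```

The window works out to roughly eight years, which fits a 2025 base year if the 2033 end date holds. The summary quoted here does not state the base year, so treat that as an inference rather than a reported figure.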


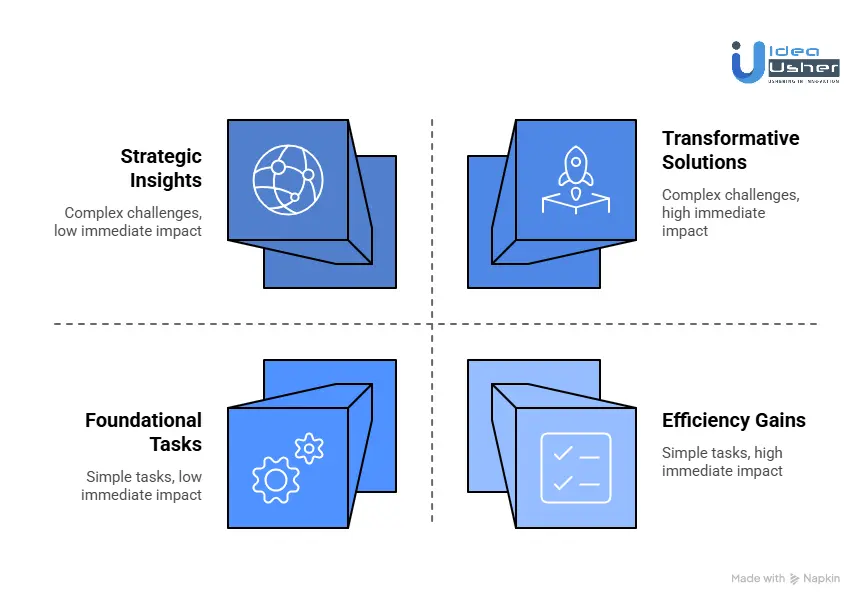

Research firms are pivoting toward reasoning AI to solve the synthesis bottleneck. While standard AI excels at data retrieval, it often lacks the logical validation needed for high-stakes decisions. Strategic leaders at PwC and McKinsey are shifting from pilots to AI Studios that prioritize automated, end-to-end workflows.

These firms are investing in models that use reinforcement learning to explore multiple reasoning paths before answering. This shift toward industrialized autonomy allows senior partners to focus on strategy while digital analysts handle foundational logic. High-performing organizations now allocate over 20% of their digital budgets to AI.

Limits of Generic LLMs in Professional Research

The initial era of generic LLMs revealed a hallucination floor that is unacceptable in professional settings. These models are probabilistic, predicting the next likely word rather than understanding causal chains. In legal or financial sectors, a statistically probable answer that lacks factual rigor is a major liability.

Generic models also struggle with long-horizon planning. They often lose the logical thread during complex tasks, leading to internal contradictions. Without an explicit reasoning layer, these models identify surface correlations but fail to provide the deep causal insights required for professional-grade market analysis.

Demand for Domain-Trained Reasoning Models

The market is shifting toward Domain-Specific Language Models that favor depth over breadth. Gartner suggests that over half of enterprise GenAI models will be domain-specific by 2028. Firms now demand models trained on specific data like SEC filings or clinical trials to ensure accuracy.

These models use Process Reward Models (PRMs) to grade intermediate reasoning steps rather than only the final answer. This approach is vital in scenarios where data is sparse but logical rules are clear. Firms like Amundi use these models to navigate market regimes, ensuring reports are logically sound within their specific industry framework.
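To make "grading intermediate steps" concrete, here is a minimal Python sketch of how step-level scores might be used to choose among candidate reasoning chains. The scorer is a hypothetical stand-in for a trained PRM, the example steps are invented, and min-aggregation (a chain is only as strong as its weakest step) is just one common choice; taking the product of step scores is another.

```python
from typing import Callable, Sequence

# A step scorer takes the steps so far plus the next step and returns P(step is correct).
# In a real system this would be a trained process reward model; here it is hypothetical.
StepScorer = Callable[[Sequence[str], str], float]

def chain_score(steps: Sequence[str], scorer: StepScorer) -> float:
    """Score a whole chain by its weakest step (min aggregation)."""
    scores = [scorer(steps[:i], step) for i, step in enumerate(steps)]
    return min(scores) if scores else 0.0

def best_chain(chains: Sequence[Sequence[str]], scorer: StepScorer) -> Sequence[str]:
    """Best-of-N selection: keep the candidate whose weakest step scores highest."""
    return max(chains, key=lambda chain: chain_score(chain, scorer))

def toy_scorer(previous: Sequence[str], step: str) -> float:
    """Illustration only: trusts steps that carry a source tag, distrusts bare assertions."""
    return 0.9 if "[src:" in step else 0.3

candidates = [
    ["Revenue grew 12% [src:10-K p.41]", "So margins must have expanded"],
    ["Revenue grew 12% [src:10-K p.41]", "Gross margin rose to 38% [src:10-K p.43]"],
]
print(best_chain(candidates, toy_scorer))
```

The first candidate ends in an unsupported leap, so its weakest step drags the whole chain down and the second, fully sourced chain is selected.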

Industries Driving Reasoning AI Adoption

Three sectors are acting as the primary engines for reasoning AI:

- Financial Services: Firms use reasoning AI for asset allocation. These models analyze historical precedents to quantify risk while providing an auditable chain of thought. Tools like OpenAI’s Deep Research are increasingly used for multi-source verification.

- Life Sciences: In R&D, reasoning AI narrows the search space for drug discovery. Systems like Google’s AI Co-Scientist generate and critique research hypotheses and proposals, helping scientists prioritize experiments and reduce time in the lab.

- Legal and Compliance: The industry is adopting adversarial audits, in which reasoning models simulate regulatory scrutiny to find contract inconsistencies. This shifts junior staff toward auditing the models’ logic, while agentic workflows execute routine tasks autonomously.

What Is a Domain-Specific Reasoning AI System?

A domain-specific reasoning AI system is a specialized architecture built for the logical and technical rigors of a particular field. Unlike general AI designed for broad conversation, these systems are engineered to master the causal relationships and regulatory constraints of industries like macroeconomics or clinical pharmacology.

Research firms utilize these systems to move beyond pattern recognition. By incorporating structured reasoning layers, models validate internal logic against industry-standard axioms. This ensures the output is not just linguistically coherent but structurally sound within a professional context.

Difference between reasoning AI and standard LLM tools

The distinction lies in System 1 versus System 2 processing. Standard LLM tools operate on System 1: fast, probabilistic pattern matching. They predict the likely next word based on vast datasets, which often leads to hallucinations when the model prioritizes fluency over factual accuracy.

Reasoning AI integrates System 2 capabilities for deliberate, multi-step computation. These models use Chain of Thought processing to explore multiple hypotheses before delivering an answer.

While a standard LLM provides the most probable answer, reasoning AI provides the most logical one through a verifiable sequence.
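One published way to approximate this "explore several hypotheses, then commit" behavior at inference time is self-consistency: sample several independent chains of thought and keep the answer most of them reach. The sketch assumes a hypothetical `sample` function that wraps a model call; it illustrates the idea rather than how any particular reasoning model works internally.

```python
from collections import Counter
from typing import Callable

# Hypothetical sampler: given a question and a seed, returns (reasoning_text, final_answer).
Sampler = Callable[[str, int], tuple[str, str]]

def self_consistent_answer(question: str, sample: Sampler, n: int = 5) -> tuple[str, float]:
    """Sample n chains of thought and return the majority final answer plus its vote share."""
    answers = [sample(question, seed)[1] for seed in range(n)]
    answer, votes = Counter(answers).most_common(1)[0]
    return answer, votes / n

def toy_sampler(question: str, seed: int) -> tuple[str, str]:
    """Illustration only: pretends five sampled chains reached these final answers."""
    finals = ["B", "A", "B", "B", "C"]
    return f"reasoning chain {seed}", finals[seed % len(finals)]

print(self_consistent_answer("Which bond carries more duration risk?", toy_sampler))
```

The vote share doubles as a rough confidence signal: low agreement across chains is a reasonable cue to route the question to an analyst.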

How Domain Context Improves Analytical Accuracy

Domain context acts as a logical filter, narrowing the probability space. In a generic model, “yield” could refer to agriculture or chemistry. In a domain-specific model for fixed-income research, it is immediately contextualized within bond mathematics and interest-rate environments.

Training on industry-specific datasets allows these models to internalize nuances that generic models miss. This specialized focus reduces “drift” and ensures the model adheres to specific evidentiary standards. The result is fewer errors, because the model learns to flag values that are mathematically or legally impossible.
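A simple way to picture this is a layer of domain rules applied to whatever the model extracts. The Python sketch below uses a hypothetical fixed-income record and three illustrative rules; a real rule set would come from the firm's own standards.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class BondTrade:
    """Hypothetical record a model might extract from a trade confirmation."""
    trade_date: date
    settlement_date: date
    maturity_date: date
    face_value: float

def violations(trade: BondTrade) -> list[str]:
    """Return the domain rules this record breaks; an empty list means it passed."""
    problems = []
    if trade.settlement_date < trade.trade_date:
        problems.append("settlement precedes trade date")
    if trade.maturity_date <= trade.settlement_date:
        problems.append("bond matures on or before settlement")
    if trade.face_value <= 0:
        problems.append("non-positive face value")
    return problems

extracted = BondTrade(date(2024, 3, 5), date(2024, 3, 1), date(2030, 3, 1), 1_000_000)
print(violations(extracted))  # ['settlement precedes trade date']
```

Rejected records can be sent back to the model with the violated rule attached, rather than silently passed into a report.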

Key Capabilities Research Firms Expect

Modern research firms demand high-level functionalities to justify the investment:

- Self-Correction: The ability for the model to identify logical fallacies and backtrack to a more accurate path.

- Multi-Source Synthesis: Capacity to cross-reference unstructured news with structured financial data to find hidden correlations.

- Adversarial Simulation: The model must “stress test” hypotheses by arguing against its own conclusions to provide a balanced risk view.

- Traceability: A transparent audit trail showing exactly which data points and logical steps led to a specific recommendation.
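Self-correction can be organized as a draft, critique, revise loop with a bounded number of rounds. In this sketch, `draft`, `critique`, and `revise` are hypothetical callables that would wrap model calls in practice; the toy versions only demonstrate the control flow. Keeping every intermediate answer in `history` also gives a basic form of the traceability described above.

```python
from typing import Callable, Optional

# Hypothetical callables; in practice each would wrap a model call.
Draft = Callable[[str], str]
Critique = Callable[[str, str], Optional[str]]   # returns None when no flaw is found
Revise = Callable[[str, str, str], str]

def answer_with_self_correction(question: str, draft: Draft, critique: Critique,
                                revise: Revise, max_rounds: int = 3) -> tuple[str, list[str]]:
    """Draft an answer, then critique and revise it until the critic finds no flaw."""
    answer = draft(question)
    history = [answer]
    for _ in range(max_rounds):
        flaw = critique(question, answer)
        if flaw is None:
            break
        answer = revise(question, answer, flaw)
        history.append(answer)
    return answer, history

# Toy stand-ins that catch one classic fixed-income reasoning error.
def toy_draft(q: str) -> str:
    return "Yields fell, so bond prices fell."

def toy_critique(q: str, a: str) -> Optional[str]:
    return "bond prices move inversely to yields" if "prices fell" in a else None

def toy_revise(q: str, a: str, flaw: str) -> str:
    return a.replace("prices fell", "prices rose")

final, trail = answer_with_self_correction("What happened to prices?", toy_draft, toy_critique, toy_revise)
print(final)  # Yields fell, so bond prices rose.
```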

Real-World Use Cases of Reasoning AI for Research Firms

Reasoning AI has moved from theoretical potential to practical application. Leading research firms are already using these systems to navigate complex data landscapes and deliver more accurate analysis to their clients.

1. Market Intelligence Automation

Firms use reasoning systems to identify significant market shifts by monitoring thousands of disparate sources. Unlike simple alerts, these AI agents categorize changes as trivial or significant based on strategic context.

- AlphaSense: This intelligence platform allows users to query millions of unstructured documents to find non-obvious trends and risks.

- Moglix: By deploying AI for vendor discovery and sourcing, Moglix achieved a 4x improvement in team efficiency, increasing quarterly business from INR 12 crore to INR 50 crore.

2. Investment Research Analysis

Institutional giants leverage reasoning AI to move beyond basic sentiment scores toward predictive modeling. These systems analyze nuances in corporate language that traditional tools miss.

- BlackRock: The firm uses specialized LLMs in its systematic investment process to forecast market reactions after earnings calls with higher accuracy than general-purpose models.

- JPMorgan: Its COIN platform automates the review of commercial loan agreements, work that previously consumed 360,000 hours of manual labor annually.

3. Scientific Literature Review

In R&D, reasoning AI accelerates the discovery of causal links in vast scientific databases. It simulates how different variables interact, cutting years off traditional experimental timelines.

- DeepMind: AlphaFold has predicted over 200 million protein structures, a feat that would have taken hundreds of millions of years of manual lab work.

- Moderna: The company used AI algorithms to predict the optimal synthetic sequences for mRNA development, contributing to the record speed of its vaccine rollout.

4. Policy and Regulatory Insights

Regulatory research requires AI capable of understanding legal hierarchies and identifying gaps across global jurisdictions. Reasoning agents ensure compliance by auditing policies against evolving laws.

- Deloitte: Its Regulatory Intelligence Suite uses reasoning AI to accelerate understanding of complex documents by 50% and automate the extraction of specific compliance requirements.

- Thomson Reuters: The firm offers AI tools that help lawyers and compliance professionals process and summarize legislative changes in real time.

5. Competitive Intelligence Generation

Firms use reasoning AI to build 360-degree views of rivals by connecting weak signals from job postings, patent filings, and supply chain shifts.

- Visualping: Uses AI to monitor changes on competitor websites and summarize the strategic impact of product launches or pricing adjustments.

- Klue: This platform enables enterprise clients to build “battlecards” for sales teams by synthesizing competitor data into actionable talking points.

Key Components of a Research Reasoning AI Architecture

Building an AI that reasons requires more than a large language model. It demands a specialized architecture where neural patterns meet symbolic logic. At IdeaUsher, we build these systems using a multi-layer approach so that every insight is grounded in fact and protected by enterprise-grade security.

1. Domain-trained Models

We begin with high-capacity models like Gemini 1.5 Pro or OpenAI o1. Our ex-MAANG engineers apply supervised fine-tuning and Process Reward Modeling to align these models with your research standards. This ensures the AI understands technical jargon and the nuances of professional evidence.

2. Knowledge Graph Layers

A reasoning AI cannot rely on text alone. We integrate knowledge graphs to map relationships between entities like companies, patents, and regulatory bodies. This structured layer acts as a source of truth that allows the AI to perform multi-hop reasoning without losing its logical footing.
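Multi-hop reasoning over a knowledge graph amounts to following explicit edges from one entity to another. The in-memory Python sketch below uses invented entities and breadth-first search; a production system would more likely run an equivalent path query inside a graph database.

```python
from collections import deque
from typing import Optional

Triple = tuple[str, str, str]

# Toy graph: every entity and relation here is hypothetical.
TRIPLES: list[Triple] = [
    ("Regulation R", "restricts", "Mineral M"),
    ("Mineral M", "input_to", "Supplier S"),
    ("Supplier S", "supplies", "Manufacturer X"),
    ("Manufacturer X", "competes_with", "Manufacturer Y"),
]

def build_adjacency(triples: list[Triple]) -> dict[str, list[tuple[str, str]]]:
    """Index outgoing (relation, object) edges by subject."""
    graph: dict[str, list[tuple[str, str]]] = {}
    for subj, rel, obj in triples:
        graph.setdefault(subj, []).append((rel, obj))
    return graph

def find_path(graph: dict[str, list[tuple[str, str]]], start: str, goal: str,
              max_hops: int = 4) -> Optional[list[Triple]]:
    """Breadth-first search for the shortest chain of hops from start to goal.

    The returned triples double as provenance: each hop is an explicit, checkable fact."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        if len(path) >= max_hops:
            continue
        for rel, nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [(node, rel, nxt)]))
    return None

graph = build_adjacency(TRIPLES)
for hop in find_path(graph, "Regulation R", "Manufacturer X") or []:
    print(hop)
```

The `max_hops` cap keeps the search from wandering into weak, many-step associations, which is one simple way to keep multi-hop answers on solid footing.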

3. Retrieval-Augmented Pipelines

Standard RAG finds documents while our reasoning pipelines evaluate them. We build agentic workflows that plan search strategies, identify contradictions across sources, and iteratively refine findings. This results in an AI that actively investigates your research questions rather than just answering them.
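As one illustration of identifying contradictions across sources, the sketch below groups extracted claims by entity and attribute and flags any group where sources disagree. It assumes claim extraction and value normalization have already happened upstream, which is the hard part in practice; the company and documents are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Claim:
    entity: str
    attribute: str
    value: str     # assumed already normalized, e.g. "$1.2B"
    source: str    # ID of the document the claim was extracted from

def find_contradictions(claims: list[Claim]) -> dict[tuple[str, str], dict[str, list[str]]]:
    """Group claims by (entity, attribute); report any group where sources disagree on the value."""
    grouped: dict = defaultdict(lambda: defaultdict(set))
    for c in claims:
        grouped[(c.entity, c.attribute)][c.value].add(c.source)
    return {
        key: {value: sorted(sources) for value, sources in values.items()}
        for key, values in grouped.items()
        if len(values) > 1
    }

claims = [
    Claim("Acme Corp", "FY2023 revenue", "$1.2B", "annual_report.pdf"),
    Claim("Acme Corp", "FY2023 revenue", "$1.2B", "earnings_call.txt"),
    Claim("Acme Corp", "FY2023 revenue", "$1.4B", "blog_post_17.html"),
    Claim("Acme Corp", "CEO", "J. Doe", "annual_report.pdf"),
]
for key, positions in find_contradictions(claims).items():
    print(key, positions)
```

Showing which sources back each value lets the pipeline, or an analyst, weigh a primary filing against a secondary blog post instead of averaging them.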

4. Fact Validation Engines

In research, a claim is only as good as its source. We engineer automated verification layers that link every conclusion back to specific paragraphs or tables. This creates a transparent audit trail that allows analysts to instantly verify the raw data behind any AI-generated insight.
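A basic verification layer can at least confirm that each cited passage really contains the quoted text. This Python sketch assumes a hypothetical in-memory corpus keyed by document ID, with zero-based paragraph indices; it checks verbatim matches (ignoring case and whitespace) only, not whether the quote actually supports the conclusion.

```python
import re
from dataclasses import dataclass

@dataclass
class Citation:
    doc_id: str
    paragraph: int   # zero-based paragraph index within the document
    quote: str       # verbatim span the conclusion relies on

def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so formatting differences do not cause false failures."""
    return re.sub(r"\s+", " ", text).strip().lower()

def verify_citations(citations: list[Citation],
                     corpus: dict[str, list[str]]) -> list[tuple[Citation, bool]]:
    """Mark each citation True only if its quote appears in the cited paragraph."""
    results = []
    for cit in citations:
        paragraphs = corpus.get(cit.doc_id, [])
        ok = (0 <= cit.paragraph < len(paragraphs)
              and normalize(cit.quote) in normalize(paragraphs[cit.paragraph]))
        results.append((cit, ok))
    return results

corpus = {"10-K-2023": ["Overview of operations.", "Net revenue increased 12% to $4.1 billion."]}
checks = verify_citations(
    [Citation("10-K-2023", 1, "net revenue  increased 12%"),   # correct paragraph
     Citation("10-K-2023", 0, "revenue increased 12%"),        # wrong paragraph
     Citation("missing-doc", 0, "anything")],                  # unknown document
    corpus,
)
print([ok for _, ok in checks])
```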

5. Secure Data Connectors

Security is the foundation of our architecture. We develop custom connectors that securely bridge your AI to internal silos such as SQL databases and document lakes. These connectors utilize bank-grade encryption and granular access controls to ensure your sensitive IP remains protected at all times.
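Access control is easiest to reason about when documents are filtered before they reach the retrieval index or the model. The sketch below assumes a simple clearance-plus-group model with invented labels; in a real deployment these entitlements would come from the firm's identity provider and source systems, and encryption would be handled separately.

```python
from dataclasses import dataclass, field

# Hypothetical clearance ladder; unknown labels are treated as inaccessible.
LEVELS = {"public": 0, "internal": 1, "restricted": 2}

@dataclass(frozen=True)
class Document:
    doc_id: str
    classification: str
    allowed_groups: frozenset = frozenset()   # empty means no group restriction

@dataclass
class User:
    name: str
    groups: set = field(default_factory=set)
    clearance: str = "internal"

def can_read(user: User, doc: Document) -> bool:
    """Deny by default: require sufficient clearance and, for group-scoped docs, membership."""
    if LEVELS.get(user.clearance, -1) < LEVELS.get(doc.classification, 99):
        return False
    return not doc.allowed_groups or bool(user.groups & doc.allowed_groups)

def filter_for_user(user: User, docs: list[Document]) -> list[Document]:
    """Apply access control before any document is indexed or shown to the model."""
    return [d for d in docs if can_read(user, d)]

docs = [
    Document("market-brief", "public"),
    Document("deal-memo", "restricted", frozenset({"m&a"})),
    Document("ops-wiki", "internal"),
]
analyst = User("analyst", {"equity"}, "internal")
partner = User("partner", {"m&a"}, "restricted")
print([d.doc_id for d in filter_for_user(analyst, docs)])
```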

How to Build a Domain-Specific Reasoning AI for Research Firms

Building a professional-grade reasoning AI system requires a rigorous, multi-layer approach that moves far beyond basic prompt engineering. Our methodology ensures that every logical leap the AI takes is grounded in your firm’s unique expertise and evidentiary standards.

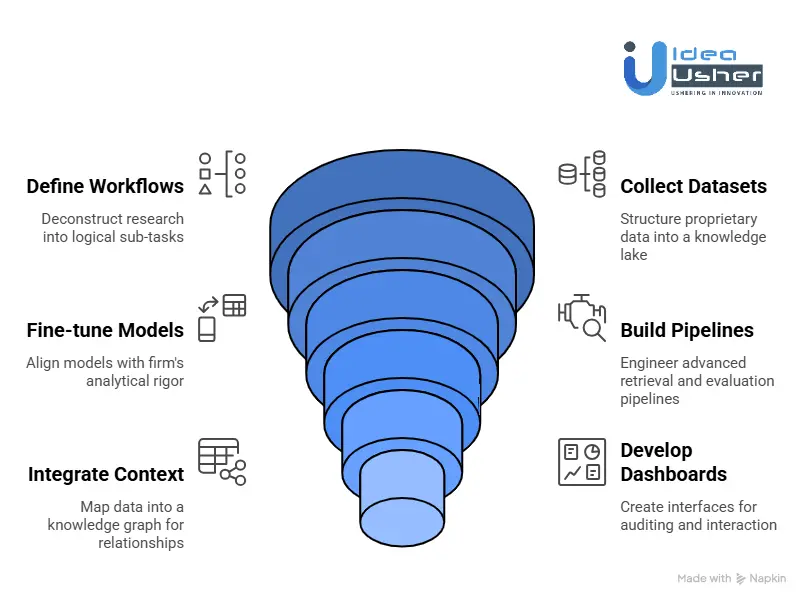

1. Define Workflows

We begin by deconstructing your existing research methodologies into granular, logical sub-tasks. By mapping the exact decision trees your experts follow, we identify where reasoning AI can most effectively bridge the gap between simple data retrieval and complex strategic synthesis.
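Once a methodology is broken into sub-tasks, the dependencies between them form a directed acyclic graph that determines what can run in parallel. This sketch uses Python's standard-library `graphlib` with a hypothetical decomposition of one research question.

```python
from graphlib import TopologicalSorter

# Hypothetical decomposition: each sub-task maps to the sub-tasks it depends on.
workflow = {
    "collect_filings": set(),
    "collect_news": set(),
    "extract_financials": {"collect_filings"},
    "extract_events": {"collect_news"},
    "link_events_to_financials": {"extract_financials", "extract_events"},
    "draft_thesis": {"link_events_to_financials"},
    "analyst_review": {"draft_thesis"},
}

def plan_stages(workflow: dict[str, set[str]]) -> list[list[str]]:
    """Group sub-tasks into stages; every task in a stage has all its dependencies finished."""
    sorter = TopologicalSorter(workflow)
    sorter.prepare()            # raises graphlib.CycleError on circular dependencies
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        stages.append(ready)
        sorter.done(*ready)     # in a live system, call done() as each task completes
    return stages

for number, stage in enumerate(plan_stages(workflow), start=1):
    print(f"Stage {number}: {stage}")
```

Making the human review an explicit final node keeps the analyst in the loop by construction rather than by convention.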

2. Collect Datasets

We curate and structure your proprietary data, from internal memos to specialized industry journals, into a high-fidelity “knowledge lake.” This process ensures the AI internalizes the specific vocabulary, taxonomies, and causal relationships unique to your business sector.
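Structuring documents for retrieval usually includes splitting them into overlapping chunks tagged with their source. The sketch below splits on words for simplicity; production pipelines often chunk by tokens or by document structure (sections, tables), and the sizes shown are placeholders.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into overlapping word windows so facts near a boundary appear in two chunks."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break   # the last window already reaches the end of the text
    return chunks

def build_records(doc_id: str, text: str, **kwargs) -> list[dict]:
    """Attach source metadata to each chunk so later answers can cite it."""
    return [{"doc_id": doc_id, "chunk": i, "text": c}
            for i, c in enumerate(chunk_words(text, **kwargs))]

sample = " ".join(f"w{i}" for i in range(10))
for record in build_records("memo-001", sample, chunk_size=4, overlap=1):
    print(record)
```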

3. Fine-tune Models

Our team selects the most robust base models and applies supervised fine-tuning to align their internal “chain of thought” with your firm’s analytical rigor. This step moves the model from general-purpose fluency to specialized, high-accuracy reasoning.
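Supervised fine-tuning data is often stored as JSONL, one chat-style example per line, with the assistant turn showing the reasoning format the firm wants the model to reproduce. The `messages` layout below is a common convention rather than a universal standard, so check the schema your fine-tuning provider or framework expects; the question, steps, and file name are hypothetical.

```python
import io
import json

def to_sft_record(question: str, steps: list[str], answer: str, sources: list[str]) -> dict:
    """Build one chat-style training example whose target shows numbered, cited reasoning."""
    target = "\n".join(f"Step {i}: {s}" for i, s in enumerate(steps, start=1))
    target += f"\nAnswer: {answer}\nSources: {', '.join(sources)}"
    return {
        "messages": [
            {"role": "system", "content": "You are a research analyst. Reason step by step and cite sources."},
            {"role": "user", "content": question},
            {"role": "assistant", "content": target},
        ]
    }

def write_jsonl(records: list[dict], fp) -> None:
    """Write one JSON object per line, the usual JSONL layout for fine-tuning data."""
    for record in records:
        fp.write(json.dumps(record, ensure_ascii=False) + "\n")

record = to_sft_record(
    "Did Fund A's duration risk rise in Q3?",
    ["Effective duration rose from 5.1 to 6.4 years [holdings_q3.csv].",
     "Longer duration means greater price sensitivity to rate changes."],
    "Yes, duration risk increased.",
    ["holdings_q3.csv"],
)
buffer = io.StringIO()          # stands in for open("train.jsonl", "w", encoding="utf-8")
write_jsonl([record], buffer)
print(buffer.getvalue())
```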

4. Build Pipelines

We engineer advanced retrieval pipelines that do not just “fetch” information but evaluate it for contradictions and relevance. These pipelines allow the system to plan its search strategy iteratively, cross-referencing multiple sources to build a solid evidentiary foundation.

5. Integrate Context

By mapping your data into a knowledge graph, we provide the AI with a “ground truth” of relationships and hierarchies. This adds a layer of symbolic logic that lets the system identify non-obvious links, such as how a specific regulatory shift might affect a supply chain.

6. Develop Dashboards

We design collaborative interfaces that visualize the AI’s logical path, allowing your team to audit the “chain of thought” behind every conclusion. These dashboards include interactive source-linking and “what-if” toggles, ensuring the human remains the final arbiter of strategy.

7. Test Accuracy

Before deployment, we subject the system to rigorous adversarial “red teaming” and Process Reward Modeling to verify step-by-step logic. This exhaustive testing phase mitigates hallucinations and ensures the system operates within strict ethical and regulatory boundaries.
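Adversarial testing works best alongside a fixed regression set that is re-run after every model or prompt change. This minimal Python harness, with invented cases, reports exact-match accuracy and citation precision (the share of cited sources that appear in the gold set); real evaluations usually add rubric-based or step-level grading.

```python
from dataclasses import dataclass

@dataclass
class EvalCase:
    question: str
    gold_answer: str
    gold_sources: set[str]

@dataclass
class ModelOutput:
    answer: str
    cited_sources: set[str]

def evaluate(cases: list[EvalCase], outputs: list[ModelOutput]) -> dict[str, float]:
    """Exact-match accuracy plus citation precision across a regression set."""
    if len(cases) != len(outputs) or not cases:
        raise ValueError("need one output per case and at least one case")
    correct = cited = supported = 0
    for case, out in zip(cases, outputs):
        correct += out.answer.strip().lower() == case.gold_answer.strip().lower()
        cited += len(out.cited_sources)
        supported += len(out.cited_sources & case.gold_sources)
    return {
        "accuracy": correct / len(cases),
        "citation_precision": supported / cited if cited else 0.0,
    }

cases = [EvalCase("Q1", "Yes", {"10-K"}), EvalCase("Q2", "Rates rose", {"fomc_minutes"})]
outputs = [ModelOutput("yes", {"10-K", "blog"}), ModelOutput("Rates fell", {"fomc_minutes"})]
print(evaluate(cases, outputs))
```

Tracking citation precision separately from accuracy matters: a model can reach the right answer while leaning on an unreliable source, which is exactly the failure a research firm cannot afford.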

Cost to Develop a Domain-Specific Reasoning AI for Research Firms

Developing a reasoning-centric AI is an investment in cognitive infrastructure. While basic wrappers are inexpensive, a true domain-specific system requires a capital commitment reflecting its complexity. For research firms, the goal is a System 2 engine that prioritizes logical precision over conversational flair.

Model Development and Fine-Tuning Costs

The system’s intelligence relies on balancing high-end foundation models with specialized fine-tuning. Most firms refine a powerful base model, such as OpenAI o1 or Gemini Pro, using proprietary datasets to mirror their internal analytical rigor.

- Initial Fine-Tuning: $150,000 to $350,000 for engineering talent and computing power.

- Specialized Training (LoRA): $12,000 to $50,000 per iteration for efficient model updates.

- Validation: $50,000 to $100,000 for analysts to grade intermediate reasoning steps.

Data Engineering and Infrastructure Expenses

Reasoning AI is only as effective as its grounding evidence. Building the pipelines to feed the model is labor-intensive, often consuming 40% to 60% of the total development budget.

Infrastructure Breakdown:

- Vector Databases: $15,000 to $80,000 annually for high-performance retrieval.

- Data Engineering: $75,000 to $300,000 to process unstructured reports into AI-ready formats.

- Compute (Inference): $5,000 to $50,000 monthly based on research volume.

Integration and Enterprise Deployment Costs

To be effective, the AI must integrate with existing CRM and document management systems. This last mile of deployment ensures the tool is embedded in the analyst’s daily workflow.

- System Integration: $30,000 to $150,000, depending on the age of your tech stack.

- Governance: $25,000 to $100,000 for explainability layers and bias testing.

- Custom Dashboards: $10,000 to $60,000 for interfaces that visualize the AI’s logic.
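Summing the component ranges above gives a rough first-year envelope. The sketch assumes every line item is needed, one LoRA iteration, and twelve months of inference; it adds low ends and high ends separately, so it brackets the budget rather than estimating it.

```python
# Ranges quoted in the cost breakdown above, in USD as (low, high).
ONE_TIME = {
    "initial_fine_tuning": (150_000, 350_000),
    "lora_iteration": (12_000, 50_000),        # assumption: one iteration in year one
    "validation": (50_000, 100_000),
    "data_engineering": (75_000, 300_000),
    "system_integration": (30_000, 150_000),
    "governance": (25_000, 100_000),
    "custom_dashboards": (10_000, 60_000),
}
ANNUAL = {"vector_database": (15_000, 80_000)}
MONTHLY = {"inference_compute": (5_000, 50_000)}

def first_year_range(months: int = 12) -> tuple[int, int]:
    """One-time items, plus one year of annual items, plus `months` of monthly items."""
    def total(i: int) -> int:   # i = 0 for low ends, 1 for high ends
        return (sum(r[i] for r in ONE_TIME.values())
                + sum(r[i] for r in ANNUAL.values())
                + months * sum(r[i] for r in MONTHLY.values()))
    return total(0), total(1)

low, high = first_year_range()
print(f"First-year envelope: ${low:,} to ${high:,}")
```

Under those assumptions the envelope is $427,000 to $1,790,000, which broadly overlaps the Enterprise Platform band in the table that follows; smaller scopes would drop or shrink many of these items.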

Estimated Development Timeline and Budget

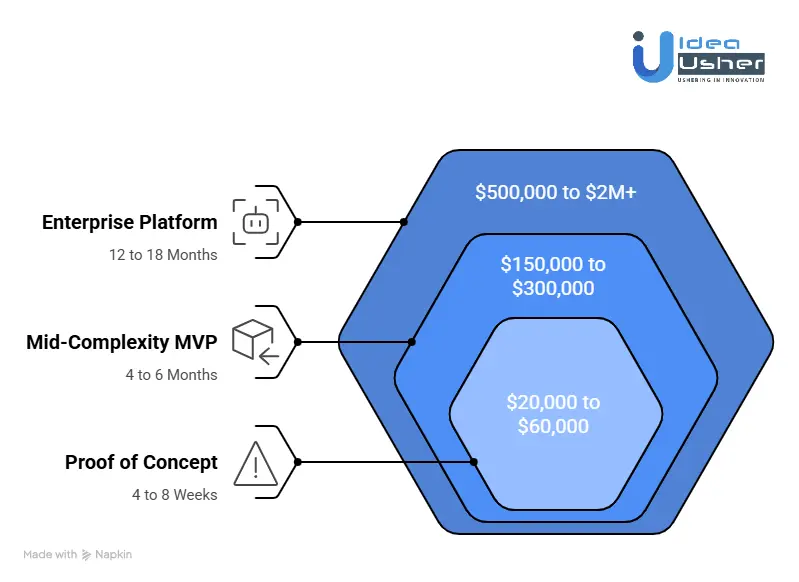

Total investment scales with the depth of reasoning required. The following table outlines what firms should expect at each stage, from an initial proof of concept to a production-ready platform.

| Project Scope | Estimated Budget | Timeline | Key Deliverable |
| --- | --- | --- | --- |
| Proof of Concept | $20,000 to $60,000 | 4 to 8 Weeks | Limited feasibility test |
| Mid-Complexity MVP | $150,000 to $300,000 | 4 to 6 Months | Functional reasoning for core cases |
| Enterprise Platform | $500,000 to $2M+ | 12 to 18 Months | Full multi-agent research engine |

Choosing the Right AI Models for Domain-Specific Reasoning AI

Choosing the right model architecture is the difference between an AI that “chats” and an AI that “conducts research.” For a reasoning system to be effective, it must integrate diverse models that handle distinct cognitive tasks.

Analytical Reasoning

For high-level synthesis and multi-step deduction, you need models designed for Chain of Thought processing. These serve as the central executive of your research system.

- Top Tier Choices: DeepSeek-R1 and Gemini 1.5 Pro. These models are benchmarks for complex logic and long-context understanding.

- The Specialist: GLM-5 or MiniMax-M2.5. These are powerful alternatives that excel in agentic workloads and complex systems engineering.

- The Reasoning Layer: We implement Process Reward Models (PRM) to grade internal steps. This ensures the AI proves its work rather than just jumping to a conclusion.

Document Search

A reasoning AI is only as smart as the data it can find. Traditional keyword search is insufficient; you need Retrieval-Augmented Generation (RAG) powered by dense vector embeddings.

| Model Category | Recommended Engine | Why It Matters |
| --- | --- | --- |
| Embedding Model | Qwen3-Embedding | Captures the semantic meaning of research papers. |
| Vector Database | Pinecone or Milvus | Scales your library to millions of documents with low latency. |
| Reranker | BGE-Reranker | Re-scores initial search results so that only the most relevant passages reach the LLM. |
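The two-stage pattern in this table (fast vector search, then a slower and more precise reranker) can be sketched in plain Python. The three-dimensional vectors are toy stand-ins for real embeddings, and `toy_score` is a hypothetical word-overlap substitute for a cross-encoder reranker; in production, the vector database and reranker listed above would perform these two stages.

```python
import math
import re
from typing import Callable

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve(query_vec: list[float], index: dict[str, list[float]], k: int = 3) -> list[str]:
    """Stage 1: rank every chunk by cosine similarity and keep the top k IDs."""
    ranked = sorted(index, key=lambda doc_id: cosine(query_vec, index[doc_id]), reverse=True)
    return ranked[:k]

def rerank(query: str, candidates: list[str], texts: dict[str, str],
           score_fn: Callable[[str, str], float], keep: int = 1) -> list[str]:
    """Stage 2: re-score the short list with a more precise (and slower) scorer."""
    return sorted(candidates, key=lambda d: score_fn(query, texts[d]), reverse=True)[:keep]

def toy_score(query: str, text: str) -> float:
    """Hypothetical reranker stand-in: counts words shared by query and passage."""
    def words(s: str) -> set[str]:
        return set(re.findall(r"\w+", s.lower()))
    return len(words(query) & words(text))

index = {"p1": [0.9, 0.1, 0.0], "p2": [0.8, 0.3, 0.1], "p3": [0.0, 0.2, 0.9]}
texts = {
    "p1": "Bond yields rose after the rate decision.",
    "p2": "The central bank rate decision lifted bond yields and duration risk.",
    "p3": "Crop yields fell in the drought.",
}
query = "bond duration risk after rate decision"
shortlist = retrieve([1.0, 0.2, 0.0], index, k=2)
print(shortlist, rerank(query, shortlist, texts, toy_score))
```

In this toy run, vector search ranks p1 first, but the reranker promotes p2, which also mentions duration risk; p3, about crop yields, never makes the shortlist.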

Knowledge Reasoning

To find non-obvious correlations, you need a Knowledge Graph. This allows the AI to traverse complex networks of information invisible to standard text search.

The Hybrid Approach:

We build GraphRAG systems. While an LLM reads the text, a graph database such as Neo4j stores the entities and relationships extracted from it. This structured context reduces hallucinations and provides clear provenance for every insight generated.

Domain Fine-Tuning Strategies

Base models are generalists. To make them specialists, we use targeted fine-tuning strategies that do not require re-training from scratch.

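- PEFT (parameter-efficient fine-tuning): We use LoRA (low-rank adaptation) to adapt the model to industry-specific vocabulary. Because LoRA trains small adapter matrices while the base weights stay frozen, it is fast and preserves the model’s core capabilities.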

- Supervised Fine-Tuning: Our ex-MAANG engineers train the model on examples of your house style and specific research methodologies.

- Context Engineering: We prioritize long-context windows of 1M tokens or more. This allows the AI to read large document collections in a single session, keeping context fresh without model drift.
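LoRA freezes a pretrained weight matrix W0 and learns a low-rank update, so the adapted weight is W0 + (α/r)·BA, where B is d_out × r, A is r × d_in, and the rank r is small. A quick Python calculation, using a hypothetical 4096 × 4096 projection and rank 8, shows why this is cheap to train.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA learns B (d_out x r) and A (r x d_in): r * (d_out + d_in) parameters in total."""
    return rank * (d_out + d_in)

full = 4096 * 4096                                  # parameters in the frozen matrix W0
lora = lora_trainable_params(4096, 4096, rank=8)    # parameters actually trained
print(full, lora, f"{lora / full:.2%}")
```

Only about 0.4% of that matrix's parameters are trained, which is why LoRA iterations are comparatively inexpensive and easy to repeat as vocabulary or methodology changes.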

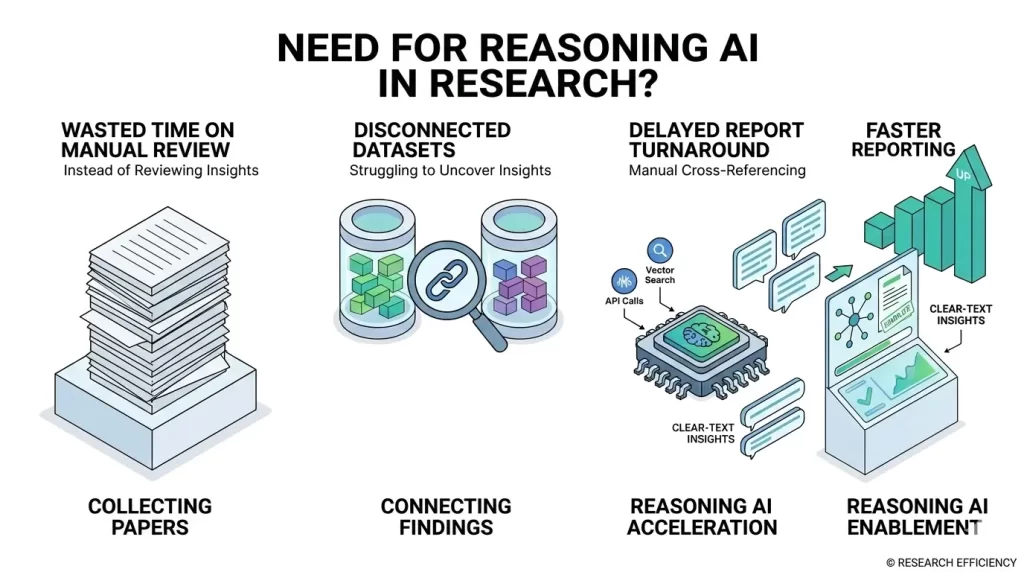

Signs Your Research Firm Needs a Reasoning AI System

The shift toward AI-augmented intelligence is often signaled by operational friction. When senior analysts spend more time processing information than on strategic synthesis, the cost of inaction rises. Identifying these bottleneck patterns is essential to maintaining a competitive edge.

Manual Paper Review

If your team is drowning in a sea of white papers, you face an indexing problem that manual labor cannot solve. When professionals spend their day identifying relevant passages rather than interpreting them, the firm loses intellectual equity.

- The Symptom: Analysts report information fatigue and struggle to keep up with the daily volume of scientific or financial publications.

- The Reasoning Gap: Standard tools find keywords; they do not find arguments. A reasoning system can read 1,000 papers and surface only those that use specific methodologies or raise contradictory hypotheses.

- The Impact: Reduced billable hours and higher turnover as talent burns out on repetitive data extraction.

Synthesis Difficulties

Synthesis is the ability to connect a subtle regulatory shift to a potential supply chain disruption. If your reports are factual but lack connective tissue, your data is likely siloed.

Internal Audit Checklist:

- Do reports miss correlations hidden across multiple documents?

- Is there a lack of consistency in how different analysts interpret the same dataset?

- Do you rely on manual Excel cross-referencing to link text with quantitative data?

A yes to any of these questions suggests your firm requires a system capable of multi-hop reasoning: the ability to query one source, use that result to search another, and synthesize a conclusion.

Slow Report Turnaround

In professional research, the value of an insight decays the longer it takes to produce. If your time to insight is measured in weeks, you are likely losing opportunities to more agile competitors.

Workflow Comparison:

| Stage | Traditional Manual Process | Reasoning AI Workflow |
| --- | --- | --- |
| Data Dragnet | 10 to 15 hours of manual reading | 30 seconds of automated ingestion |
| Logic Verification | Manual cross-referencing for errors | Real-time chain of thought audit |
| Drafting | 5 to 8 hours of writing | 10 minutes for a polished draft |
| Final Review | 3 hours of partner oversight | 45 minutes of strategic refinement |

Slow turnaround is rarely a talent issue; it is a structural one. When drafting becomes a hurdle, it is a sign you need an agentic system to handle the foundational 80% of report creation.

How Can Research Firms Monetize Reasoning AI?

Implementing a reasoning AI system transforms your firm from a labor-intensive service provider into a high-margin technology powerhouse. By decoupling revenue from human billable hours, you can scale insights far beyond what headcount alone allows.

Recent data shows that 80% of enterprise buyers are satisfied with their AI investments, and over 60% are willing to pay a premium for enhanced AI capabilities.

1. Subscription Research Platforms

Moving to a SaaS model provides continuous value. Instead of one-off projects, you offer an “always-on” portal where users query your knowledge base.

- The JPMorgan Case: JPMorgan’s COIN system handles document reviews that previously took 360,000 staff hours annually. By offering similar specialized tools via subscription, firms can capture a portion of the $52 billion AI agents market.

- Tiered Access: Offer basic summaries at a lower entry point while reserving multi-hop synthesis for premium tiers.

- The ROI: Early adopters of reasoning agents report up to 55% higher operational efficiency and 35% lower costs.

2. AI-Generated Premium Reports

High-fidelity automated reporting allows you to increase output by 5x to 10x without hiring more staff. These can be sold as premium digital products or value-added extras.

- Efficiency Example: CarMax used generative AI to summarize 100,000 customer reviews into 5,000 highlights, a task that would have taken 11 years manually but was completed in months.

- The Multiplier Effect: IDC research shows that every dollar spent on AI solutions generates an average of $4.90 in value for the adopter.

Companies using AI to improve sourcing-team efficiency have also seen revenue jump: Moglix, for example, grew its quarterly business from INR 12 crore to INR 50 crore after deployment.

3. API Access for Enterprise Clients

For large clients, the most valuable product is the data itself. Offering API access allows enterprise clients to plug your specialized intelligence directly into their internal dashboards.

- Market Traction: The AI software market is currently valued at over $134 billion, driven largely by companies packaging AI capabilities as APIs.

- Usage-Based Pricing: Follow the model of industry leaders like OpenAI or Anthropic by charging per token or query.
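Usage-based pricing reduces to metering plus a price list. The Python sketch below charges per 1,000 input and output tokens plus a flat surcharge for premium multi-hop queries, mirroring the tiered-access idea above; every price is a hypothetical placeholder, and `Decimal` is used so invoice totals round predictably to the cent.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical price list: placeholders only, not recommendations.
PRICE_PER_1K_INPUT_TOKENS = Decimal("0.004")
PRICE_PER_1K_OUTPUT_TOKENS = Decimal("0.012")
PRICE_PER_MULTI_HOP_QUERY = Decimal("0.50")   # premium-tier surcharge

def invoice_line(input_tokens: int, output_tokens: int, multi_hop_queries: int = 0) -> Decimal:
    """Metered charge for one billing period, rounded half-up to the cent."""
    cost = (Decimal(input_tokens) / 1000 * PRICE_PER_1K_INPUT_TOKENS
            + Decimal(output_tokens) / 1000 * PRICE_PER_1K_OUTPUT_TOKENS
            + multi_hop_queries * PRICE_PER_MULTI_HOP_QUERY)
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)

print(invoice_line(2_500_000, 400_000, multi_hop_queries=120))  # 74.80
```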

High-value moats are created when you license access to proprietary datasets combined with reasoning logic, much like Bloomberg’s proprietary data APIs.

Why Do Businesses Choose IdeaUsher for Research AI?

Choosing a partner for reasoning AI requires a team that understands the difference between a simple chatbot and a cognitive engine. At IdeaUsher, we build systems that do not just process words but execute logic.

With over 500,000 hours of coding experience, our team of ex-MAANG/FAANG developers brings a high-performance culture to every project, ensuring your AI is as rigorous as your senior analysts.

Enterprise Expertise

Our background in building large-scale products means we understand the complexities of professional workflows.

We do not just provide a tool; we deliver a product built on half a million hours of technical mastery. This depth allows our engineers to navigate the architectural challenges that often cause generic AI projects to fail.

Custom Model Training

We move beyond off-the-shelf solutions by performing deep fine-tuning and alignment.

Our team ensures your AI internalizes specific industry logic and vocabulary. Whether in finance, law, or medicine, we train models to mirror the exact analytical standards and evidentiary requirements your clients expect.

Secure Architecture

Security is a core pillar of our development process. We design scalable architectures that prioritize data sovereignty and regulatory compliance. Through private cloud deployments and robust encryption, we ensure your proprietary data remains protected as the system scales to handle millions of data points.

End-to-End Development

IdeaUsher provides a seamless journey from initial concept to full enterprise deployment. We handle every stage:

- Discovery: Mapping your unique research logic.

- Engineering: Building retrieval and reasoning pipelines.

- Deployment: Integrating AI into your existing tech stack.

- Optimization: Continuous refinement to maintain accuracy.

This comprehensive approach allows your firm to transition to an AI-augmented model without the friction of managing multiple vendors.

Conclusion

Building a domain-specific reasoning AI is no longer about simple automation; it is about scaling your firm’s intellectual capacity. By moving beyond basic chatbots to engineered systems that prioritize logic, traceability, and proprietary context, research firms can transform months of manual labor into hours of strategic insight.

Looking to Build a Domain-Specific Reasoning AI for Research Firms?

IdeaUsher can help you build a domain-specific reasoning AI that carefully understands technical research data and specialized terminology. Our team can design systems that analyze research paper datasets and knowledge graphs to evaluate evidence with structured reasoning.

With over 500,000 hours of coding experience, our elite team of ex-MAANG/FAANG developers knows exactly how to move your firm from slow, manual data processing to high-velocity, auditable intelligence.

Why partner with us?

- Elite Engineering: Leverage the expertise of developers who have built world-class systems at Google, Meta, and Amazon.

- Total Data Sovereignty: We build private, secure architectures that ensure your proprietary research stays yours.

- Logical Rigor: Our systems prioritize “Chain of Thought” reasoning, providing verifiable citations for every insight.

- Proven Scalability: With 500k hours under our belt, we build for the future, ensuring your AI scales as your data grows.

Check out our latest projects to see the kind of work we can do for you. Let’s build the future of your research firm together.


FAQs

A1: Building a reasoning pipeline requires forcing the model to evaluate its logic step by step. Engineers implement frameworks that break complex queries into smaller, verifiable sub-questions, and a retrieval layer grounds each step in source documents to prevent drift. This approach helps ensure every output is logically sound and technically accurate.

A2: Models like Gemini 1.5 Pro and OpenAI o1 are leading choices because they support advanced chain-of-thought processing. They perform especially well when paired with custom reward models that penalize logical inconsistencies, and they handle multi-hop queries reliably, which suits high-stakes environments. Choosing the right base model is critical for achieving precise analytical depth.

A3: Professional systems use deductive and inductive reasoning to draw specific or general conclusions from data. They also apply abductive reasoning to form the most likely explanation for incomplete facts. Finally, analogical reasoning allows the AI to draw useful parallels between historical cases and new research. Mastering these four logical frameworks enables an AI to simulate true cognitive depth.

A4: Generative AI primarily focuses on predicting the next likely word to create fluent text quickly. Reasoning AI, by contrast, prioritizes systematic verification of facts and logical steps before producing a final answer. While standard generators may hallucinate, a reasoning system is designed to audit its own internal path. This shift from creativity to rigorous logic is what makes these systems suitable for research.