Universities collect enormous volumes of operational data every single day, from enrollment trends to compliance rules and housing allocations, yet decisions still move slowly because interpretation remains fragmented across departments. Traditional dashboards only display insights while staff must manually connect the dots.

Many universities have started using multi-agent AI systems for higher education ops because these systems can intelligently coordinate reasoning across workflows and continuously analyze signals across units. As hybrid learning models and digital enrollment shifts increased complexity, institutions needed AI that could actively collaborate rather than simply respond, enabling operational clarity at scale.

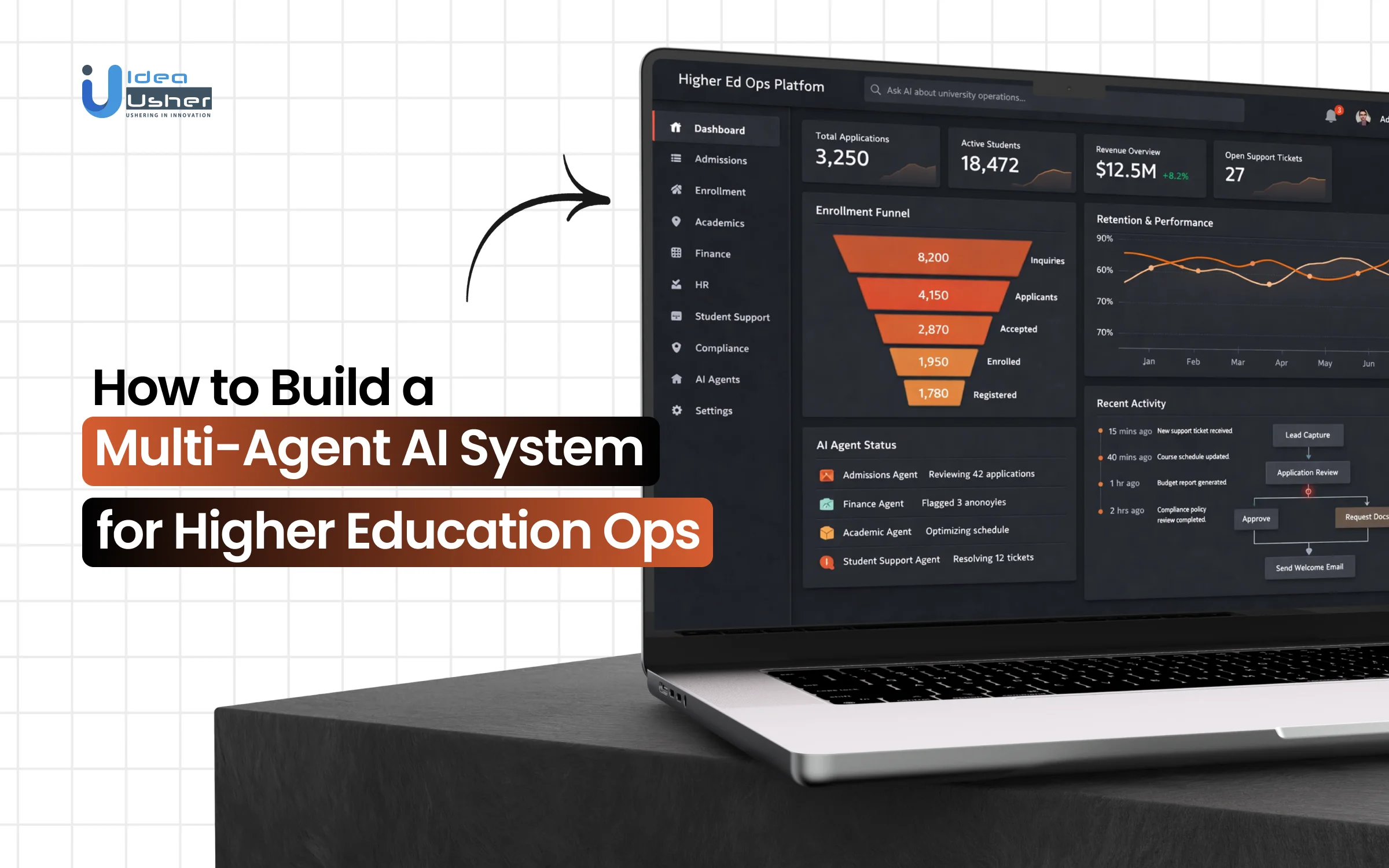

Over the years, we’ve developed multi-agent AI systems for numerous universities, powered by distributed agent architectures and retrieval-augmented decision intelligence frameworks. Drawing on that experience, this blog walks through the steps to develop a multi-agent AI system for higher education ops.

Key Market Takeaways for Multi-Agent AI Systems

According to Dimension Market Research, the global Multi Agent System market is projected to reach USD 6.3 billion in 2025 and scale to USD 184.8 billion by 2034, growing at a CAGR of 45.5 percent. This rapid expansion reflects a strong shift toward distributed AI architectures that allow multiple intelligent agents to coordinate decisions across complex environments such as higher education operations.

Source: Dimension Market Research

In universities, multi-agent AI systems are increasingly used to streamline enrollment, advising, scheduling, and campus resource allocation. Instead of relying on a single automation layer, institutions deploy specialized agents that collaborate in real time, analyze institutional data, trigger predictive interventions, and support personalized student journeys.

Many institutions report measurable gains, including up to 78 percent improvement in resource utilization and significant reductions in manual administrative workload.

Leading examples highlight this transition. Arizona State University has launched enterprise-level AI initiatives that integrate multi-agent systems across advising, faculty productivity, and enrollment workflows. Staffordshire University introduced Beacon, a digital assistant built on coordinated agent logic to manage student queries and key academic services.

Through collaboration among Cintana Education, Arizona State University, and Amazon Web Services, open-source agentic AI tools are now supporting recruitment, tutoring, and student engagement across dozens of universities worldwide.

How Does a Multi-Agent AI System for Higher Education Ops Work?

A multi-agent AI system for higher education ops works as a coordinated digital layer that can route requests to specialized agents across academic and administrative systems. Each agent securely retrieves real-time data and shares it back for synthesis into a single accurate response. The system can then automatically execute approved tasks, improving efficiency and reducing manual workload.

The Core Architecture

At its heart, a multi-agent AI system is built on a simple principle: division of labor. Instead of one AI trying to do everything, the system comprises multiple specialized agents, each an expert in a specific domain.

Imagine a student inquiry about exam schedules and outstanding fees. A single general-purpose assistant may struggle because the answer lives in two separate systems. A multi-agent system, however, deploys a coordinated team:

The Orchestrator Agent (The Conductor): This is the entry point for the user. When a student asks a question, the orchestrator’s job is to analyze the intent, triage the request, and route it to the correct specialist.

Specialized Subject Matter Expert (SME) Agents: These agents are the workers. They are connected to specific databases and knowledge bases. For example:

- An Admissions Agent handles queries about program requirements and application deadlines.

- A Finance Agent accesses billing systems to answer questions about tuition, fees, and financial aid.

- An Examinations Agent checks schedules, grade release dates, and exam policies.

- A CourseMate Agent helps students access course materials and simplifies access to academic resources.

How It Works: From Query to Resolution

The magic of a multi-agent system lies in its workflow. Here is a step-by-step look at how these systems process information, often leveraging a framework known as Retrieval-Augmented Generation (RAG).

Step 1: The Orchestration Layer

When a query hits the system, say, “What are the prerequisites for the AI course, and can I get an appointment with my mentor?”, the Orchestration Agent receives it. It breaks down the complex query into distinct tasks. It does not need to know the answer itself. It just needs to know who knows the answer.

Step 2: Retrieval and Specialization

The orchestrator routes the sub-queries to the relevant agents. The “Academic Support Agent” accesses the course catalog to pull prerequisite data. Simultaneously, a “Scheduling Agent” or “Mentor Support Agent” checks calendar availability.

The RAG Connection: Agents do not rely solely on their training data. They use RAG to pull real-time, accurate information from live vector databases connected to university systems, ensuring answers are current and sourced from official documents.

Step 3: Collaboration and Synthesis

The agents work in parallel. Once they have retrieved the necessary information, they send it back to the orchestrator. The orchestrator then synthesizes these disparate data points into a single, cohesive, conversational answer for the student.
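
Steps 1 to 3 can be sketched in a few lines of Python. This is a minimal illustration and not a production design: the agent functions, the keyword routing table, and the canned answers are all hypothetical stand-ins, and a real orchestrator would likely use an intent classifier and live data sources instead.

```python
import asyncio

# Hypothetical specialist agents. In a real deployment each would query a
# university system; here they return canned answers.
async def academic_support_agent(query: str) -> str:
    await asyncio.sleep(0)  # stand-in for a course catalog lookup
    return "Prerequisites for the AI course: Intro to Programming, Linear Algebra."

async def scheduling_agent(query: str) -> str:
    await asyncio.sleep(0)  # stand-in for a calendar lookup
    return "Your mentor has an open slot on Tuesday at 10:00."

# Assumption: simple keyword routing instead of a trained intent classifier.
ROUTES = {
    "prerequisite": academic_support_agent,
    "appointment": scheduling_agent,
}

async def orchestrate(query: str) -> str:
    lowered = query.lower()
    # Step 1: decide which specialists are needed.
    selected = [agent for keyword, agent in ROUTES.items() if keyword in lowered]
    if not selected:
        return "Sorry, I could not route this request; forwarding to a staff member."
    # Steps 2 and 3: run the specialists in parallel, then merge their answers.
    answers = await asyncio.gather(*(agent(query) for agent in selected))
    return " ".join(answers)

if __name__ == "__main__":
    question = ("What are the prerequisites for the AI course, "
                "and can I get an appointment with my mentor?")
    print(asyncio.run(orchestrate(question)))
```

The orchestrator never answers the question itself. It only selects agents, waits for them concurrently, and combines what they return.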

Step 4: Action and Execution

Advanced multi-agent systems go a step further. They perform actions. This moves AI from “information provision” to “task execution.”

For instance, if a student asks to “Set up a call with my mentor,” an agentic workflow does not just find a time. It accesses both the student’s and mentor’s calendars, schedules the appointment, and sends a confirmation.

Types of Multi-Agent AI Systems Used in Higher Education Ops

Multi-agent AI systems in higher education are not one-size-fits-all solutions. Different operational challenges require different agent architectures, coordination patterns, and specialization levels. Understanding the taxonomy of these systems is essential for institutional leaders and IT decision-makers who need to select, build, or deploy the right agentic solution for their specific needs.

1. Hierarchical Multi-Agent Systems

Hierarchical systems operate like a traditional university organizational chart. A single “Supervisor Agent” coordinates and delegates tasks to subordinate specialist agents, which report back with results. The supervisor maintains the overall context and makes final decisions.

How They Work in Higher Education

- The Supervisor Agent: Sits at the top, receives the student or staff query, and creates a workflow plan

- Middle-Tier Agents: Department-level coordinators (e.g., College of Arts & Sciences Agent)

- Worker Agents: Specialized function agents at the bottom (e.g., Course Eligibility Agent, Prerequisite Checker Agent)

Ideal Use Cases

- University-Wide Student Portals: One central interface that needs to route queries to the correct department

- Centralized Advising Systems: Where a primary advisor agent coordinates with financial aid, registration, and career services specialists

- New Student Onboarding: Guiding students through a sequential, multi-step enrollment process

This architecture creates clear chains of command and responsibility, making it easier to audit interactions and trace decisions back through the system. The structure naturally aligns with how most universities are already organized, with clear departmental hierarchies and reporting lines.

2. Heterarchical Multi-Agent Systems

In heterarchical systems, all agents are considered peers with equal standing. They communicate directly with each other, negotiate tasks, and form temporary collaborations to solve problems. There is no permanent supervisor.

How They Work in Higher Education

- Agents discover each other’s capabilities through a registry service

- They negotiate task assignments based on current workload and expertise

- Temporary hierarchies form for specific tasks and dissolve when completed

Ideal Use Cases

- Research Collaboration Platforms: Where faculty agents from different departments need to share data and coordinate grant applications

- Cross-Departmental Problem Solving: Issues that require spontaneous collaboration between multiple offices

- Distributed Campus Systems: Large universities with semi-autonomous colleges and departments

This pattern creates highly resilient systems with no single point of failure. The architecture is inherently flexible and adaptive, making it ideal for problems that require input from multiple, unpredictable stakeholder combinations.

3. Orchestrator-Worker Multi-Agent Systems

This popular pattern features a dynamic “Orchestrator Agent” that receives a task, determines which specialist workers are needed, and manages their execution. Unlike a fixed hierarchy, the orchestrator adapts its team composition for each request.

How They Work in Higher Education

- The Orchestrator uses its understanding of the query to build a custom workflow

- It hires specialist agents on the fly for specific sub-tasks

- It monitors progress and may adjust the team if new needs emerge

Ideal Use Cases

- Complex Student Appeals: Where the needed departments vary by appeal type

- Personalized Learning Pathways: Assembling custom support teams for individual students

- Event-Driven Responses: Reacting to unexpected situations like academic probation or financial aid disruptions

4. Debate & Negotiation Multi-Agent Systems

These systems feature multiple agents that take different perspectives on a problem, debate the merits of various solutions, and reach consensus through structured argumentation. They are designed specifically to improve accuracy and reduce hallucinations.

How They Work in Higher Education

- Multiple agents receive the same query but with different personas or system prompts

- They research independently using their assigned knowledge sources

- They engage in structured debates, challenging each other’s findings

- A moderator agent or voting mechanism determines the final response
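
The voting step can be illustrated with a small sketch. It assumes each agent has already produced a short categorical answer; the agent names, the answers, and the strict-majority rule are illustrative assumptions, and a real system might weight agents or use a moderator model instead.

```python
from collections import Counter
from typing import Optional

def majority_answer(answers: dict, quorum: float = 0.5) -> Optional[str]:
    """Return the answer backed by more than `quorum` of the agents.

    Returns None when no answer clears the bar, which a caller could treat
    as a signal to escalate to a human reviewer.
    """
    if not answers:
        return None
    top_answer, votes = Counter(answers.values()).most_common(1)[0]
    return top_answer if votes / len(answers) > quorum else None

# Hypothetical personas checking the same graduation requirement.
votes = {
    "catalog_agent": "requirement met",
    "policy_agent": "requirement met",
    "skeptic_agent": "requirement not met",
}
print(majority_answer(votes))
```

Returning `None` on a split vote keeps disagreement visible rather than silently picking a side.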

Ideal Use Cases

- Financial Aid Award Calculations: Where accuracy is paramount and errors have serious consequences

- Degree Audit and Graduation Checks: Ensuring all requirements are correctly interpreted

- Transfer Credit Evaluations: Complex determinations that require multiple perspectives

- Policy Interpretations: When university policies are ambiguous or have exceptions

5. Observer-Executor Multi-Agent Systems

These systems are designed for continuous monitoring and proactive intervention. Observer agents constantly analyze data streams for patterns and anomalies, while executor agents take action when specific conditions are detected.

How They Work in Higher Education

- Observer agents run continuously in the background, monitoring institutional data

- They compare current patterns against baseline models

- When anomalies exceed thresholds, they trigger executor agents

- Executor agents initiate outreach, interventions, or escalations
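
A minimal sketch of this observe-then-act loop is shown below. The metric (weekly logins), the z-score threshold of 2.0, and the function names are assumptions chosen for illustration; real baseline models are usually richer than a mean and standard deviation.

```python
from statistics import mean, stdev

def is_anomalous(baseline: list, current: float, z_threshold: float = 2.0) -> bool:
    """Flag `current` when it deviates from the baseline by more than z_threshold sigmas.

    `baseline` needs at least two data points for stdev to be defined.
    """
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return current != mu
    return abs(current - mu) / sigma > z_threshold

def observe(student_id: str, baseline_logins: list, logins_this_week: float, executor) -> bool:
    """Observer step: trigger the executor callback only when an anomaly is found."""
    if is_anomalous(baseline_logins, logins_this_week):
        executor(student_id, "engagement deviated from baseline")
        return True
    return False

def outreach_executor(student_id: str, reason: str) -> None:
    # Hypothetical executor: a real one might open an advising case.
    print(f"Outreach queued for {student_id}: {reason}")

observe("s-001", [10, 12, 11, 9, 10], 2, outreach_executor)
```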

Ideal Use Cases

- Early Alert and Retention Systems: Detecting at-risk students before they fail

- Enrollment Funnel Monitoring: Tracking applicants through the admissions process

- Facility and Operations Management: Monitoring campus systems for issues

- Compliance Monitoring: Ensuring FERPA, Title IX, and other regulatory compliance

Observer-executor systems enable true proactive support operating 24 hours a day without human fatigue. They identify patterns and anomalies that human observers might miss, creating opportunities for early intervention before small issues become crises.

6. Federated Multi-Agent Systems

Federated systems connect agents across different institutions or secure data environments. Agents can collaborate without sharing raw data by using techniques such as federated learning and secure multi-party computation.

How They Work in Higher Education

- Each institution maintains its own agents and data within its security boundary

- Agents can query anonymized insights from other institutions

- They can participate in collective model training without exposing sensitive data

- Cross-institutional workflows are possible with strict privacy controls

Ideal Use Cases

- Consortium Research Projects: Multi-university research collaborations

- Transfer Student Pathways: Automating credit transfers between institutions

- Benchmarking and Analytics: Comparing institutional metrics without sharing raw data

- Shared Services: Small colleges sharing AI capabilities through consortia

7. Hybrid Multi-Agent Systems

Most real-world higher education deployments use hybrid architectures that combine elements from multiple patterns. These systems adapt their coordination style based on the task type, urgency, and available agents.

How They Work in Higher Education

- Simple queries use lightweight and direct agent-to-agent communication

- Complex workflows trigger orchestrator-based coordination

- High-stakes decisions invoke debate patterns for verification

- Background monitoring uses observer agents continuously

- The system dynamically selects the optimal pattern for each situation

Ideal Use Cases

- Comprehensive Student Success Platforms: Handling everything from simple questions to crisis interventions

- University-Wide Digital Transformation: Replacing multiple legacy systems with an integrated AI layer

- Future-Ready Institutions: Building flexible infrastructure that can adapt to emerging needs

How to Develop a Multi-Agent AI System for Higher Education Ops?

Developing a multi-agent AI system for higher education ops starts with a secure orchestration layer that defines agent communication and data access. Each agent should be department-scoped so that workflows can run autonomously while governance remains intact.

We have delivered multiple multi-agent AI systems for higher education ops, and here is our structured approach.

1. Architecture Design

We start by defining how agents will coordinate across the institution. Depending on governance needs, we design either a centralized orchestrator or a choreographed agent model. We establish secure communication protocols, strict access controls, and compliance-first message routing to protect student and financial data. This ensures the system is structurally sound before intelligence is layered in.

2. Domain Agents

We build scoped agents aligned to departmental responsibilities. Admissions agents integrate with CRM systems, financial aid agents connect to rule engines, registrar agents enforce prerequisite logic, and compliance agents validate outputs against verified policy repositories. Each agent operates within clearly defined data boundaries to meet regulatory standards.

3. Workflow State

Because university processes extend across semesters, we implement event-driven architectures and workflow state machines. Persistent memory layers combining vector and structured databases maintain context and transactional history. This allows enrollment tracking, audits, and academic progression monitoring to remain consistent over time.

4. Review Protocol

To protect institutional credibility, we deploy a drafting agent paired with a compliance auditor agent. Outputs are validated through structured verification checks and conflict-resolution logic between agents. This reviewer-critic framework prevents policy misalignment and strengthens governance oversight.

5. Smart Routing

We implement a router agent that classifies intent and selects the appropriate model tier. Smaller models handle routine queries, while larger models process complex academic or financial cases. Human escalation triggers are embedded for sensitive situations, ensuring administrative control remains intact.
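
The routing decision can be sketched as follows. The keyword lists, the tier names, and the `RoutingDecision` type are hypothetical; a production router would more likely use a small classifier model than substring matching.

```python
from dataclasses import dataclass

# Assumed trigger terms, for illustration only.
SENSITIVE_TERMS = ("appeal", "disability", "harassment", "withdrawal")
COMPLEX_TERMS = ("financial aid", "transfer credit", "degree audit")

@dataclass
class RoutingDecision:
    tier: str          # "small" or "large" model tier
    needs_human: bool  # True when a staff member must review the case

def route(query: str) -> RoutingDecision:
    text = query.lower()
    # Sensitive cases always go to the larger model and a human reviewer.
    if any(term in text for term in SENSITIVE_TERMS):
        return RoutingDecision(tier="large", needs_human=True)
    # Complex academic or financial cases use the larger model.
    if any(term in text for term in COMPLEX_TERMS):
        return RoutingDecision(tier="large", needs_human=False)
    # Everything else is treated as routine.
    return RoutingDecision(tier="small", needs_human=False)

print(route("When does the library close?"))
print(route("I want to appeal my financial aid decision"))
```

Checking the sensitive terms first means an escalation trigger can never be masked by a cheaper routing rule.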

6. Multi-Modal Fusion

We integrate voice processing, document OCR, and structured data extraction into the agent ecosystem. Event triggers connect these capabilities so transcripts, calls, and policy documents flow seamlessly between agents. This creates a unified, format-agnostic operational system for higher education environments.

Multi-Agent AI System Managing Long-Running Workflows

A multi-agent AI system manages long-running workflows by persistently storing state and restoring context across sessions. It can reliably resume processes that span weeks or semesters without losing progress. This allows universities to efficiently automate complex operations while maintaining compliance and accuracy.

1. The Challenge of Temporal Workflows

Higher education presents unique challenges for AI systems because its core processes are inherently long-running and state-dependent.

The Temporal Spectrum of Educational Workflows

| Workflow Duration | Example Processes | Key Challenges |
| --- | --- | --- |
| Minutes to Hours | Course registration, transcript requests | Real-time system integration |
| Days to Weeks | Transfer credit evaluation, financial aid appeals | Maintaining interim state, document collection |
| Weeks to Months | Admissions review cycles, semester progression tracking | Cross-session memory, policy changes |
| Semesters to Years | Degree audits, persistence monitoring, and graduation clearance | Longitudinal context, requirement evolution |

A student might begin a transfer credit evaluation in March, upload documents piecemeal through April, receive decisions in May, and finally enroll in June, all while interacting with different agents across multiple channels.

The system must remember every interaction, maintain progress across each step, and pick up exactly where it left off after weeks of silence.

Traditional chatbots fail at this task because they treat each interaction as isolated. Multi-Agent systems succeed because they are designed with persistence, state machines, and long-term memory as foundational elements.

2. Persistent State Management

At the heart of any long-running Multi-Agent system is the ability to maintain state across sessions, interruptions, and extended time gaps.

Session Persistence Across Extended Timeframes

Modern agentic platforms recognize that educational workflows cannot be confined to single chat sessions.

Amazon Bedrock AgentCore Runtime, for example, provisions dedicated microVMs that can persist for up to 8 hours, enabling sophisticated multi-step workflows in which each subsequent call builds on accumulated context and state from previous interactions.

But eight hours is not enough for workflows that span weeks. For truly long-running processes, the system must write state to durable storage that survives beyond the lifetime of any single agent instance.

State Machine Architecture for Educational Workflows

The University of Kentucky’s SmartState system offers a compelling model for managing extended educational workflows using finite-state machines.

FSMs model system behavior as a series of transitions between well-defined states, where specific conditions must be met for a transition to occur. Once an FSM is created, the paths defined in the graph are fixed and cannot be deviated from.

For a transfer credit evaluation workflow, the state machine might include:

- State 1: Document Intake. Waiting for transcript upload

- State 2: Document Processing. OCR and data extraction in progress

- State 3: Evaluation Pending. Awaiting departmental review

- State 4: Decision Rendered. Credits awarded or denied

- State 5: Appeal Window. Student can contest the decision (14-day timer)

Each state transition is triggered by a specific event: a document upload, completion of automated processing, a manual reviewer action, or a timer expiration. The state machine ensures that no step is skipped and that the workflow progresses logically.
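
The five states above map naturally onto a transition table. The sketch below is an illustration of the general finite-state machine idea, not SmartState's actual implementation; the event names and the `TransferCreditWorkflow` class are hypothetical.

```python
from enum import Enum

class State(Enum):
    DOCUMENT_INTAKE = 1
    DOCUMENT_PROCESSING = 2
    EVALUATION_PENDING = 3
    DECISION_RENDERED = 4
    APPEAL_WINDOW = 5

# (current state, event) -> next state. Event names are assumptions.
TRANSITIONS = {
    (State.DOCUMENT_INTAKE, "document.uploaded"): State.DOCUMENT_PROCESSING,
    (State.DOCUMENT_PROCESSING, "processing.completed"): State.EVALUATION_PENDING,
    (State.EVALUATION_PENDING, "review.completed"): State.DECISION_RENDERED,
    (State.DECISION_RENDERED, "decision.sent"): State.APPEAL_WINDOW,
}

class TransferCreditWorkflow:
    def __init__(self) -> None:
        self.state = State.DOCUMENT_INTAKE
        self.history = [self.state]  # kept for auditing

    def handle(self, event: str) -> State:
        key = (self.state, event)
        if key not in TRANSITIONS:
            # Unknown or out-of-order events are rejected, so no step is skipped.
            raise ValueError(f"Event {event!r} is not allowed in state {self.state.name}")
        self.state = TRANSITIONS[key]
        self.history.append(self.state)
        return self.state

workflow = TransferCreditWorkflow()
workflow.handle("document.uploaded")
print(workflow.state.name)
```

Because every allowed path is listed in `TRANSITIONS`, an attempt to jump from intake straight to a decision raises an error instead of corrupting the workflow.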

Automatic State Persistence

For long-running workflows, it’s critical to survive infrastructure failures. SmartState addresses this through automatic checkpointing.

SmartState automatically saves its internal state to stable storage every 15 minutes, safeguarding against data loss during server and network disruptions.

This means that even if a server crashes mid-process, the system can restore the exact state from the last checkpoint and continue without losing progress, which is essential for workflows that may have taken weeks to reach their current point.
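
The general checkpoint-and-restore idea can be sketched with the Python standard library. This is not SmartState's code: the JSON file format, the function names, and the write-then-rename strategy are assumptions chosen to show one common way to avoid half-written checkpoints.

```python
import json
import os
import tempfile

def save_checkpoint(path: str, state: dict) -> None:
    """Write state to a temp file, then atomically swap it into place."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(state, handle)
        handle.flush()
        os.fsync(handle.fileno())  # push the bytes to disk before renaming
    os.replace(tmp_path, path)     # readers see the old or the new file, never a partial one

def load_checkpoint(path: str, default: dict) -> dict:
    """Return the last saved state, or `default` if no checkpoint exists yet."""
    try:
        with open(path, encoding="utf-8") as handle:
            return json.load(handle)
    except FileNotFoundError:
        return default

if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as folder:
        checkpoint = os.path.join(folder, "workflow.json")
        save_checkpoint(checkpoint, {"state": "EVALUATION_PENDING"})
        print(load_checkpoint(checkpoint, {}))
```

A scheduler could call `save_checkpoint` on a fixed interval or at each state transition, depending on how much rework is acceptable after a crash.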

3. Multi-Layer Memory Architecture

Long-running workflows require memory that operates at multiple timescales.

Short-Term Memory for Session Context

Short-term memory maintains context within a single interaction or across closely spaced interactions. It tracks the current conversation, recent queries, and immediate objectives. This is typically implemented through conversation buffers that keep recent messages in context windows.

Long-Term Memory for Cross-Semester Continuity

For workflows spanning weeks or semesters, agents need long-term memory that persists across sessions and even across different agent types.

Amazon Bedrock AgentCore Memory provides managed, context-aware memory services for both short-term and long-term retention.

This enables AI learning assistants and advisors to remember individual student progress, student preferences, semantic facts, interactions, and educational journeys over time, so every interaction is tailored for sustained learning success.

When a student returns after a three-week absence to check on their financial aid appeal, the agent can immediately recall the entire history (which documents were submitted, which steps remain, and any notes from previous interactions) without the student needing to repeat themselves.

Memory Types in Agentic Systems

| Memory Type | Duration | Purpose | Implementation |
| --- | --- | --- | --- |
| Episodic Memory | Session | Recall recent conversations | Conversation buffers |
| Semantic Memory | Persistent | Store facts about student | Vector databases |
| Procedural Memory | Permanent | Workflow rules and policies | State machines, policy engines |
| Working Memory | Minutes | Current task context | In-memory session state |

4. Orchestration Patterns for Extended Workflows

Managing long-running workflows requires sophisticated orchestration to coordinate multiple agents over extended timeframes.

The Orchestrator-Worker Pattern

In this pattern, a central orchestrator agent maintains the overall workflow state and delegates specific tasks to specialized worker agents. The orchestrator persists between sessions, while workers are instantiated as needed for specific subtasks.

For a degree audit workflow spanning multiple semesters:

- Orchestrator maintains the student’s complete academic record and degree requirements

- The Course Completion Agent updates the record each semester with new grades

- The Requirement Checker Agent periodically evaluates progress against degree requirements

- Advisor Alert Agent notifies human advisors when students approach critical milestones

The orchestrator ensures that even though different agents handle different tasks at different times, the overall workflow remains coherent and consistent.

Event-Driven Architecture for Asynchronous Workflows

Long-running workflows are inherently asynchronous. Students upload documents at 2 AM; financial aid officers approve appeals three days later; semester boundaries trigger new requirement calculations.

An event-driven architecture using publish-subscribe patterns enables agents to respond to asynchronous events without polling.

When a document is uploaded, a document.uploaded event is published. The Document Processing Agent subscribes to this event and begins extraction. When extraction completes, a document.processed event triggers the Evaluation Agent. This decoupling allows workflows to unfold over time without requiring synchronous connections between steps.
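
The event chain described above can be sketched with a tiny in-process event bus. This is a simplification: a real deployment would typically use a message broker so events survive restarts, and the `EventBus` class, handler names, and payload shape are hypothetical.

```python
from collections import defaultdict

class EventBus:
    """Minimal synchronous publish-subscribe bus (in-process only)."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)

    def subscribe(self, topic: str, handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: dict) -> None:
        # Copy the list so handlers may subscribe during delivery.
        for handler in list(self._subscribers[topic]):
            handler(payload)

bus = EventBus()
log = []

def document_processing_agent(payload: dict) -> None:
    log.append(f"extracting {payload['document_id']}")
    # When extraction finishes, announce it; this agent does not know who listens.
    bus.publish("document.processed", payload)

def evaluation_agent(payload: dict) -> None:
    log.append(f"evaluating {payload['document_id']}")

bus.subscribe("document.uploaded", document_processing_agent)
bus.subscribe("document.processed", evaluation_agent)

bus.publish("document.uploaded", {"document_id": "transcript-001"})
print(log)
```

Neither agent calls the other directly. Each reacts to a topic, which is what lets steps happen hours or days apart.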

5. Checkpointing and Recovery Mechanisms

For workflows that span weeks, system reliability becomes paramount. Agents must be designed to recover gracefully from failures without losing progress.

Persistent Agent Group State

The Concordia agent framework demonstrates an approach where agent groups can persist their membership and state to durable storage.

An agent group may optionally save its membership to persistent storage and restore it upon restart. In this way, an agent group’s membership survives even when the group fails.

In higher education, this means that even if the entire agent system is restarted for maintenance or recovers from a crash, every in-progress workflow (each transfer credit evaluation, financial aid appeal, and persistence monitoring thread) resumes exactly where it left off.

Proxy Patterns for High Reliability

For mission-critical workflows, proxy patterns provide an additional layer of reliability.

A proxy for an agent group maintains a persistent reference to the group. If the agent group fails, the proxy will be unable to communicate with it and will acquire a new reference to it.

This shields higher-level workflows from the failure of individual components. If the Financial Aid Agent group goes down, the proxy automatically reconnects to a new instance, and the overall enrollment workflow continues uninterrupted.
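
A generic version of this proxy idea is sketched below. It does not reproduce any specific framework's API: the class names, the `factory` callback used to obtain a fresh group, and the retry limit are all illustrative assumptions.

```python
class AgentGroupUnavailable(Exception):
    """Raised when an agent group cannot be reached."""

class FinancialAidGroup:
    # Hypothetical agent group; `healthy=False` simulates a crashed instance.
    def __init__(self, healthy: bool = True) -> None:
        self.healthy = healthy

    def handle(self, request: str) -> str:
        if not self.healthy:
            raise AgentGroupUnavailable("group is down")
        return f"processed: {request}"

class AgentGroupProxy:
    """Holds a reference to a group and re-acquires it when a call fails."""

    def __init__(self, factory, max_attempts: int = 2) -> None:
        self._factory = factory          # callable that returns a live group
        self._max_attempts = max_attempts
        self._group = factory()

    def handle(self, request: str) -> str:
        for _ in range(self._max_attempts):
            try:
                return self._group.handle(request)
            except AgentGroupUnavailable:
                # Swap in a new reference, then retry on the next loop pass.
                self._group = self._factory()
        raise AgentGroupUnavailable(f"gave up after {self._max_attempts} attempts")

# Simulate one failed instance followed by a healthy replacement.
instances = iter([FinancialAidGroup(healthy=False), FinancialAidGroup(healthy=True)])
proxy = AgentGroupProxy(lambda: next(instances))
print(proxy.handle("appeal-42"))
```

Callers only ever talk to the proxy, so the failure of the first instance is invisible to the enrollment workflow.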

Checkpoint Granularity

The appropriate checkpoint frequency depends on the cost of losing work versus the performance impact of saving state. For most educational workflows, checkpointing at key transition points (after document upload, before human review, and after decision rendering) provides an optimal balance.

6. Temporal Awareness

Long-running workflows often require actions to occur at specific times or after elapsed intervals.

Scheduled Automations

OpenAI’s Codex app demonstrates how scheduled automations can handle routine tasks that recur over extended periods: repetitive or routine tasks run in the background, with results delivered to a review queue.

In higher education, scheduled automations might include:

- Weekly persistence checks: Observer agents analyze engagement metrics every Sunday

- Monthly progress reports: Degree audit agents generate summaries for advisors

- Semester boundary triggers: Course completion agents finalize grades and update records

- Deadline reminders: Financial aid agents notify students of approaching cutoff dates

Timer-Based State Transitions

State machines can incorporate timers to automatically transition between states when time thresholds are reached. For example:

- If a student hasn’t uploaded the required documents within 14 days, the Financial Aid Agent sends a reminder and extends the deadline by 7 days

- If there is no response after 21 days, the workflow escalates to a human advisor for follow-up

- After 30 days of inactivity, the application is archived with notification to the student

These timer-driven transitions ensure that workflows do not stall indefinitely and that students receive appropriate follow-up without requiring constant human oversight.
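
The three thresholds above can be expressed as a small decision function. The sketch assumes all intervals are measured from the date the documents were first requested; the function name and the action labels are hypothetical.

```python
from datetime import date

def next_timer_action(requested_on: date, today: date) -> str:
    """Map days elapsed since the document request to a workflow action."""
    elapsed = (today - requested_on).days
    if elapsed >= 30:
        return "archive_and_notify"        # application archived, student notified
    if elapsed >= 21:
        return "escalate_to_advisor"       # human follow-up
    if elapsed >= 14:
        return "send_reminder_and_extend"  # reminder plus 7-day extension
    return "wait"

requested = date(2025, 3, 1)
for check_day in (date(2025, 3, 10), date(2025, 3, 15), date(2025, 3, 22), date(2025, 3, 31)):
    print(check_day.isoformat(), next_timer_action(requested, check_day))
```

A scheduled job could run this check daily for every open workflow and publish the resulting action as an event.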

How Does a Multi-Agent AI System Manage Decision Accountability?

A multi-agent AI system manages accountability by securely logging each agent’s action and data input in an auditable decision trace. Every output should be explainable through technical attribution so administrators can clearly review the reasoning. Human validation must oversee high-impact decisions, which ensures responsibility is properly assigned and compliance is consistently maintained.

1. The Accountability Imperative

Accountability in Multi-Agent systems is fundamentally different from accountability in traditional software or even single-agent AI chatbots. When multiple specialized agents collaborate autonomously to make decisions, the chain of responsibility becomes distributed and potentially opaque.

Why Accountability Matters

| Stakeholder | Accountability Concern |
| --- | --- |
| Students | “Who do I appeal to if an agent denies my financial aid or misadvises me on graduation requirements?” |
| Faculty & Advisors | “Am I responsible for decisions made by AI agents that I supervise?” |
| Administrators | “How do I demonstrate compliance with FERPA, accreditation standards, and institutional policies?” |
| Regulators | “Can the institution prove that its AI systems make fair, unbiased, and well-documented decisions?” |
| IT Leadership | “How do we audit and debug decisions when something goes wrong?” |

The Association for Learning Technology emphasizes that accountability in educational AI requires moving “from compliance to confidence” by building systems that not only meet regulatory requirements but also earn the trust of all stakeholders through transparency and verifiability.

The Governance Gap

As one education consultant notes, “AI is no longer a future concept because it already plans lessons, marks work, and writes feedback. Yet few colleges can say who, at board level, owns the ethical, operational or learner-impact risks of these systems”.

This governance gap represents the single greatest vulnerability in educational AI deployments.

The solution lies in architecting accountability directly into Multi-Agent systems, creating what researchers call “auditable agentic workflows” where every decision leaves a trace that can be examined, explained, and attributed.

2. Architectural Foundations for Accountability

Accountability cannot be retrofitted to Multi-Agent systems. It must be built into their core architecture from the ground up.

The Four Pillars of Accountable Agentic AI

| Pillar | Description | Key Mechanisms |
| --- | --- | --- |
| Traceability | Every decision can be traced back to its originating agent and contributing factors | Decision traces, agent attribution, input logging |
| Explainability | The rationale for decisions can be understood by humans | XAI methods (SHAP, LIME), natural language explanations |
| Auditability | Decisions can be verified independently after the fact | Immutable records, cryptographic hashing, timestamping |
| Attributability | Responsibility for decisions can be assigned to specific agents and human overseers | Agent identities, human-in-the-loop logs, override tracking |

The Blockchain-Assisted Approach

One of the most promising architectures for accountable AI comes from research on Blockchain-Assisted Explainable Decision Traces (BAXDT). This approach creates comprehensive, immutable records for each AI decision by integrating:

“Model outputs, SHAP-based XAI summaries, a novel Explanation Density Metric, and detailed model/data context into a unified JSON trace. The BAXDT architecture leverages blockchain by recording a cryptographic hash of each decision trace on-chain, while the full trace is stored off-chain”.

When a Multi-agent system makes a high-impact academic decision, it can create a secure, tamper-proof trace of the data and model logic used.

A blockchain hash may protect the integrity of that record while auditors can still access the full details off-chain. Validated frameworks like BAXDT can reliably support accountable decisions in student performance and other critical areas.
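
The hash-on-chain, trace-off-chain split can be illustrated without any blockchain at all. The sketch below only shows the fingerprinting and verification steps; the trace fields, the use of SHA-256, and the canonical JSON encoding are assumptions, since the source does not specify BAXDT's exact scheme.

```python
import hashlib
import json

def trace_hash(trace: dict) -> str:
    """Fingerprint a decision trace using a canonical JSON encoding."""
    # sort_keys makes the hash independent of key insertion order.
    canonical = json.dumps(trace, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()

def verify_trace(trace: dict, recorded_hash: str) -> bool:
    """Check an off-chain trace against the hash that was recorded earlier."""
    return trace_hash(trace) == recorded_hash

# Hypothetical decision trace; field names are illustrative.
decision_trace = {
    "agent": "financial_aid_agent",
    "decision": "approved",
    "inputs": {"enrollment_status": "full_time"},
    "reviewed_by": "advisor-17",
}
recorded = trace_hash(decision_trace)  # this value would be written on-chain

tampered = dict(decision_trace, decision="denied")
print(verify_trace(decision_trace, recorded), verify_trace(tampered, recorded))
```

Only the short hash needs to live in the immutable ledger. Any later change to the stored trace produces a different hash and fails verification.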

3. The Human-in-the-Loop

No matter how sophisticated Multi-Agent systems become, accountability ultimately rests with humans. The most effective architectures, therefore, build human oversight into the decision workflow, not as an afterthought but as an integral component.

The AdvisingWise Model

Stanford University developed the AdvisingWise system to exemplify this principle in academic advising. This multi-agent framework automates time-consuming tasks like information retrieval and response drafting while preserving human oversight through a mandatory validation step:

“All system responses undergo human advisor validation before delivery to students”.

In user studies with academic advisors, this approach proved transformative. Advisors’ “initial concerns about reliability and personalization diminished” after using the system, as they retained final control over every communication sent to students.

The Moderation Loop Pattern

Research on orchestrated AI assistants for doctoral supervision proposes a similar pattern called the “student-initiated moderation loop”:

“Assistant outputs are routed to a supervisor for review and patching, while keeping authorship and final judgement with people”.

This pattern ensures that while AI agents handle the heavy lifting of research, synthesis, and drafting, human supervisors maintain accountability for final decisions and communications.
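The review-and-patch step described above can be sketched as a simple gate in Python. This is an illustrative sketch, not code from AdvisingWise or the doctoral supervision research: the `Draft` class and the `review` and `deliver` functions are hypothetical names. The point is that nothing reaches a student until a named human has approved it, and the reviewer can patch the text before release.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Draft:
    """An agent-written response waiting for human validation."""
    student_id: str
    agent_text: str
    status: Status = Status.PENDING
    final_text: Optional[str] = None
    reviewer: Optional[str] = None


def review(draft, reviewer, approve, patched_text=None):
    """Record the human decision; the reviewer may patch the text on approval."""
    draft.reviewer = reviewer
    if approve:
        draft.status = Status.APPROVED
        draft.final_text = patched_text if patched_text is not None else draft.agent_text
    else:
        draft.status = Status.REJECTED
    return draft


def deliver(draft):
    """Refuse to send anything that a human has not approved."""
    if draft.status is not Status.APPROVED:
        raise PermissionError("Draft has not been approved by a human reviewer")
    return f"To {draft.student_id}: {draft.final_text}"


draft = Draft(student_id="s-1024", agent_text="You need 12 more credits to graduate.")
review(
    draft,
    reviewer="advisor_kim",
    approve=True,
    patched_text="You need 12 more credits, including one lab science.",
)
print(deliver(draft))
```

Keeping both `agent_text` and `final_text` on the record preserves what the agent proposed alongside what the human actually sent, which is useful when reconstructing a decision later.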

The Laila AI Dualistic Governance Model

The Laila AI system implemented at the Arab Academy for Science and Technology demonstrates what researchers call a “dualistic model of governance”. This approach combines:

- Independent decision reasoning: Agents autonomously process information and generate recommendations

- Built-in moral control: Human reviewers must approve consequential actions.

The results are striking. In the Laila AI deployment, “override and justification features provided active involvement of human reviewers, which supported the ethical dimension of governance”. Stakeholders reported “perceived gain in fairness, explainability, and trust” precisely because they knew humans were ultimately accountable.
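One way to picture override and justification features is the Python sketch below. It is an assumption-laden illustration rather than the Laila AI implementation: the `CONSEQUENTIAL` action names, the `ReviewEntry` record, and the `finalize` function are all hypothetical. Agents recommend freely, but consequential actions require a human reviewer, and any override must carry a written justification that is logged.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical list of actions that always require human sign-off.
CONSEQUENTIAL = {"deny_admission", "revoke_aid", "academic_dismissal"}


@dataclass(frozen=True)
class ReviewEntry:
    action: str
    agent_recommendation: str
    final_decision: str
    reviewer: str
    justification: str
    overridden: bool
    reviewed_at: str


review_log = []


def finalize(action, agent_recommendation, reviewer=None,
             human_decision=None, justification=""):
    """Return the final decision, enforcing review for consequential actions."""
    if action not in CONSEQUENTIAL:
        return agent_recommendation  # low-stakes: the agent acts on its own
    if reviewer is None:
        raise PermissionError(f"'{action}' requires a human reviewer")
    final = human_decision if human_decision is not None else agent_recommendation
    overridden = final != agent_recommendation
    if overridden and not justification.strip():
        raise ValueError("An override must include a justification")
    review_log.append(ReviewEntry(
        action=action,
        agent_recommendation=agent_recommendation,
        final_decision=final,
        reviewer=reviewer,
        justification=justification,
        overridden=overridden,
        reviewed_at=datetime.now(timezone.utc).isoformat(),
    ))
    return final


decision = finalize(
    "revoke_aid",
    agent_recommendation="revoke",
    reviewer="dean_hassan",
    human_decision="retain",
    justification="Student has a documented medical leave on file.",
)
print(decision, review_log[-1].overridden)
```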

4. Explainability

For accountability to be meaningful, decisions must be explainable. This applies not just to technical experts but to students, advisors, and regulators.

SHAP-Based Explanations

The BAXDT framework employs SHAP (SHapley Additive exPlanations) to quantify how individual inputs influence specific decisions.

For a financial aid decision, for example, SHAP values would reveal how factors such as family income, enrollment status, and academic standing contributed to the final award.
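The sketch below shows the underlying idea on a toy award rule. Everything in it is an assumption for illustration: the `award` formula, the feature names, and the baseline applicant are invented, and the code computes exact Shapley values by enumerating feature orderings rather than calling the SHAP library, which approximates these values for real models. Exact enumeration is only practical for a handful of features.

```python
from itertools import permutations


def award(f):
    """Toy award rule (hypothetical): need-based amount, a full-time bonus,
    and a merit component that only applies to full-time students."""
    need = max(0.0, 60000 - f["family_income"]) / 10
    bonus = 1000.0 if f["full_time"] else 0.0
    merit = 500.0 * max(0.0, f["gpa"] - 3.0) if f["full_time"] else 0.0
    return need + bonus + merit


def shapley_values(model, instance, baseline):
    """Average each feature's marginal contribution over all feature orderings.
    Features not yet 'switched on' keep their baseline value."""
    names = list(instance)
    orderings = list(permutations(names))
    totals = {name: 0.0 for name in names}
    for order in orderings:
        current = dict(baseline)
        previous = model(current)
        for name in order:
            current[name] = instance[name]
            value = model(current)
            totals[name] += value - previous
            previous = value
    return {name: totals[name] / len(orderings) for name in names}


baseline = {"family_income": 60000, "full_time": False, "gpa": 3.0}
applicant = {"family_income": 30000, "full_time": True, "gpa": 3.5}

contributions = shapley_values(award, applicant, baseline)
print(contributions)
# {'family_income': 3000.0, 'full_time': 1125.0, 'gpa': 125.0}
print(award(applicant) - award(baseline))  # 4250.0, the sum of the contributions
```

Because merit depends on both enrollment status and GPA, the 250 it adds is split evenly between those two features, while family income receives full credit for the need-based portion. The contributions always sum to the difference between the applicant's award and the baseline award.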

The Explanation Density Metric

A novel contribution of the BAXDT research is the Explanation Density Metric (EDM), which measures “how much of the total feature space is required to achieve 80% cumulative SHAP contribution in a given decision explanation”.

This metric serves as an indicator of the explanation’s density and, by extension, the interpretability of the system.

For higher education accountability, the EDM helps answer a critical question. Was this decision based on a few key factors that are transparent and easily explainable, or hundreds of subtle variables that are opaque and difficult to justify?
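A rough sketch of how such a metric could be computed is shown below. This is our reading of the quoted definition, not the formula from the BAXDT paper, which may differ in detail: we rank features by absolute SHAP value, count how many are needed to reach 80% of the total, and divide by the number of features. The function name and the sample values are hypothetical.

```python
def explanation_density(shap_values, threshold=0.8):
    """Fraction of features needed to reach `threshold` of total |SHAP| mass.
    Assumed interpretation of the quoted definition, not the paper's exact formula."""
    magnitudes = sorted((abs(v) for v in shap_values.values()), reverse=True)
    total = sum(magnitudes)
    if total == 0:
        return 0.0  # no feature contributed; nothing to explain
    cumulative = 0.0
    for count, magnitude in enumerate(magnitudes, start=1):
        cumulative += magnitude
        if cumulative >= threshold * total:
            return count / len(magnitudes)
    return 1.0


# Concentrated explanation: two of five features carry most of the decision.
concentrated = {
    "family_income": 3000,
    "full_time": 1125,
    "gpa": 125,
    "residency": 0,
    "age": 0,
}
print(explanation_density(concentrated))  # 0.4

# Diffuse explanation: every feature matters equally.
diffuse = {"f1": 1, "f2": 1, "f3": 1, "f4": 1}
print(explanation_density(diffuse))  # 1.0
```

Under this reading, a lower value means a small share of the features accounts for most of the decision, which is the easier case to justify to a student or regulator.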

Natural Language Explanations

Beyond quantitative metrics, accountable multi-agent systems must generate human-readable explanations. The AdvisingWise system, for instance, produces responses that advisors can review and modify before sending to students, ensuring that explanations are both accurate and comprehensible.

5. Digital Footprints

Accountability requires the ability to reconstruct decisions months or even years after they were made. This demands sophisticated traceability mechanisms.

Agent Attribution

Every decision in an accountable multi-agent system must be attributable to the specific agent or agents that produced it. As Chinese universities implementing campus-wide agentic systems have discovered, “establishing clear ‘digital footprints’ for agents is essential for locating the root cause of problems”.

When a student receives incorrect advising about graduation requirements, the institution must be able to determine:

- Which agent generated the initial recommendation?

- Which knowledge sources did it consult?

- Which other agents contributed to the final response?

- Which human reviewers, if any, approved it?
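The four questions above map naturally onto a per-decision attribution record. The Python sketch below is one possible shape, with hypothetical names throughout (`AttributionRecord`, `log_footprint`, `root_cause_report`, and the sample agent and source identifiers); a production system would persist these records rather than hold them in memory.

```python
from dataclasses import dataclass, field


@dataclass
class AttributionRecord:
    """One 'digital footprint' per decision; field names are illustrative."""
    decision_id: str
    originating_agent: str
    knowledge_sources: list = field(default_factory=list)
    contributing_agents: list = field(default_factory=list)
    human_reviewers: list = field(default_factory=list)


footprints = {}


def log_footprint(record):
    """Index the record by decision ID so it can be found during an investigation."""
    footprints[record.decision_id] = record


def root_cause_report(decision_id):
    """Answer the four attribution questions for a single decision."""
    record = footprints[decision_id]
    return {
        "initial_recommendation_by": record.originating_agent,
        "knowledge_sources_consulted": record.knowledge_sources,
        "other_contributing_agents": record.contributing_agents,
        "approved_by": record.human_reviewers or ["no human review recorded"],
    }


log_footprint(AttributionRecord(
    decision_id="adv-7781",
    originating_agent="degree_audit_agent",
    knowledge_sources=["catalog_2023", "transfer_credit_policy_v4"],
    contributing_agents=["scheduling_agent"],
))
for question, answer in root_cause_report("adv-7781").items():
    print(f"{question}: {answer}")
```

Recording an explicit "no human review recorded" value matters as much as recording reviewer names, since the absence of oversight is itself a finding in a root-cause investigation.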

Input and Context Logging

Accountability extends beyond the decision itself to the context in which it was made. The BAXDT framework captures:

“Metadata about the model that generated the decision (such as the dataset name and characteristics, model type/version, and core training performance metrics) as well as contextual information specific to the decision itself (e.g., timestamp, input data hash)”.

This ensures that when auditors examine a decision years later, they can verify not only what was decided and why, but also what data and models were available at the time.
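As a small illustration of the context portion of a trace, the Python sketch below assembles model metadata together with a timestamp and an input data hash. The key names and sample values are assumptions loosely based on the quoted description, not the BAXDT schema. Hashing the inputs lets an auditor later confirm which data a decision used without storing raw student records in the trace itself.

```python
import hashlib
import json
from datetime import datetime, timezone


def input_hash(inputs):
    """SHA-256 of the inputs in a key-order-independent JSON form."""
    canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_context(model_meta, inputs, now=None):
    """Combine model metadata with decision-specific context."""
    now = now or datetime.now(timezone.utc)
    return {
        "model": dict(model_meta),
        "decision": {
            "timestamp": now.isoformat(),
            "input_data_hash": input_hash(inputs),
        },
    }


# All values below are made up for illustration.
model_meta = {
    "dataset_name": "retention_2019_2023",
    "model_type": "gradient_boosting",
    "model_version": "2.3.1",
    "training_metrics": {"auc": 0.87},
}
inputs = {"gpa": 3.5, "credits_attempted": 45, "credits_earned": 42}
context = build_context(model_meta, inputs)

# Years later, an auditor can check whether a claimed input matches the record.
print(context["decision"]["input_data_hash"] == input_hash(inputs))  # True
```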

Immutable Audit Trails

The cryptographic hashing approach in BAXDT ensures that once a decision trace is created, it cannot be altered without detection. This is crucial for regulatory compliance and for maintaining trust in the system over time.
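For teams that want tamper evidence before committing to a blockchain, a hash-chained log offers a similar guarantee on a smaller scale. To be clear, this is a generic technique we are swapping in for illustration, not the on-chain mechanism BAXDT describes. In the Python sketch below, each entry's hash covers the previous entry's hash, so altering any record breaks every link after it. The function names and payloads are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash, payload):
    """Hash the payload together with the previous entry's hash."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log, payload):
    """Append a record that is cryptographically linked to the one before it."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev, "payload": payload, "hash": entry_hash(prev, payload)})


def first_tampered_index(log):
    """Return the index of the first broken entry, or None if the chain is intact."""
    prev = GENESIS
    for index, entry in enumerate(log):
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["payload"]):
            return index
        prev = entry["hash"]
    return None


audit_log = []
append_entry(audit_log, {"decision_id": "fa-001", "award_usd": 4200})
append_entry(audit_log, {"decision_id": "fa-002", "award_usd": 1500})
append_entry(audit_log, {"decision_id": "fa-003", "award_usd": 0})
print(first_tampered_index(audit_log))  # None

audit_log[1]["payload"]["award_usd"] = 9500  # simulate an after-the-fact edit
print(first_tampered_index(audit_log))  # 1
```

A hash chain detects alteration but does not prevent someone with full write access from rebuilding the whole chain, which is why anchoring hashes on an external ledger provides a stronger guarantee.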

Conclusion

Multi-agent AI systems mark a clear shift in how higher education operations should be designed and managed. Institutions can move beyond reactive chat interfaces and gradually build coordinated agent networks that continuously analyze data and execute workflows with measurable impact on revenue, retention, and administrative efficiency. For enterprise leaders and platform builders, this may become a defining infrastructure decision, because those who invest early will likely set the operational benchmarks for the next-generation campus.

Looking to Build a Multi-Agent AI System for Higher Education Ops?

Idea Usher helps higher education institutions replace fragmented systems with intelligent Multi-Agent AI that orchestrates admissions, automates financial aid, and predicts student dropouts before they happen.

Why Us?

- 500,000+ hours of coding experience building production-ready AI systems

- Ex-MAANG and FAANG developers who have built at Google, Amazon, and Microsoft scale

- Multi-agent architects specializing in LangGraph, CrewAI, and enterprise RAG

What We Deliver

- Agentic Workflows that connect SIS, LMS, and CRMs and end the administrative runaround

- Persistence Engines that boost retention by catching at-risk students early

- Institutional Truth Layers with built-in FERPA compliance and hallucination guards

- Human-in-the-loop design so AI assists and never decides alone

Work with ex-MAANG developers to build next-gen apps. Schedule your consultation now.

FAQs

A1: A chatbot handles isolated questions and returns predefined or model-generated answers without a deeper operational context. A multi-agent AI system orchestrates multiple specialized agents that share memory and coordinate actions across departments, so it can autonomously execute workflows rather than simply respond to messages.

A2: A properly engineered multi-agent AI system can securely manage sensitive student data through role-based access control, encrypted data pipelines, audit logs, and embedded compliance agents that validate every action against FERPA and institutional policies. With strong governance layers in place, the system can reliably operate within strict regulatory boundaries.

A3: A focused pilot can typically be delivered within 8 to 12 weeks if the integration scope is clearly defined and core workflows are prioritized. Full-scale deployment may gradually extend beyond that depending on data readiness, legacy system complexity, and security validation cycles.

A4: Yes, a middleware orchestration layer can securely connect the agent network to existing SIS, LMS, and CRM platforms through APIs and event-driven connectors. This approach allows institutions to modernize operations without replacing their core systems while still enabling coordinated autonomous execution.