The creator economy promised independence, but it gradually created constant production pressure: you must publish frequently and adapt to algorithm shifts. Not everyone can stay on camera and manage filming, editing, and distribution every week. That imbalance created a production bottleneck even when creative capacity was strong.

More and more people have started using AI avatar video generation platforms because they can reduce production time, lower recurring studio costs, and maintain consistent on screen presence without physical recording. These systems can also support multilingual output and rapid content iteration, which makes scaling significantly easier.

We’ve developed numerous AI avatar video generation platforms that use technologies like multimodal generative AI architectures and neural rendering pipelines. As IdeaUsher has this expertise, this blog outlines the step by step process to develop an AI video generation platform the right way.

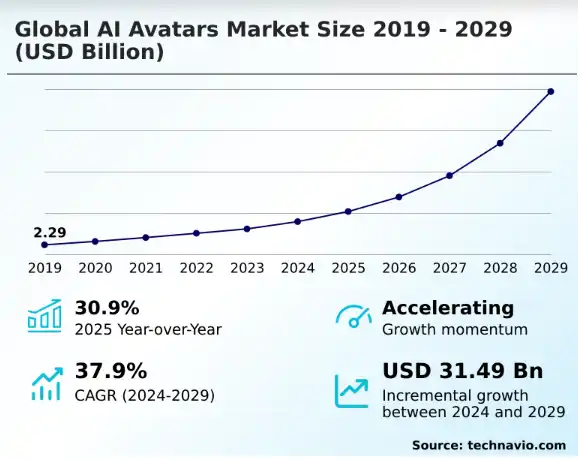

Key Market Takeaways for AI Avatar Video Generation Platform

According to Technavio, the AI avatar market is expanding at remarkable speed, with projections showing an additional USD 31.49 billion in growth at a CAGR of 37.9 percent between 2024 and 2029. This surge is closely tied to rapid advances in generative AI, which now make it possible to produce realistic digital presenters without studios, cameras, or actors. For many organizations, AI avatars are no longer experimental tools but practical infrastructure for scalable communication.

Source: Technavio

Demand is rising because businesses need consistent, multilingual, and personalized video content across marketing, training, and customer support. Platforms such as Synthesia and HeyGen have become enterprise favorites by offering lifelike avatars, accurate lip sync, and simple text to video workflows.

Their adoption reflects a broader shift toward digital humans across retail, edtech, and media, where speed and localization matter as much as production quality.

Strategic partnerships are further accelerating this growth. Synthesia has collaborated with DeepL to enable instant multilingual video creation, allowing companies to localize content at scale.

HeyGen has also partnered with Faye to integrate avatar driven video into CRM workflows, helping brands deliver personalized and on brand communication. These alliances show how ecosystem integration is becoming central to innovation in AI video technology.

What is an AI Avatar Video Generation Platform?

An AI avatar video generation platform is a system that uses artificial intelligence to create realistic or stylized digital avatars that can speak, present, or deliver content in video form without requiring a human to be physically recorded.

It typically combines generative AI models, text to speech engines, facial animation synthesis, and computer vision to convert written scripts into synchronized lip movements and natural expressions. Instead of traditional video production, you can generate scalable, personalized, and on demand videos by simply providing text inputs and selecting an avatar persona.

Key Features of an AI Avatar Video Generation Platform

An AI avatar video platform should convert scripts into realistic talking avatars with accurate lip sync and real time rendering. It can support voice cloning, multilingual output, and gesture control so content can scale efficiently. The best systems deliberately combine neural rendering with structured editing tools for fast and consistent production.

1. Text to Avatar Script Input

Users can paste a script and the platform will instantly convert it into a talking avatar video with synchronized speech and facial motion. The system should generate real time previews so iterations can happen quickly without manual editing, similar to how Synthesia handles script to video rendering. This feature significantly reduces the production cycle for training and marketing content.

2. Avatar Library Selection

Users can browse a diverse avatar library and preview each character delivering sample lines before selection. Smart filters should allow sorting by language support, realism level, and industry context, much like the structured avatar catalog in HeyGen. This makes personalization at scale practical for enterprise use cases.

3. Custom Avatar Creation

Users can upload a photo or short clip and the platform will generate a hyper realistic digital twin with matched facial structure and expressions. The system should allow refinement of clothing, posture, and background through structured prompts, similar to the custom avatar workflows offered by DeepBrain AI. This enables branded spokespersons without studio recording.

4. Voice Cloning and Selection

Users can record a short sample and the engine will clone the voice with accurate tone modeling and lip sync alignment. Alternatively, they can select from multiple synthetic voices and adjust pitch, speed, and emotional tone, as seen in platforms like Colossyan. This ensures a consistent brand voice across multilingual campaigns.

5. Multi Language Translation

Users can input content in one language and the platform will translate, dub, and subtitle it into multiple languages with accent adaptation. The editor should allow instant switching between languages, similar to the multilingual pipeline used by Synthesys. This supports global distribution without separate production workflows.

6. Gesture and Expression Controls

Users can adjust gesture intensity, facial expressions, and head movement through preset controls or sliders. The rendering engine should synchronize these movements with phoneme timing for natural delivery, similar to the expressive control layers in Hour One. This transforms static avatars into expressive digital presenters.

7. Template and Editing Canvas

Users can start with structured templates and enhance videos using drag and drop editing tools for overlays, transitions, and media layers. The canvas should support multi format export and performance preview metrics, similar to the editing environment provided by Elai. This allows rapid production while maintaining visual consistency and engagement.

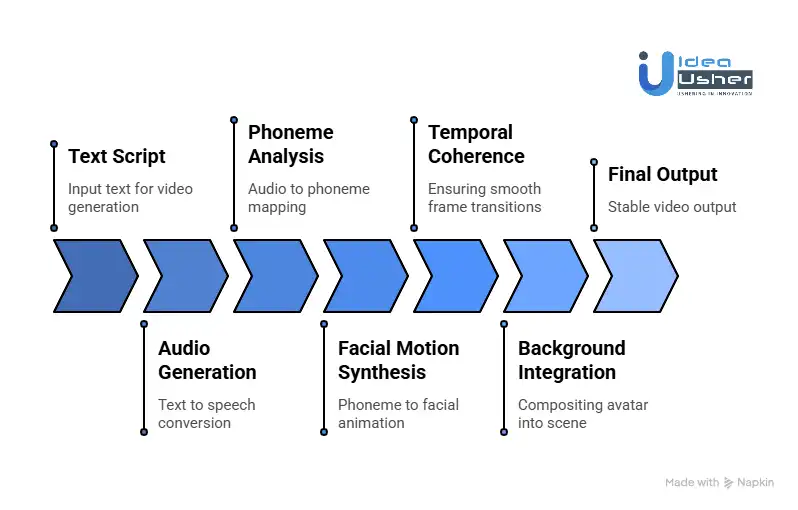

How Does an AI Avatar Video Generation Platform Work?

An AI avatar video generation platform converts your text into speech using neural text to speech models, then precisely maps that audio into phonemes so the system can predict how the lips and facial muscles should move.

It then uses 2D warping or 3D model based neural rendering to generate synchronized facial motion and finally composes each frame into a stable video output.

The High Level Pipeline

Before diving into individual components, let us understand the end to end workflow. An AI avatar platform typically processes video generation through five distinct stages:

Text Script → Audio Generation → Phoneme Analysis → Motion Synthesis → Video Rendering → Final Output

Each stage represents a specialized AI model or algorithmic process. Let us explore each one in detail.
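The stage ordering above can be sketched as a chain of functions that pass a shared state object along. This is only a minimal illustration: every function name, field, and number here (for example, 0.4 seconds of audio per word and 25 frames per second) is a hypothetical placeholder standing in for the real models described in the following sections.

```python
from dataclasses import dataclass, field


@dataclass
class PipelineState:
    """Carries intermediate results between stages (hypothetical structure)."""
    script: str
    audio_seconds: float = 0.0
    phonemes: list = field(default_factory=list)
    frames: int = 0
    log: list = field(default_factory=list)


def generate_audio(state):
    # Placeholder for a neural TTS model: assume ~0.4 s of audio per word.
    state.audio_seconds = 0.4 * len(state.script.split())
    state.log.append("audio")
    return state


def analyze_phonemes(state):
    # Placeholder for phoneme alignment: one pseudo-phoneme per letter.
    state.phonemes = [c for c in state.script.lower() if c.isalpha()]
    state.log.append("phonemes")
    return state


def synthesize_motion(state, fps=25):
    # Placeholder for motion synthesis: one motion frame per video frame.
    state.frames = round(state.audio_seconds * fps)
    state.log.append("motion")
    return state


def render_video(state):
    # Placeholder for neural rendering and encoding.
    state.log.append("render")
    return state


def run_pipeline(script):
    """Run the stages in the order shown in the pipeline diagram."""
    state = PipelineState(script=script)
    for stage in (generate_audio, analyze_phonemes, synthesize_motion, render_video):
        state = stage(state)
    return state


result = run_pipeline("Hello and welcome")
print(result.log, result.frames)
```

Keeping each stage behind a uniform "state in, state out" interface is one way to let individual models be swapped without touching the rest of the chain.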

1. Audio Generation

The journey begins with text. But modern platforms do not just convert text to speech. They convert text to performance.

How it works:

- Neural text to speech models such as Tacotron, FastSpeech, or VALL-E analyze the text for linguistic features such as punctuation, emphasis, sentiment, and context.

- The model generates a mel spectrogram, which is a time-frequency representation of the sound.

- A vocoder such as HiFi-GAN or WaveGlow converts this spectrogram into raw audio waveforms.

Modern systems do not just read words. They interpret them. The model identifies emotional cues in the text such as excitement, concern, or authority and modulates pitch, pace, and tone accordingly.

A sentence like “We’re launching a breakthrough product” receives a different vocal signature than “We regret to inform you…”

2. Phoneme Analysis

Once we have audio, we need to tell the avatar how to move its mouth. This is where phonemes come in.

What are phonemes?

Phonemes are the distinct units of sound in a language. English has about 44 phonemes such as “th,” “sh,” and “ee.” Each phoneme is associated with a mouth shape, although several phonemes can share the same one (for example, “p,” “b,” and “m” all close the lips).

How it works:

- The audio is passed through a phoneme recognition model often based on Wav2Vec or similar architectures.

- The model aligns each phoneme with a timestamp such as “At 0.2 seconds, say ‘M’; at 0.3 seconds, say ‘AH’…”

- These phonemes are mapped to visemes which are the visual counterparts of a sound.
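The mapping step in the list above can be sketched as a lookup from timestamped phonemes to visemes. The phoneme labels and viseme groupings below are illustrative assumptions, not a standard inventory; production systems use larger, language-specific tables.

```python
# Illustrative phoneme-to-viseme table; the groupings are an assumption,
# real systems use larger, language-specific inventories.
PHONEME_TO_VISEME = {
    "M": "lips_closed", "B": "lips_closed", "P": "lips_closed",
    "F": "lip_to_teeth", "V": "lip_to_teeth",
    "AH": "jaw_open", "AA": "jaw_open",
    "OO": "lips_rounded", "OW": "lips_rounded",
    "EE": "lips_spread", "IY": "lips_spread",
}


def to_viseme_track(aligned_phonemes, default="neutral"):
    """Convert (start_seconds, phoneme) pairs into (start_seconds, viseme)
    pairs, merging consecutive entries that share a viseme."""
    track = []
    for start, phoneme in sorted(aligned_phonemes):
        viseme = PHONEME_TO_VISEME.get(phoneme, default)
        if not track or track[-1][1] != viseme:
            track.append((start, viseme))
    return track


aligned = [(0.2, "M"), (0.3, "AH"), (0.45, "B"), (0.5, "P"), (0.6, "EE")]
print(to_viseme_track(aligned))
```

Note how “B” followed by “P” collapses into a single keyframe: both close the lips, so the animation layer only needs one target shape for that stretch of audio.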

The Technical Nuance

In real speech, your mouth does not snap from one shape to another. It flows. If you say “BOO,” your lips purse for the B and stay rounded for OO. If you say “BEE,” your lips stretch for EE. This blending is called coarticulation.

Advanced platforms use LSTM networks or Transformers to predict not just which viseme appears, but how the mouth should transition between them, creating fluid and natural movement.
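To make the idea of transitions concrete, here is a deliberately simple stand-in: a linear cross-fade between neighboring viseme keyframes. It is not the learned LSTM or Transformer predictor described above, only a hand-written baseline that shows what "blending between shapes" means. The function name and track format are assumptions, and keyframe times are assumed to be strictly increasing.

```python
def viseme_weights(track, t):
    """Return {viseme: weight} at time t by linearly cross-fading between
    the keyframes on either side of t. `track` is a time-sorted list of
    (time_seconds, viseme) keyframes with strictly increasing times."""
    if t <= track[0][0]:
        return {track[0][1]: 1.0}
    if t >= track[-1][0]:
        return {track[-1][1]: 1.0}
    for (t0, v0), (t1, v1) in zip(track, track[1:]):
        if t0 <= t <= t1:
            if v0 == v1:
                return {v0: 1.0}
            alpha = (t - t0) / (t1 - t0)
            return {v0: 1.0 - alpha, v1: alpha}


# "BOO": lips closed for the B, then rounding toward OO.
track = [(0.0, "lips_closed"), (0.2, "lips_rounded")]
print(viseme_weights(track, 0.05))
```

A learned model replaces the fixed linear ramp with timing that depends on the surrounding sounds, which is what lets the lips start rounding before the “OO” is actually voiced.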

3. Facial Motion Synthesis

This is the heart of the platform. The system must now animate a static image or 3D model to match the audio, complete with realistic movements of the lips, jaw, eyes, and eyebrows.

There are two primary technical approaches:

Approach A: 2D Warping Based Animation

How it works:

- The platform starts with a single reference image of the person.

- A landmark detection model like MediaPipe or OpenPose identifies key facial points such as corners of the mouth, edges of the eyes, and jawline.

- As phonemes arrive, a warping model often based on Thin Plate Splines or optical flow stretches and moves these landmarks.

- The surrounding pixels are interpolated to fill the gaps smoothly.

Strengths: Fast and requires minimal training data, just one photo.

Weaknesses: Limited head movement, can struggle with side profiles, and may cause occasional distortion.

Approach B: 3D Model Based Animation

How it works:

- A 3D Morphable Model or 3DMM is created from multiple reference images or video.

- This model contains a mesh of thousands of vertices forming a 3D face.

- As phonemes and emotions are detected, the system adjusts the vertices according to blend shapes which are predefined facial expressions such as smile, frown, or eyebrow raise.

- The 3D model is then rendered with realistic textures and lighting.

Strengths: Full head movement, consistent identity from any angle, and precise control.

Weaknesses: Requires more training data and is computationally heavier.
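The blend shape step in Approach B boils down to adding weighted per-vertex offsets to a neutral mesh. The sketch below uses a two-vertex toy mesh; the shape names, offsets, and weights are made up for illustration, and a real 3DMM has thousands of vertices.

```python
def apply_blend_shapes(base_vertices, blend_shapes, weights):
    """Return deformed vertices: base + sum(weight * per-vertex offset).

    base_vertices: list of (x, y, z) tuples
    blend_shapes:  {name: list of (dx, dy, dz) offsets, one per vertex}
    weights:       {name: float in [0, 1]}
    """
    result = [list(v) for v in base_vertices]
    for name, weight in weights.items():
        for vertex, offset in zip(result, blend_shapes[name]):
            for axis in range(3):
                vertex[axis] += weight * offset[axis]
    return [tuple(v) for v in result]


# Two-vertex toy "mesh": the left and right mouth corners.
base = [(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
shapes = {
    "smile": [(-0.2, 0.3, 0.0), (0.2, 0.3, 0.0)],
    "jaw_open": [(0.0, -0.5, 0.0), (0.0, -0.5, 0.0)],
}
print(apply_blend_shapes(base, shapes, {"smile": 0.5, "jaw_open": 0.2}))
```

Because the deformation is a weighted sum, a half smile combined with a slightly open jaw is just two weights, which is what gives this approach its precise control.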

Cutting edge platforms now use Neural Radiance Fields or 3D Gaussian Splatting. Instead of a traditional 3D mesh, they train a neural network to represent the person as a continuous volume. When you want a new angle or expression, the network queries this volume to generate the exact pixels needed. The result is photorealistic quality with natural lighting and skin texture.

4. Temporal Coherence

One of the biggest technical challenges is preventing the video from flickering or melting between frames.

The Problem:

Most AI models generate video frame by frame. Without memory between frames, the avatar’s skin texture, hair, or background might change subtly each time, creating an unsettling flicker known as semantic noise.

The Solution: Temporal Coherence Mechanisms

Approach 1: Autoregressive Generation

The model uses previous frames as input when generating the next frame. It remembers that the left cheek should look like it did 0.1 seconds ago.

Approach 2: Reference Sinks

A high quality reference frame is stored in memory. All subsequent frames are generated as variations from this anchor, ensuring consistent identity throughout the video.

Approach 3: 3D Anchoring

By using a 3D model as the underlying representation, the system guarantees that the nose, eyes, and mouth maintain their spatial relationships across all frames. The 2D video is simply a render of a stable 3D object.
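As a toy illustration of why conditioning on a previous frame and a fixed anchor damps flicker, the sketch below blends each noisy per-frame value with the previous output and a reference value. This is a simple smoothing filter written for illustration, not the autoregressive or reference-conditioned generation that real models perform; the weights and the one-dimensional "frames" are assumptions.

```python
def stabilize(raw_frames, reference, prev_weight=0.6, ref_weight=0.2):
    """Blend each raw per-frame vector with the previous output frame and a
    fixed reference vector. The remaining weight goes to the raw frame."""
    raw_weight = 1.0 - prev_weight - ref_weight
    previous = list(reference)
    output = []
    for raw in raw_frames:
        current = [
            raw_weight * r + prev_weight * p + ref_weight * a
            for r, p, a in zip(raw, previous, reference)
        ]
        output.append(current)
        previous = current
    return output


def max_jump(frames):
    """Largest change in any component between consecutive frames."""
    return max(
        abs(b - a)
        for f0, f1 in zip(frames, frames[1:])
        for a, b in zip(f0, f1)
    )


# A noisy one-dimensional "skin tone" value that should stay near 0.5.
noisy = [[0.5], [0.7], [0.3], [0.65], [0.35], [0.6]]
smooth = stabilize(noisy, reference=[0.5])
print(max_jump(noisy), max_jump(smooth))
```

The frame-to-frame jumps in the stabilized sequence are much smaller than in the raw one, at the cost of reacting more slowly to genuine changes, which is the same trade-off the real mechanisms have to manage.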

5. Background Integration and Scene Composition

The avatar rarely exists in isolation. Platforms must composite the animated face into a scene.

How it works:

- Matting models such as BackgroundMattingV2 separate the avatar from its original background.

- The foreground is layered onto a new background which could be static, video, or even AI generated.

- Lighting harmonization adjusts the avatar’s colors to match the new environment such as warm lighting in a cozy room or cool tones in an office.

- For full body avatars, pose estimation models ensure the body language matches the tone of the speech.
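The layering step above is, at its core, alpha compositing: the matte produced by the matting model decides, per pixel, how much avatar and how much background to show. The sketch below is a minimal version on nested lists of RGB tuples; the function names and the tiny one-row images are illustrative assumptions.

```python
def composite_pixel(foreground, background, alpha):
    """Standard 'over' blend for one RGB pixel; alpha is the matte value
    in [0, 1] (1 = fully avatar, 0 = fully background)."""
    return tuple(
        round(alpha * f + (1.0 - alpha) * b)
        for f, b in zip(foreground, background)
    )


def composite(fg_image, bg_image, matte):
    """Composite two images given as rows of RGB tuples, plus a matte of
    the same shape."""
    return [
        [composite_pixel(f, b, a) for f, b, a in zip(fg_row, bg_row, a_row)]
        for fg_row, bg_row, a_row in zip(fg_image, bg_image, matte)
    ]


fg = [[(200, 150, 120), (200, 150, 120)]]   # avatar pixels
bg = [[(20, 40, 60), (20, 40, 60)]]         # new background
matte = [[1.0, 0.5]]                        # solid face, soft hair edge
print(composite(fg, bg, matte))
```

Fractional matte values along hair and shoulder edges are what keep the cut-out from looking pasted on.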

The Training Pipeline

Creating a new avatar whether from a single photo or video footage requires a separate training process.

Scenario A: Single Photo Avatar (Zero Shot)

Process:

- A face parsing model identifies facial features in the photo.

- A generative model like StyleGAN or diffusion models is conditioned on this photo.

- The model learns the latent code that represents this specific person.

- During generation, this latent code is combined with motion vectors from a driving video or audio.

Limitation: Limited head movement and cannot show the back of the head or extreme angles.

Scenario B: Video Trained Avatar (Few Shot)

Process:

- The user provides 3 to 5 minutes of video talking to camera with various expressions.

- The platform extracts frames and performs 3D reconstruction to build a geometric model.

- A texture model learns the skin appearance under different lighting.

- An expression model maps the person’s unique way of smiling or frowning.

- All three components are combined into a unified digital twin.

Result: Full head movement, profile views, consistent identity, and personalized expressions.

Content Provenance and Ethics

As AI avatars become indistinguishable from humans, platforms must implement safeguards.

C2PA Metadata Embedding

The Coalition for Content Provenance and Authenticity standard allows platforms to cryptographically sign generated videos. Viewers can verify that the video was AI generated by checking its digital signature.

Invisible Watermarking

Frequency domain watermarks are embedded in the video pixels. These survive compression, screen recording, and social media re encoding, providing a persistent fingerprint.

Liveness Verification

Before allowing voice or face cloning, platforms require users to perform a liveness check such as turning their head, blinking, or speaking random phrases to prove they own the identity they are cloning.

How to Develop an AI Avatar Video Generation Platform?

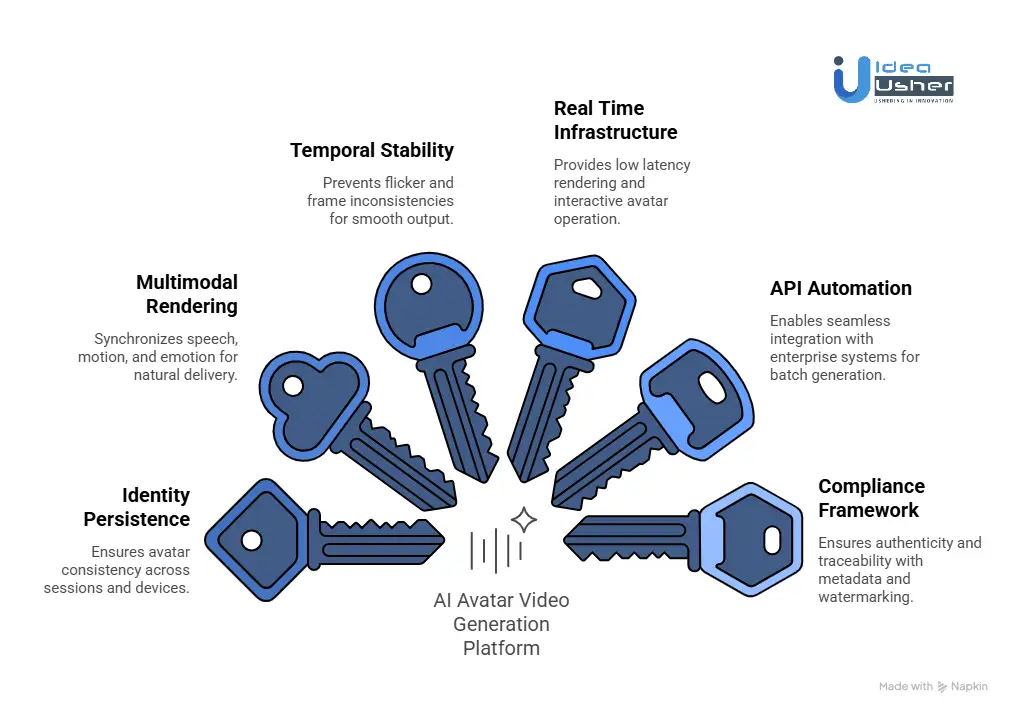

Developing an AI avatar video generation platform requires a persistent identity engine that preserves facial geometry and voice signatures across sessions. A synchronized multimodal pipeline must align speech synthesis, motion modeling, and temporal stabilization for coherent video output.

We have built multiple AI avatar video generation platforms over the years, and this is the structured approach we follow.

1. Identity Persistence

We design a persistent identity engine that locks avatar geometry, facial structure, texture response, lighting behavior, and voice signature into structured embeddings. This ensures the avatar remains consistent across sessions, campaigns, and devices without visual or tonal drift. For our clients, this transforms the avatar into a reusable digital asset with long term brand continuity.

2. Multimodal Rendering

We build a tightly orchestrated rendering pipeline that synchronizes text to speech, lip movement, emotion modeling, gesture generation, and scene composition. Our system aligns phoneme timing with micro expressions to create natural human like delivery. Instead of combining isolated AI outputs, we engineer a unified inference workflow that produces cohesive performance.

3. Temporal Stability

To prevent flicker and frame inconsistencies, we implement temporal anchoring techniques such as frame caching and diffusion based stabilization. This keeps facial identity, motion flow, and lighting transitions consistent across frames. The result is smooth cinematic output suitable for professional and enterprise deployment.

4. Real Time Infrastructure

We deploy GPU accelerated inference nodes optimized for low latency rendering and concurrent usage. By integrating WebRTC streaming and edge delivery layers, we ensure avatars can operate interactively across regions. Our infrastructure is designed to scale without compromising responsiveness or visual quality.

5. API Automation

We architect the platform with API first principles so it integrates seamlessly with CRMs, LMS systems, and enterprise automation tools. Secure endpoints enable batch generation, triggered video creation, and scripted workflows. This allows our clients to embed avatar generation directly into their operational pipelines.

6. Compliance Framework

We embed compliance safeguards directly into the architecture before enabling voice or face cloning features. Our implementation includes C2PA metadata tagging, cryptographic watermarking, and consent verification systems. This ensures authenticity, traceability, and alignment with evolving AI governance standards.

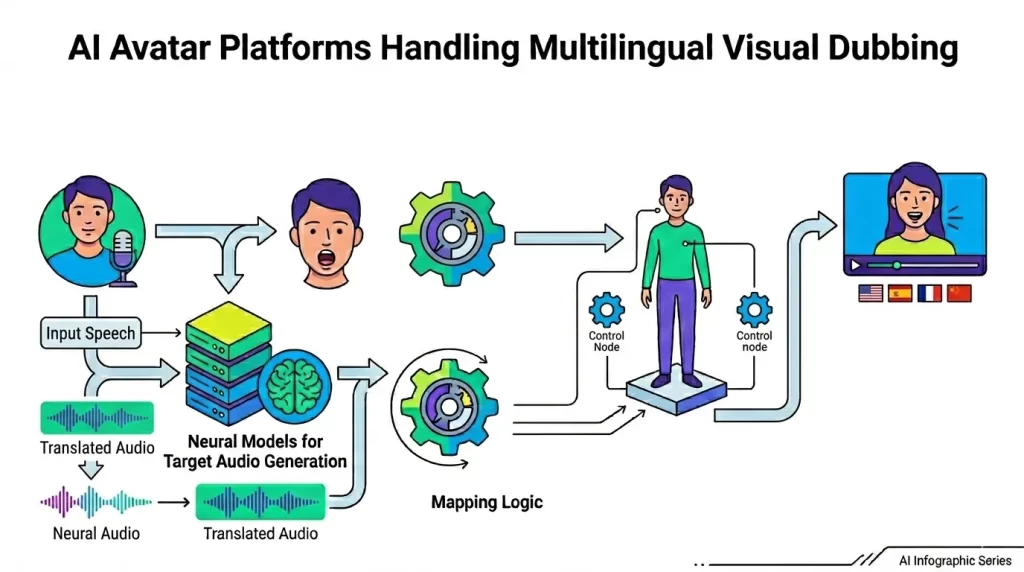

How Do AI Avatar Platforms Handle Multilingual Visual Dubbing?

AI avatar platforms transcribe and translate the original speech, then generate new target language audio using neural voice models that can preserve tone and identity. They analyze the new audio at the phoneme level and reanimate the lips to match each sound precisely.

The Audio Pipeline

1. Speech Recognition and Transcription

The journey begins with understanding what was said in the original video. Automatic Speech Recognition (ASR) models similar to Whisper or Wav2Vec convert the source audio into text.

Technical Nuance: Modern platforms do not just transcribe words. They capture prosody: the rhythm, stress, and intonation of speech. This emotional and contextual information is crucial for generating natural sounding target audio later.

2. Translation with Contextual Awareness

Once transcribed, the text must be translated. But this is not simple word for word substitution. Platforms integrate with specialized Language AI providers like DeepL, which understands nuance, idiom, and context.

Why This Matters: A phrase like “break a leg,” translated literally into German, would confuse audiences.

DeepL’s partnership with Synthesia enables context aware translation that preserves meaning and tone across 30+ languages. For broader language support, platforms like HeyGen offer translation into 175+ languages, while Synthesia supports 140+.

3. Target Audio Generation with Emotional Inflection

Now comes the critical step: generating speech in the target language that sounds like the original speaker. This goes far beyond basic text to speech.

Neural Voice Cloning: Platforms use neural TTS models trained on the original speaker’s voice to generate target language audio that maintains their vocal identity: pitch, timbre, and speaking style.

Emotional Mapping: Advanced systems analyze the emotional content of the source audio (excitement, concern, authority) and map it to the target speech. A passionate sales pitch in English becomes an equally passionate pitch in Japanese, not a flat, monotone recitation.

The Heart of the Challenge

This is where the real technical magic happens. The generated target audio has different phonemes (distinct units of sound) than the source. The avatar’s mouth must be completely re-animated to match these new sounds.

1. Phoneme Analysis and Viseme Mapping

What are phonemes? Phonemes are the building blocks of spoken language. English has about 44 phonemes, such as “th,” “sh,” and “ee.” But here is the complication: different languages use different phoneme sets. Japanese, for example, has about 15 consonants and 5 vowels, far fewer than English.

The Technical Solution:

The platform passes the target audio through a phoneme recognition model, often based on architectures like Wav2Vec or Whisper, that identifies each phoneme with precise timestamps.

These phonemes are then mapped to visemes, the visual mouth shapes associated with each sound. But because languages differ, the mapping is not one to one:

| Source Language (English) | Target Language (Japanese) | Viseme Mapping Challenge |
| --- | --- | --- |
| “th” sound (e.g., “think”) | No direct equivalent | Map to closest Japanese sound (“s”) |
| “r” vs. “l” distinction | Single sound | Must choose appropriate mouth shape |
| Diphthongs (e.g., “eye”) | Pure vowels | Adjust timing and shape |

2. Solving Coarticulation Across Languages

The Coarticulation Problem: In natural speech, mouth shapes do not snap from one position to another. They flow. The shape for “B” influences the following “OO” sound. This blending is called coarticulation, and it becomes considerably more complex when switching languages, because each language has its own sound sequences and transitions.

How Platforms Handle It:

Advanced models use LSTM networks or Transformers trained on multilingual speech datasets to predict not just which viseme appears, but how the mouth should transition between them. ByteDance’s OmniHuman, trained on over 19,000 hours of video data, understands these complex relationships across languages and cultural contexts.

3. The “Visual Dubbing” Breakthrough

Traditional dubbing simply added translated audio to existing video, creating the infamous “Godzilla movie effect” where mouth movements do not match the words.

Modern platforms perform true visual dubbing. They re-animate the face entirely. InfiniteTalk, available through WaveSpeedAI and built on a 14 billion parameter Diffusion Transformer architecture, synthesizes full body motion aligned with new audio. Every syllable triggers not just lip movement but corresponding head turns, facial expressions, and subtle micro expressions.

Advanced Architectures for Multilingual Excellence

1. The Cascaded Approach

Kuaishou Technology’s Kling Avatar v2 employs a sophisticated two stage cascaded architecture:

- MLLM Director Stage: A multimodal large language model analyzes the audio and produces a “blueprint” video governing high level semantics: character motion, emotions, and expression timing.

- Parallel Generation Stage: Guided by the blueprint keyframes, the system generates multiple sub clips in parallel, preserving fine grained details while encoding high level intent.

This architecture delivers enhanced lip synchronization accuracy across multiple languages, including Chinese, English, Japanese, and Korean.

2. The Streaming Architecture

Meituan’s InfiniteTalk tackles a fundamental problem in long form multilingual content: quality degradation over time.

The Problem: Traditional models generate video in chunks. When re-encoding each chunk, they introduce cumulative errors: color shifts, detail loss, and eventual identity drift.

The Solution: InfiniteTalk’s streaming generation architecture uses a clever “context frames” mechanism. When generating a new segment, the model does not just rely on reference frames. It uses the last few frames of the previous segment as “momentum information.” This ensures seamless transitions and consistent quality even for videos up to 10 minutes long.
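The bookkeeping behind a context frames scheme can be sketched as a segment planner: each new segment is paired with the tail of the previous one, which a model would receive as conditioning. This only illustrates the scheduling idea and is not InfiniteTalk's implementation; the function name and the segment and context lengths are assumptions.

```python
def plan_segments(total_frames, segment_length, context_frames):
    """Return a list of (context_range, new_range) pairs. Each range is a
    (start, end) tuple with an exclusive end. The first segment has an
    empty context; later segments reuse the tail of the previous one."""
    if not 0 <= context_frames < segment_length:
        raise ValueError("context_frames must be smaller than segment_length")
    plan = []
    start = 0
    while start < total_frames:
        end = min(start + segment_length, total_frames)
        context = (max(0, start - context_frames), start)
        plan.append((context, (start, end)))
        start = end
    return plan


for context, new in plan_segments(total_frames=200, segment_length=81, context_frames=9):
    print("condition on", context, "-> generate", new)
```

Because every segment sees how the previous one ended, motion can carry across the boundary instead of restarting from a static reference pose.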

3. The Latent Space

The latest advancement comes from Meituan’s LongCat Video Avatar which introduces Cross Chunk Latent Stitching.

The Innovation:

Instead of decoding each video segment to pixels and re-encoding it for the next segment, which causes quality loss, LongCat operates entirely in the latent space. It stitches segments together while they are still compressed representations, eliminating the decode and re-encode cycle entirely.

The Result: 5 minute videos (approximately 5,000 frames) maintain perfect color stability and crisp details, which is essential for professional multilingual content where visual quality must match the source.

Beyond Lips: Full Body Multilingual Expression

1. The “Sparse Frame” Paradigm Shift

Traditional video dubbing focused exclusively on mouth regions, a local inpainting approach that left bodies stiff and unexpressive. This created an uncanny disconnect: passionate audio paired with frozen posture.

The New Paradigm: “Sparse frame video dubbing” fundamentally redefines the task. Instead of repairing mouth regions, it is framed as “sparse keyframe guided full body video generation.”

How It Works:

- The system preserves only a few keyframes from the source video as “visual anchors.”

- These anchors lock in identity, clothing, and setting.

- The model is then free to generate entirely new full body movements synchronized to the new audio.

- When the target language conveys excitement, the avatar can gesture naturally. When it is solemn, posture reflects that tone.

2. Emotional Micro Gestures Across Cultures

Different cultures express emotion differently through body language. An enthusiastic gesture in Italian might differ from its Japanese equivalent.

The Technical Solution: Models like OmniHuman are trained on diverse multilingual datasets that include cultural context. They learn not just what words sound like, but how people from different cultures move when speaking those words.

LongCat Video Avatar takes this further with Disentangled Unconditional Guidance, which teaches the model that “silence” does not mean “dead.” During speech pauses, the avatar naturally blinks, adjusts posture, or breathes, just like a real person would, regardless of language.

Quality Assurance and Benchmarks

The industry uses several key metrics to evaluate multilingual dubbing quality:

| Metric | What It Measures | Why It Matters |
| --- | --- | --- |
| Sync-C / Sync-D | Lip sync accuracy | Ensures mouth movements match new audio |
| FID (Fréchet Inception Distance) | Visual quality | Detects artifacts or quality loss |
| FVD (Fréchet Video Distance) | Temporal consistency | Measures smoothness across frames |
| CSIM | Identity consistency | Confirms the person still looks like themselves |

LongCat Video Avatar achieved state of the art scores across all these metrics on benchmarks like HDTF, CelebV-HQ, and EMTD.
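Of the metrics above, CSIM is the simplest to illustrate. It is commonly computed as the cosine similarity between face identity embeddings of a reference image and of the generated frames. The sketch below assumes the embeddings have already been produced by some face recognition encoder, which is outside the snippet; the three-dimensional vectors are toy values.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def csim(reference_embedding, frame_embeddings):
    """Average cosine similarity between a reference identity embedding
    and the embedding of every generated frame."""
    scores = [cosine_similarity(reference_embedding, e) for e in frame_embeddings]
    return sum(scores) / len(scores)


# Toy 3-dimensional embeddings; real face encoders output hundreds of dimensions.
reference = [1.0, 0.0, 0.0]
frames = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
print(csim(reference, frames))
```

A score close to 1 means the generated frames stay close to the reference identity; a score that falls over the length of a video is a sign of identity drift.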

Human Evaluation

Numbers do not tell the whole story. Platforms increasingly rely on human perception tests. In a comprehensive study with 492 participants, LongCat Video Avatar outperformed commercial solutions, including HeyGen and Kling Avatar 2.0, across entertainment, education, and daily life scenarios.

Reviewers specifically noted:

- Natural micro expressions during silent periods.

- Stable identity throughout long videos.

- Diverse non repetitive movements.

- Superior performance in both English and Chinese.

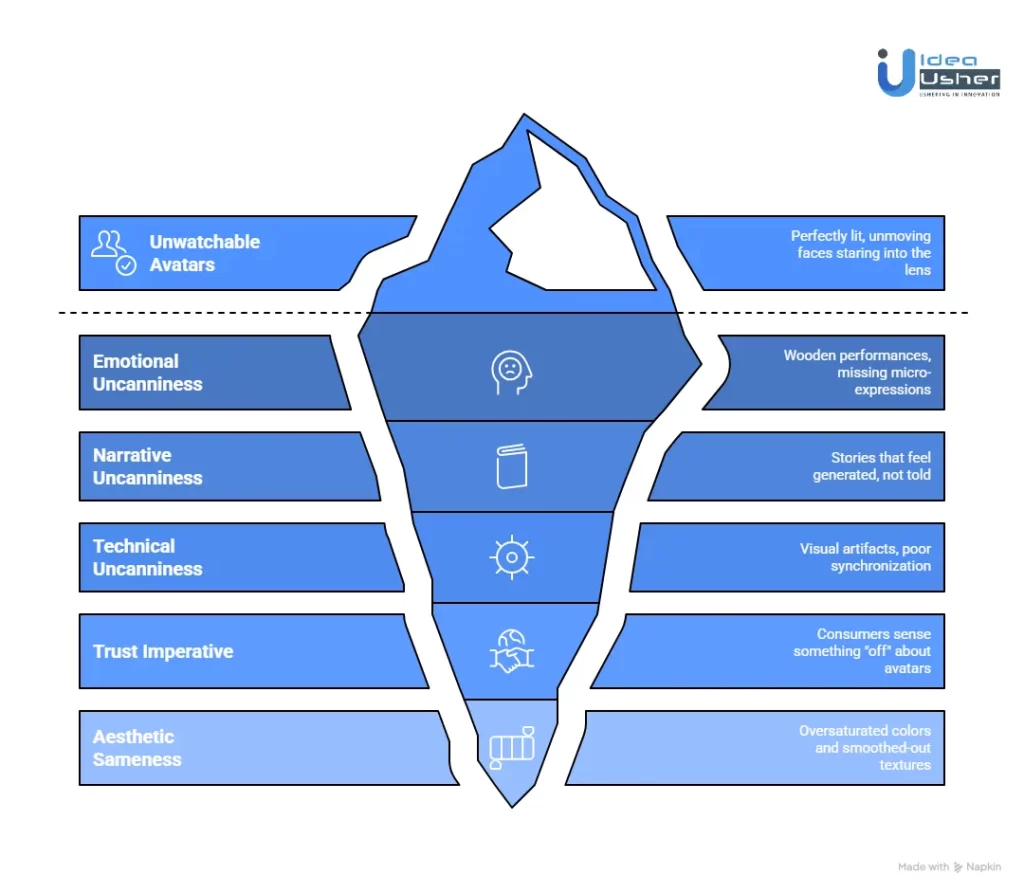

How to Prevent the “Uncanny Valley” Effect in AI Avatar Video Platforms?

We’ve entered a new phase in AI avatar development. The low-hanging fruit (basic lip synchronization, stable identities, photorealistic rendering) has been harvested. Today’s challenge is not technical capability. It is emotional authenticity.

The industry has effectively crossed the visual uncanny valley. Modern avatars no longer flicker, melt, or distort. But they’ve stumbled into what Colin Melville calls the “boredom valley,” a state of technical perfection that’s emotionally hollow.

LinkedIn and corporate inboxes are flooded with “perfectly lit, unmoving faces staring directly into the lens, delivering monologues for two minutes straight. It’s efficient. It’s scalable. And it’s unwatchable.”

The Trust Imperative

For businesses, this is not just an aesthetic concern. In marketing, the uncanny valley directly impacts trust and conversion.

When consumers sense something “off” about an AI avatar, their brains flag it as untrustworthy. “If your brand looks like it was generated entirely by a prompt, you risk losing the one thing that drives sales: trust.”

The challenge, then, is multidimensional. Platforms must solve not only the technical uncanny valley (visual artifacts, poor synchronization) but also the emotional uncanny valley (wooden performances, missing micro-expressions) and the narrative uncanny valley (stories that feel generated, not told).

Before an avatar can act human it must look human. This section explores the cutting-edge technical approaches that platforms use to eliminate visual uncanniness.

1. Beyond Basic Lip Sync

Traditional audio-driven avatars map speech to mouth movements, a necessary but insufficient condition for realism. The missing ingredient is nuanced emotional expression.

The AUHead Breakthrough

Researchers have introduced AUHead, a novel two-stage method that disentangles fine-grained emotion control from audio using Action Units (AUs). Action Units, derived from the Facial Action Coding System (FACS), represent specific muscle movements like “inner brow raiser” or “lip corner puller.”

How it works:

Stage 1: A large audio-language model analyzes speech using spatial-temporal AU tokenization and an “emotion-then-AU” chain-of-thought mechanism. This captures subtle emotional cues often missed by conventional models.

Stage 2: An AU-driven controllable diffusion model synthesizes talking-head videos conditioned on these AU sequences. The result is “competitive performance in emotional realism, accurate lip synchronization, and visual coherence, significantly surpassing existing techniques.”

2. The 3D Revolution

2D warping approaches have inherent limitations. They cannot handle extreme head angles and they struggle with identity consistency. The solution lies in 3D.

3DXTalker: A Unified Framework

3DXTalker represents a significant leap in expressive 3D talking avatar generation. It unifies four critical dimensions:

- Identity preservation: Scalable identity modeling via 2D-to-3D data curation

- Lip synchronization: Frame-wise amplitude and emotional cues beyond standard speech embeddings

- Emotional expression: Nuanced expression modulation

- Spatial dynamics: Natural head-pose motion with prompt-based stylized control

The key innovation is treating avatars as 3D entities with volumetric consistency, not just 2D surfaces being warped. This ensures that when an avatar turns its head, the relationship between nose, eyes, and mouth remains physically plausible.

Instruction-Driven Expression

What if you could simply tell an avatar what emotion to convey? Recent research makes this possible.

I2FET: Instruction to Facial Expression Transition

The Instruction-driven Facial Expression Decomposer (IFED) module learns the correlation between textual descriptions and facial expression features. The I2FET method then generates smooth expression transitions based on natural language instructions.

Practical application: A creator can type “look skeptical, then gradually become convinced” and the avatar will execute a smooth, physiologically plausible transition between these emotional states. This expands the “repertoire of facial expressions and the transitions between them… greatly.”
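To give a feel for what a “smooth transition between emotional states” means numerically, the sketch below eases a set of Action Unit intensities from one preset to another. It is a hand-written interpolation, not the learned I2FET method. The AU numbers follow FACS (AU4 brow lowerer, AU12 lip corner puller), but the intensity presets for “skeptical” and “convinced” are assumptions made up for this example.

```python
# Hypothetical Action Unit intensity presets (0 = relaxed, 1 = maximum).
# AU numbers follow FACS; the intensities chosen for each mood are assumptions.
SKEPTICAL = {"AU4_brow_lowerer": 0.6, "AU12_lip_corner_puller": 0.0}
CONVINCED = {"AU4_brow_lowerer": 0.0, "AU12_lip_corner_puller": 0.7}


def smoothstep(x):
    """Ease-in/ease-out curve on [0, 1]."""
    x = min(1.0, max(0.0, x))
    return x * x * (3.0 - 2.0 * x)


def transition(start, end, num_frames):
    """Return per-frame AU intensities easing from `start` to `end`.
    Requires num_frames >= 2 and identical keys in both presets."""
    frames = []
    for i in range(num_frames):
        s = smoothstep(i / (num_frames - 1))
        frames.append({au: (1 - s) * start[au] + s * end[au] for au in start})
    return frames


frames = transition(SKEPTICAL, CONVINCED, num_frames=5)
for frame in frames:
    print({au: round(v, 3) for au, v in frame.items()})
```

The easing curve starts and ends slowly, which avoids the abrupt onset that makes a purely linear change of expression look mechanical.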

High-Frame-Rate Capture and Generation

Micro-expressions happen fast, typically within 40-500 milliseconds. Most consumer AI avatar tools operate at 30-60 fps, inherently missing the velocity and asymmetry data critical to micro-expression fidelity. Professional motion capture systems record at 240-1000 fps.
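The arithmetic behind that gap is easy to check: multiply the duration of a micro-expression by the frame rate to see how many frames are available to describe it. The helper name below is illustrative.

```python
def frames_covering(duration_ms, fps):
    """Number of frame intervals that fit inside an event of the given duration."""
    return duration_ms / 1000.0 * fps


for fps in (30, 60, 240, 1000):
    shortest = frames_covering(40, fps)
    longest = frames_covering(500, fps)
    print(f"{fps:>4} fps: {shortest:.1f} to {longest:.1f} frames")
```

At 30 fps, a 40 millisecond micro-expression spans barely more than a single frame, so its onset and release cannot be resolved; at 240 fps the same event spans nearly ten frames.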

Forward-thinking platforms are bridging this gap through:

- Training on high-frame-rate data to teach models the temporal dynamics of micro-expressions

- Temporal upsampling networks that infer intermediate frames with realistic motion blur

- Physiologically-informed generation that models the actual timing of facial muscle activations

3. The Great Paradox

Here is the counterintuitive truth at the heart of modern AI avatar development. To make something feel authentically human you must make it less technically perfect.

The Problem with Perfect Data

AI models are trained on existing media. And what media have we fed them? A century of cinema, advertising, and filtered social media: an “unreal, hyper-stylized version of ourselves.”

From Botticelli’s impossibly elongated Venus to Hollywood’s three-point lighting, we’ve been “running away from ‘real’ as fast as the technology would carry us.”

When we train AI on this curated perfection, it “simply spits back the unreal lens we gave it.” The result is technically flawless but fundamentally inauthentic.

Architecting the Imperfect

Colin Melville’s experiment in a London hotel room offers a masterclass in escaping the uncanny valley through intentional imperfection.

His approach:

- Generate images that capture the world as we actually see it, not as a lighting director wants us to see it

- Introduce accidental camera shake, the kind that happens when a real operator holds a camera

- Layer in rough, unpolished audio rather than studio-perfect sound

- Keep the edit slightly loose, without the precise cuts of commercial filmmaking

- Remove the music and let silence and ambient sound carry the moment

The result? A sixty-second clip that looked like a genuine camera test. Making the footage “look a bit ‘less perfect’” is what made it so impressive. It looked “not AI,” which has become “the new battleground”.

The Aesthetic Sameness Trap

AI tools tend to gravitate toward a specific “look”: oversaturated colors and smoothed-out textures that industry experts call “aesthetic sameness”. This is the visual signature of generative AI, and audiences are learning to recognize it.

The solution: Use AI for generation, but always push the output through post-production tools to add grain, lens flares, or real-world textures that break the “AI loop”. The human thumbprint, meaning those small details that feel earned and not just calculated, is what signals authenticity.
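As a rough illustration of the grain step, the sketch below adds Gaussian luminance noise to a grayscale frame represented as nested lists. It is a toy stand-in for what a real post-production tool does, and the function name, the `strength` parameter, and its default value are assumptions chosen for the example.

```python
import random


def add_grain(frame: list, strength: float = 8.0, seed: int = 0) -> list:
    """Add Gaussian luminance noise to a grayscale frame (rows of 0-255 ints)."""
    rng = random.Random(seed)
    noisy = []
    for row in frame:
        noisy.append([
            # Clamp so the noisy value stays a valid 8-bit pixel.
            max(0, min(255, round(pixel + rng.gauss(0.0, strength))))
            for pixel in row
        ])
    return noisy


flat_frame = [[128] * 8 for _ in range(4)]  # a perfectly smooth gray patch
grainy_frame = add_grain(flat_frame)
```

Real film grain varies with brightness and is spatially correlated, so a production pipeline would typically use a dedicated grain plate or plugin. The point here is only that a small, controlled amount of noise breaks up the overly smooth textures described above.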

4. Teaching Machines to Act

Technical perfection is table stakes. The real differentiation comes from performance: treating the avatar not as software but as “a difficult actor who needed directing”.

From Newsreader to Performer

The default AI avatar performance is the newsreader: a static face, locked-on eye contact, and a continuous monologue. Real cinema does not work this way. “We rarely hold on a single shot of a talking head for more than a few seconds. We cut away. We use reaction shots. We change the angle. Film theory 101.”

Directing your digital self:

- Start with the story, not the tech. Build narratives that require visual dynamism.

- Cut away. Use high-end B-roll to illustrate points and compensate for the avatar’s inability to improvise.

- Play with pacing. Allow for pauses that feel natural rather than algorithmic.

- Use sound design to underscore emotional beats the avatar’s face might miss.

The Micro-Expression Gap

A 2023 study published in ACM Transactions on Graphics compared AI-generated and motion-captured micro-expressions. FACS-certified coders identified 92% of studio-captured micro-expressions with high reliability. AI-generated equivalents achieved only 41% identification accuracy.

AI models “lack true causal modeling of emotion: they map pixel patterns to output frames, not psychological states to neuromuscular sequences”. A model may generate a convincing “sad blink,” but it does not know that a 300ms blink duration paired with downward eye movement signifies grief, not fatigue.

The hybrid solution is to blend AI and motion capture, as forward-thinking studios now do:

- Pre-visualization with AI to block scenes and test pacing

- Targeted mocap capture for emotionally complex sequences requiring micro-expression nuance

- AI-assisted retargeting to adapt realistic performances to stylized avatars while preserving timing and asymmetry

- FACS-guided QA using automated analyzers to flag deviations in Action Unit timing
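The FACS-guided QA step can be sketched as a simple timing comparison. The example below assumes that both the reference performance and the generated one have already been reduced to Action Unit events with an onset and a duration. The `AUEvent` structure, the 50 ms tolerance, and the sample values are illustrative assumptions, not the output format of any specific analyzer.

```python
from dataclasses import dataclass


@dataclass
class AUEvent:
    action_unit: str    # FACS code, e.g. "AU45" for a blink
    onset_ms: float     # when the activation starts
    duration_ms: float  # how long it lasts


def flag_timing_deviations(reference, generated, tolerance_ms=50.0):
    """Return (action_unit, reason) pairs where generated timing drifts from reference."""
    ref_by_au = {e.action_unit: e for e in reference}
    flags = []
    for event in generated:
        ref = ref_by_au.get(event.action_unit)
        if ref is None:
            flags.append((event.action_unit, "no reference event"))
            continue
        if abs(event.onset_ms - ref.onset_ms) > tolerance_ms:
            flags.append((event.action_unit, "onset drift"))
        if abs(event.duration_ms - ref.duration_ms) > tolerance_ms:
            flags.append((event.action_unit, "duration drift"))
    return flags


reference = [AUEvent("AU45", 1000, 300), AUEvent("AU1", 1200, 400)]
generated = [AUEvent("AU45", 1010, 120), AUEvent("AU1", 1190, 420)]
flags = flag_timing_deviations(reference, generated)
```

In this sample the generated blink starts on time but lasts 120 ms instead of 300 ms, so it is the only event flagged. That is exactly the kind of difference that changes how a blink reads emotionally.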

The Silence Problem

One of the most telling signs of an AI avatar is what happens when it stops speaking. Traditional models treat silence as “dead”: the avatar freezes unnaturally.

Advanced systems like LongCat-Video-Avatar address this with Disentangled Unconditional Guidance, which teaches the model that silence doesn’t mean dead. During speech pauses, the avatar naturally blinks, adjusts posture, or breathes, just like a real person would.
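For contrast with the learned approach, here is what a naive rule-based fallback might look like: scheduling blinks at random intervals inside each silent stretch of the audio track. This is not how LongCat-Video-Avatar works. The function name, the interval format, and the four-second average gap are assumptions made for the sketch.

```python
import random


def schedule_idle_blinks(silences, mean_gap_s=4.0, seed=0):
    """Place blink timestamps inside silent intervals given as (start_s, end_s)."""
    rng = random.Random(seed)
    blinks = []
    for start, end in silences:
        # Exponentially distributed gaps avoid a metronome-like rhythm.
        t = start + rng.expovariate(1.0 / mean_gap_s)
        while t < end:
            blinks.append(round(t, 3))
            t += rng.expovariate(1.0 / mean_gap_s)
    return blinks


blinks = schedule_idle_blinks([(2.0, 10.0), (15.0, 30.0)])
```

A scheduler like this keeps the face from freezing, but it cannot coordinate blinks with posture shifts or breathing, which is why a model that learns idle behavior from real footage tends to look more natural.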

5. Cinematic Context

An avatar does not exist in a vacuum. The environment, the cinematography, and the editing all contribute to or detract from its perceived humanity.

The Camera’s Eye vs. The Human Eye

“There is a fundamental difference between how a camera sees and how a human sees,” Melville observes. AI models trained on cinematic footage learn the stylized movement of cameras: overhead shots, tracking shots, smooth cuts. But we do not experience the world this way. “We walk, our vision bouncing with every step.”

The solution: Choose lenses closer to the human field of vision (50mm) and frame shots that feel “less perfect and more observational”.
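To see what a 50mm lens means in terms of angle of view, the sketch below applies the standard rectilinear lens formula, angle = 2 × atan(sensor width / (2 × focal length)). It assumes a full-frame sensor 36mm wide, which is an assumption for this example and not something the quoted advice specifies.

```python
import math


def horizontal_fov_degrees(focal_length_mm: float, sensor_width_mm: float = 36.0) -> float:
    """Horizontal angle of view for a rectilinear lens focused at infinity."""
    return math.degrees(2.0 * math.atan(sensor_width_mm / (2.0 * focal_length_mm)))


for focal in (24, 50, 85):
    print(f"{focal}mm: {horizontal_fov_degrees(focal):.1f} degrees")
```

Under that assumption a 50mm lens covers a little under 40 degrees horizontally, which sits between the exaggerated width of a 24mm lens and the compressed look of an 85mm. When prompting a video model, naming the focal length explicitly is one way to steer it away from dramatic wide or telephoto framing.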

The Hybrid Approach

The most effective marketing is not 100% AI. Leading brands use generative video to enhance real-world footage, for example by placing a real person in a digitally enhanced environment. This hybrid approach “keeps your brand grounded in reality while utilizing the speed of AI.”

The “Gut Check” Filter

Before publishing, ask: “Does this evoke an emotion or just show a high-res image?” AI is “great at generating pixels, but it’s terrible at understanding ‘cringe.’” Human designers must vet every frame to ensure the messaging feels earned and the tone aligns with brand voice.

Top 5 AI Avatar Video Generation Platforms

We have researched the market and identified five AI avatar video generation platforms that offer distinct technical capabilities and scalable workflows. Each of these platforms can generate studio-quality avatar videos using neural rendering and speech synthesis models.

1. Synthesia

Synthesia is one of the most widely adopted AI avatar video platforms in the U.S. enterprise market. It allows businesses to convert scripts into professional videos using realistic AI avatars and multilingual voiceovers. The platform is heavily used for corporate training, compliance modules, onboarding, and internal communications because of its strong security, scalability, and brand customization features.

2. HeyGen

HeyGen is known for its highly realistic avatars and strong lip sync accuracy. It enables users to generate videos from text, clone voices, and even create personalized avatar versions of themselves. The platform is popular among marketers, educators, and content creators due to its ease of use and fast video production workflow.

3. D-ID

D-ID specializes in transforming photos into talking AI avatars using advanced facial animation technology. It is frequently used for personalized marketing videos, storytelling, customer engagement, and interactive experiences. The platform also supports voice cloning and multilingual content, making it flexible for global communication strategies.

4. Colossyan

Colossyan focuses primarily on AI-generated training and educational videos. Businesses can convert documents, slides, or scripts into structured learning modules with AI presenters. It is especially popular in HR departments, corporate L&D teams, and compliance training environments where consistent and scalable video content is required.

5. Vidyard

Vidyard integrates AI avatars into its broader video marketing and sales enablement ecosystem. It allows professionals to create personalized avatar videos based on their own likeness for outreach, demos, and internal communication. The platform is widely used by sales teams and marketing departments aiming to scale personalized video engagement efficiently.

Conclusion

Building an AI avatar video generation platform is not just about text to speech and templates. It requires a structured multimodal AI stack, temporal stabilization, scalable cloud infrastructure, and compliance by design. You should treat it as programmable visual communication infrastructure rather than simple content production. When built correctly, it can gradually evolve into a scalable AI-driven media ecosystem that supports long-term growth.

Looking to Develop an AI Avatar Video Generation Platform?

IdeaUsher can help you develop an AI avatar video generation platform by architecting a multimodal AI stack that combines neural speech synthesis, facial animation, and scalable GPU infrastructure. Our team can design temporal stabilization and real-time rendering pipelines so the system performs reliably in production.

With 500,000+ hours of coding experience, our ex-MAANG and FAANG engineers architect scalable AI infrastructure that powers next generation digital interaction.

What we deliver:

- Real-time interactive avatars with <200ms latency

- Enterprise-grade security with on-prem/VPC deployment

- Custom model training for your proprietary “Digital Twins”

- Scalable batch generation for 10,000+ personalized videos

- Deep CRM/API integration for agentic workflows

Check out our latest projects to witness the kind of high-performance platforms we build for forward-thinking companies like yours.

Work with ex-MAANG developers to build next-gen apps. Schedule your consultation now.

FAQs

A1: Development begins with designing a structured multimodal AI stack that integrates text generation, neural speech synthesis, facial animation models, and temporal stabilization pipelines. The architecture must include GPU orchestration, rendering engines, storage layers, and consent management systems. The platform should be treated as programmable visual communication infrastructure rather than a simple content production tool.

A2: Core features include script to video generation, neural voice cloning, precise lip sync alignment, emotion modulation, avatar customization, and API based automation. Advanced platforms support multilingual synthesis, real time rendering, analytics dashboards, and embedded content provenance mechanisms. These features operate on coordinated AI pipelines instead of static template logic.

A3: The cost depends on model complexity, GPU infrastructure, latency targets, and compliance requirements. Production grade systems require investment in multimodal model training, inference optimization, and scalable cloud deployment. Expenses increase significantly when real time interactivity and enterprise security controls are included.

A4: The platform converts structured text into synthetic speech and maps phonemes to facial motion using neural rendering models that maintain temporal consistency. An orchestration layer manages inference workloads, storage, and delivery across distributed cloud infrastructure. When engineered correctly the system evolves into a scalable AI driven media ecosystem that supports long term growth.